- Ford’s CEO doesn’t think he can upskill his entire workforce quickly enough: I don’t think they are going to make it. Ford employees, if you are reading this: surpriseeeeee!!!

- DeepMind’s co-founder thinks there will be a serious number of losers among white-collar workers because of AI. Like when you don’t scratch the right lottery ticket, but with a lot more effort and a huge debt.

- The American science fiction writer Ted Chiang provokes: AI researchers are increasing the concentration of wealth to such extreme levels that the only way to avoid societal collapse is for the government to step in.

- New research uses AI to rephrase human written communication in a more empathetic way and improve interactions. No more fights for logo placement on the Nascar slide inside big corporations?

- Stephen Marche is the nth writer using ChatGPT to compose his masterpiece. Surely, it will be remembered 100 years from now.

- The Writers Guild of America feels like this AI thing is a bit threatening and goes on strike. The BBC shows them they are right.

- A project to create an unstoppable fashion blogger shows how we’ll have infinite garbage as long as somebody consumes it.

P.s.: This week’s Splendid Edition of Synthetic Work is titled And You Thought That Horoscopes Couldn’t Be Any Worse.

It covers what MakeMyTrip, Wendy’s, Citadel Securities, Takeda Pharmaceutical, and Ingenio are using AI for.

It also reviews the amazing MacGPT and shows you how much you can achieve with a good prompt and when that is not good enough anymore.

As you know, Synthetic Work uses Memberful to manage your memberships and gate online access to various sections of the website.

Memberful has been a great partner during these first three months (plus one to set up the whole infrastructure that serves the website).

A couple of weeks ago, they decided to write a profile about me and why I decided to start this newsletter:

Launching a newsletter about artificial intelligence for the people: Synthetic Work

This week, they decided to publish a second part, where I shared more about the challenges of writing a newsletter and building a brand that lasts: People don’t read for content.

As if you didn’t have enough to read already.

Alessandro

This might be the most important thing I mentioned in these first three months: Ford’s CEO, Jim Farley, interviewed by Joanna Stern for the Wall Street Journal, talks about the transformation that his company is going through.

He talks about various things, including his vision of how AI can make a difference in commercial vehicles, but the one bit that is truly important is at minute 28:46.

Nikisha Alcindor, from the STEM Educational Institute, sits in the audience and asks: “What’s your plan to build up your workforce so they are ready for all the tech that you are going to bring into Ford?”

Farley, who comes across as significantly more genuine and honest than the overwhelming majority of CEOs I have met in my life, candidly replies:

We’re not ready. We’re a long way from ready, but we’re getting ready.

…

Upskilling, this is the hard part. I’m not sure we can upskill everyone. I don’t think they are going to make it. It’ll take too long. So there’s gonna be a big shift in know-how in the company. We’ll always need powertrain engineers, calibration engineers, supply chain for seats and, you know, people have to do that. But there’s a new skillset we’re gonna need and I don’t think I can teach everyone the new. It’ll take too much time.

So there’s gonna be disruption in this transition, but we need young people who are trained and they… I think that’s our application.

The whole interview is worth watching, but this bit is particularly suggestive.

(Thanks to Matthew for bringing this interview to my attention)

In these three months, you have read about a growing number of world leaders, in business or in research, being open about the impact of AI on jobs.

Particularly impressive were last week’s stories I mentioned in Issue #11 – The Nutella Threshold.

But when we talk about the impact of AI on jobs, the conversation shouldn’t be just about the risk of displacement. It should also be about reskilling and upskilling.

Ford’s CEO is a prime example of something that I expect (and I have seen with other technology waves) from other companies around the world: your company may not be able to help you upskill to remain competitive in a business world powered by AI. You have to learn by yourself if you don’t want to be left behind.

The Splendid Edition of Synthetic Work, and the new tools that I’m putting online for paid members (like the How to Prompt database), are early steps to try and help people upskill themselves.

And then there is another piece of the puzzle:

When an emerging technology is so impactful that it gets adopted at planetary scale, the biggest challenge is never the technology itself. Far from it. It's change management. I've seen this with automation for over a decade. Generative AI will be the biggest change management…

— Alessandro Perilli ✍️ Synthetic Work 🇺🇦 (@giano) May 10, 2023

In my 23-year career, I’ve seen countless technology providers fail and disappear, despite amazing tech, because they failed to understand that, to succeed, their customers must be helped to manage the change. It’s a people problem, not a technology problem.

Automation has seen a fraction of the adoption it could have because automation technology providers, even the largest ones, simply have no clue about the impact of their solutions on people and how people react to them. Moreover, these providers have no interest in learning about change management, or even partnering with firms that are experts at that.

Very often, technology providers are made up just of technologists moved by the desire to build cool tools for other technologists. And, as their careers progress, this worldview reaches the Product Manager level, the GM level, the VP level, and sadly, the CEO level.

These technology providers, even the biggest ones, like to say that they listen to customers and want to solve business problems. In my experience, that is rarely the case and the needs of the customers come last, if mentioned at all.

In an industry like that, how many really care about the impact of AI on jobs or the change management necessary to facilitate the adoption of AI?

–

Let’s stay on this topic for the second thing I’d like to focus your attention on: Mustafa Suleyman, one of the DeepMind co-founders, comments on the impact of AI on jobs at the GIC’s Bridge Forum in San Francisco.

George Hammond, reporting for the Financial Times:

Unquestionably, many of the tasks in white-collar land will look very different in the next five to 10 years . . . there are going to be a serious number of losers [and they] will be very unhappy, very agitated

…

Suleyman said governments would need to think about how they support those whose jobs would be destroyed, with universal basic income as one potential solution: “That needs material compensation . . . This is a political and economic measure we have to start talking about in a serious way.”

One funny part of all of this comes from the almost infinite number of “The AI Guy” on social media that used to idolize world-class AI experts like Mustafa Suleyman and Geoffrey Hinton. Those people, now, selectively refuse to pay attention to their former idols because they fear that mom might take the new toy away.

Another funny part of all of this comes from another camp, the camp of the pundits. Those that are experts in automation, job employment, finance, AI, bank runs, quantum computing, cryptography, how to make a pizza, washing really stubborn stains, history of technology, etc.

The attitude of some of these people, which I call “P&P” (Pedantic & Patronizing™), on the impact of AI on jobs has been: “You are talking nonsense. It’s just mathematics”. But now that expert mathematicians are expressing concerns about the topic, none of those pundits is amplifying the message.

It is entirely possible that all these experts are wrong about the topic and their anxiety is unjustified. We all sincerely hope it’s the case, I’m sure. But not even discussing the possibility that they are right, and not devising a plan for that scenario, seems short-sighted.

I am reading that very many people have yet to try GPT-4 or Midjourney. This might explain the lack of awareness and urgency to think about the future of work.

But many have tried these tools. Microsoft reports:

In just 90 days, our customers have engaged in over a half a billion chats, using chat features to get summarized answers to help them with everything from finding the best place to travel for someone with pollen allergies, to organizing the last 10 years of worldwide volcanic activity into a table. We have also seen people create over 200 million images with Bing Image Creator.

The ones that tried it might not have seen its full potential because they were not sure what to prompt or their prompt yielded results less than impressive. I’m thinking about how to fix that with Synthetic Work.

–

The third thing I want to bring to your attention this week is a provocative piece by Ted Chiang for the New Yorker titled Will A.I. Become the New McKinsey?

Chiang, who also wrote the (in)famous piece ChatGPT Is a Blurry JPEG of the Web, is an American science fiction writer. His work has won four Nebula awards, four Hugo awards, the John W. Campbell Award for Best New Writer, and six Locus awards.

In this new piece, he writes:

A former McKinsey employee has described the company as “capital’s willing executioners”: if you want something done but don’t want to get your hands dirty, McKinsey will do it for you. That escape from accountability is one of the most valuable services that management consultancies provide. Bosses have certain goals, but don’t want to be blamed for doing what’s necessary to achieve those goals; by hiring consultants, management can say that they were just following independent, expert advice. Even in its current rudimentary form, A.I. has become a way for a company to evade responsibility by saying that it’s just doing what “the algorithm” says, even though it was the company that commissioned the algorithm in the first place.

The question we should be asking is: as A.I. becomes more powerful and flexible, is there any way to keep it from being another version of McKinsey?

…

As it is currently deployed, A.I. often amounts to an effort to analyze a task that human beings perform and figure out a way to replace the human being. Coincidentally, this is exactly the type of problem that management wants solved. As a result, A.I. assists capital at the expense of labor. There isn’t really anything like a labor-consulting firm that furthers the interests of workers. Is it possible for A.I. to take on that role? Can A.I. do anything to assist workers instead of management? Some might say that it’s not the job of A.I. to oppose capitalism. That may be true, but it’s not the job of A.I. to strengthen capitalism, either. Yet that is what it currently does. If we cannot come up with ways for A.I. to reduce the concentration of wealth, then I’d say it’s hard to argue that A.I. is a neutral technology, let alone a beneficial one.

Many people think that A.I. will create more unemployment, and bring up universal basic income, or U.B.I., as a solution to that problem. In general, I like the idea of universal basic income; however, over time, I’ve become skeptical about the way that people who work in A.I. suggest U.B.I. as a response to A.I.-driven unemployment. It would be different if we already had universal basic income, but we don’t, so expressing support for it seems like a way for the people developing A.I. to pass the buck to the government. In effect, they are intensifying the problems that capitalism creates with the expectation that, when those problems become bad enough, the government will have no choice but to step in. As a strategy for making the world a better place, this seems dubious.

…

By building A.I. to do jobs previously performed by people, A.I. researchers are increasing the concentration of wealth to such extreme levels that the only way to avoid societal collapse is for the government to step in.

…

The fact that personal computers didn’t raise the median income is particularly relevant when thinking about the possible benefits of A.I. It’s often suggested that researchers should focus on ways that A.I. can increase individual workers’ productivity rather than replace them; this is referred to as the augmentation path, as opposed to the automation path. That’s a worthy goal, but, by itself, it won’t improve people’s economic fortunes. The productivity software that ran on personal computers was a perfect example of augmentation rather than automation: word-processing programs replaced typewriters rather than typists, and spreadsheet programs replaced paper spreadsheets rather than accountants. But the increased personal productivity brought about by the personal computer wasn’t matched by an increased standard of living. The only way that technology can boost the standard of living is if there are economic policies in place to distribute the benefits of technology appropriately.

I won’t quote any further. Even if you disagree with the position, the entire article is definitely worth reading.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

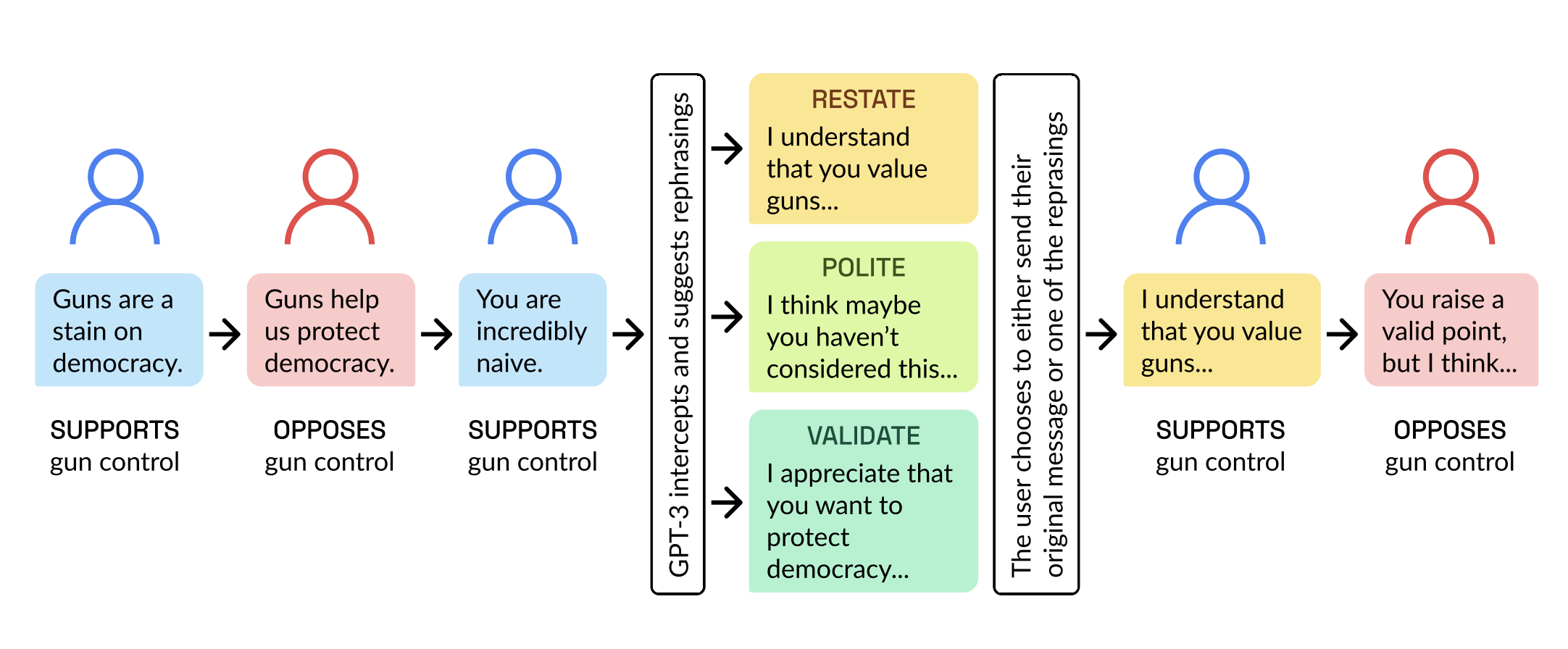

From the good news department, here’s an infographic that summarizes a very promising piece of research titled AI Chat Assistants can Improve Conversations about Divisive Topics.

No. Despite the image, I don’t want to talk about gun control at all.

Let’s start with the research. I’m going somewhere, I promise.

we employ a large language model to make real-time, evidence-based recommendations intended to improve participants’ perception of feeling understood in conversations. We find that these interventions improve the reported quality of the conversation, reduce political divisiveness, and improve the tone, without systematically changing the content of the conversation or moving people’s policy attitudes. These findings have important implications for future research on social media, political deliberation, and the growing community of scholars interested in the place of artificial intelligence within computational social science.

…In this research project, we specifically define “better quality” conversations as those in which people have an increased perception that they are better understood by the person with whom they are talking. Although all conversations across lines of difference do not reduce divisiveness, the feeling of being understood has been shown to generate a host of positive social outcomes. Importantly, research shows that the benefits of such conversations do not require persuasion or agreement between participants on the issues discussed, just a feeling that each person’s perspective was heard, understood, and respected. As such, our use of AI in this experiment does not seek to change participants’ minds; we suggest this as a model for how AI can be employed without pushing a particular political or social agenda. Our focus on feeling understood is also a response to some concerns about over-emphasizing increasing civility and specific forms of depolarization as normative goals. Research suggests a number of specific, actionable conversation techniques to effectively increase the perception of being understood, which are used worldwide

…

We developed an AI chat assistant to fill this need and act as a real-time moderator. The assistant makes repeated, tailored suggestions on how to rephrase specific texts in the course of a live, online conversation, without fundamentally affecting the content of the message. The suggestions are based on three specific conversation-improving techniques from the literatures mentioned earlier: restatement, simply repeating back a person’s main point to demonstrate understanding; validation, positively affirming the statement made by the other person without requiring explicit statements of agreement (e.g. “I can see you care a lot about this issue”); and politeness, modifying the statement to use more civil or less argumentative language.

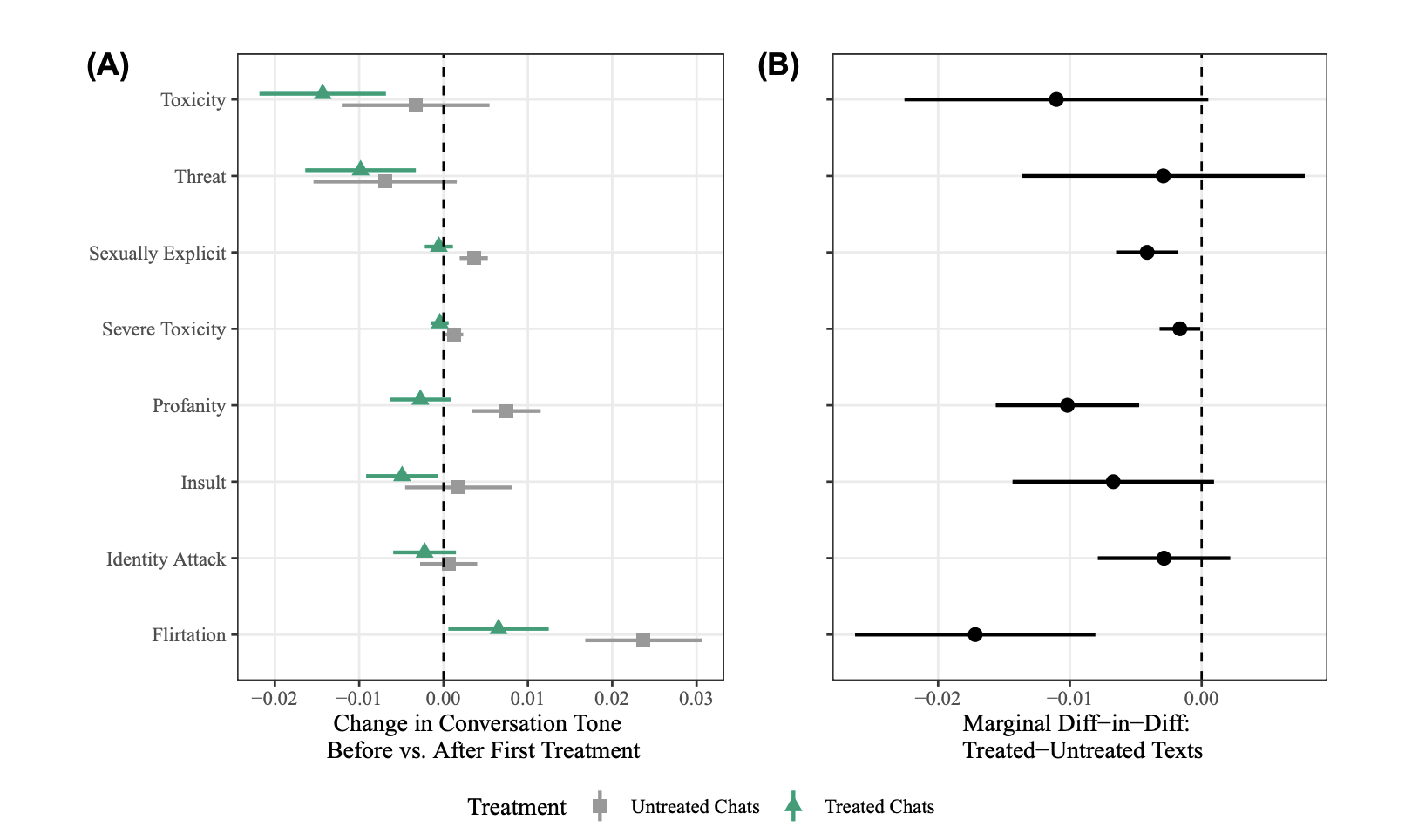

As you can see in the following chart, the AI filtering (technically called “treatment”) produces a positive effect on the quality of the conversation and reduces various negative traits.

This is all great, but we are not here to save the world. Especially when the world doesn’t want to be saved. We are here to talk about the impact of AI on how we work.

So why is this research so noteworthy? Because this approach could be applied to communication between departments within an organization, increasing cooperation and boosting productivity.

If you ever worked in a large enterprise organization you know that many of them are organized by business units and many of these BUs are in charge of one or more products within the company product portfolio.

While many top executives live in a fantasy world where these BUs harmoniously cooperate to show the synergy between their product and a business value that is greater than the sum of its parts, the reality is that these BUs fight each other every day, all day long, on a myriad of issues:

- Airtime in corporate marketing brand campaigns

- Attention of the sales force and opportunities for enablement

- Allocation of headcounts and budget

- Chances to pursue an acquisition

- Priority in product roadmap development and integrations

- and way too many other things that are way too boring to list.

The tension between these BUs escalates quickly and lasts for years. Especially if two BUs have to work together on an integration and one of the two underdelivers, damaging both.

In other words, BUs vertically in charge of products hate each other. Collaboration between them is like walking on quicksand and the effort to get people to work together is equal to political lobbying.

The one that loses the most is the customer as nothing gets done. Just like in politics. Same same.

Now.

Imagine an AI operating in a way similar to the research we’ve just seen, placed as a central hub of email exchanges between corporate business units.

Emails that come and go between them pass through the AI hub that rephrases them to increase the empathy level and the chance to be understood by the counterpart.

That is not very difficult to implement and while it might be unsettling that your email is being rewritten without your approval, we are going in that direction anyway. In this case, at least it’s for a good cause.
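To give a sense of how little plumbing such a hub needs, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Email` type, the `route` function, and the prompt wording are hypothetical, and `placeholder_rewriter` is a crude stand-in for a call to a language model, not the assistant built by the researchers.

```python
from dataclasses import dataclass, replace
from typing import Callable


# Hypothetical names throughout: nothing here comes from the research paper
# or from any real email product.
@dataclass(frozen=True)
class Email:
    sender_bu: str      # business unit that wrote the email
    recipient_bu: str   # business unit that will read it
    body: str


# A rewriter takes the original text and returns the rephrased text.
Rewriter = Callable[[str], str]


def build_prompt(text: str) -> str:
    """Prompt a language model could receive (the wording is an assumption)."""
    return (
        "Rephrase the message below in more polite, less argumentative "
        "language, so that the recipient feels understood. "
        "Do not change what the message says.\n\n"
        f"Message: {text}"
    )


def placeholder_rewriter(text: str) -> str:
    """Stand-in for a real model call: it only softens a few blunt phrases."""
    softer = {
        "You failed to": "It looks like we did not manage to",
        "This is unacceptable": "This is a real concern for us",
    }
    for blunt, polite in softer.items():
        text = text.replace(blunt, polite)
    return text


def route(email: Email, rewrite: Rewriter = placeholder_rewriter) -> Email:
    """Rewrite emails that cross BU boundaries; leave internal ones untouched."""
    if email.sender_bu == email.recipient_bu:
        return email
    return replace(email, body=rewrite(email.body))


original = Email("Storage", "Networking", "You failed to ship the integration.")
print(route(original).body)
```

In a real deployment, `route` would be handed a rewriter that sends `build_prompt(text)` to a language model and returns its answer. The placeholder exists only so the sketch runs on its own.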

CEOs who don’t live in a fantasy land where their VPs and GMs harmoniously cooperate to maximize shareholder value should seriously consider some experiments in this area.

For everybody else, if you don’t behave, it will be done to you in a more forceful way in an imaginary near-term future:

This is NOT the new @Dyson Zone. No. This is the new Advanced Negativity Filter™ (ANF). It works in this way:

The voices of the people that speak TO YOU go through an AI like GPT-4 which rephrases every sentence to remove any negativity and improve your chance to see the other… pic.twitter.com/JyIYKwCCkl

— Alessandro Perilli ✍️ Synthetic Work 🇺🇦 (@giano) May 8, 2023

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Keep in mind the following story when you read the How Do You Feel section below.

Stephen Marche, a novelist and essayist who has written for Wired, The New Yorker, The Atlantic, and The New York Times about artificial intelligence since 2017, has used ChatGPT to generate a book titled Death of an Author.

Tom Comitta reviews it for Wired:

Death of an Author, by Stephen Marche, is the best example yet of the great writing that can be done with an LLM like ChatGPT. Not only is it an exciting read, it’s clearly the product of an astute author and a machine with the equivalent of a million PhDs in genre fiction. ChatGPT read basically the entire internet and all of literature, finding billions of parameters that go into “good” writing.

…

Death of an Author doesn’t try to hide many of the quirks that come from collaborating with ChatGPT, which favors Thomas Pynchon-esque monikers, like a literary agent named Beverly Bookman, and fake book titles, such as God, Inc. and Tropic of Tundra.

…

An LLM cannot write a cohesive, novel-length narrative from start to finish. At present, ChatGPT can produce roughly 600 words at a time, so in order to complete a novel, a human has to feed it prompts and then collage its outputs into a complete story. One prompt might be something like “Describe the death of an author in the style of CBC news.” The next might be “Write Augustus’ response to this death.” The computer can’t keep track of the minutiae of plot and character, leaving holes in the process.

All of this, as the loyal readers of Synthetic Work know very well, is already a limitation of the past.

GPT-4 has an 8K token context window (think about it as the short-term memory of the AI) and it will soon have a 32K token context window. Which means, as we explained in multiple issues of this newsletter, no issue whatsoever in maintaining narrative and character consistency across a medium-length novel.

Let’s continue:

Despite the use of so many different programs and styles, this text has Stephen Marche’s signature all over it. Marche even said as much to The New York Times: “I am the creator of this work, 100 percent.” What’s striking, though, is what he says next: “But on the other hand, I didn’t create the words.”

…

As someone who has just finished writing two novels that incorporate LLM language, I agree with Marche that only a good writer will make anything worthwhile with these programs. Because of this, it seems important to acknowledge the human hand in every aspect of the writing process. Even the choice to include a particular LLM output over another is a human decision, not unlike the selective reframing employed by artists like Marcel Duchamp and Andy Warhol.

Notice how people systematically think about AI in terms of what it’s capable of today, not realising the quantum leap that it has already made in just one year, and the fact that the next generation is already being developed.

We dedicated a whole Splendid Edition of Synthetic Work to the adoption of AI by the publishing industry: Issue #5 – The Perpetual Garbage Generator.

A world where, say, 50% of the text is AI-generated might become the new normal. If so, there might be consequences:

Let's stretch our imagination, and play some devil's advocate, shall we?

One year from now, or five, most people will write things assisted by AI: emails to prospects, clients, and colleagues; business and marketing plans; slide decks and conference speeches, songs and poems.…

— Alessandro Perilli ✍️ Synthetic Work 🇺🇦 (@giano) May 9, 2023

While we wait to see what happens, you might be interested in reading an excerpt of Death of an Author.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

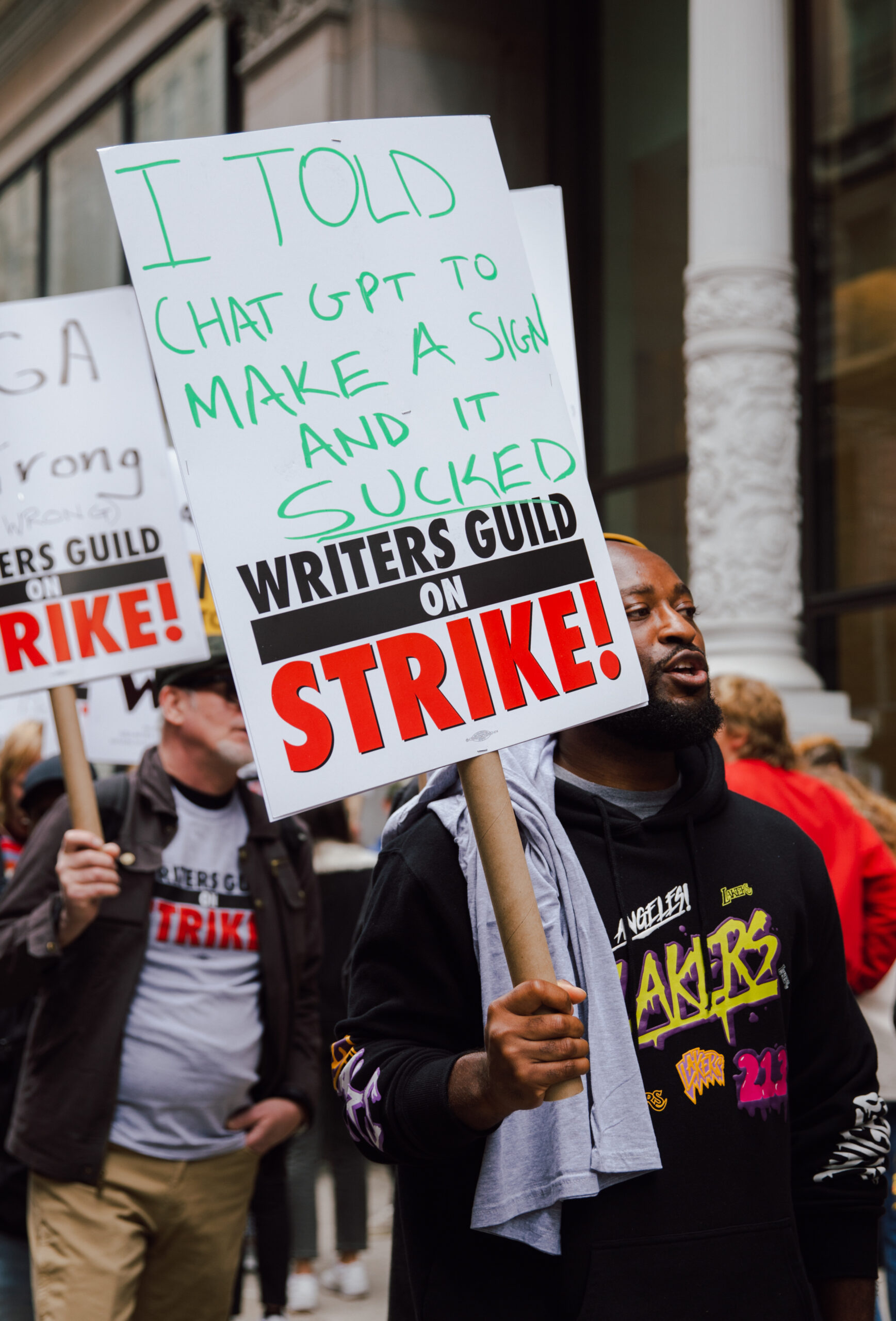

Many people don’t feel their job is threatened by AI yet, but a growing category of workers definitely does: professional writers.

Lois Beckett, reporting for The Guardian:

Outside Amazon Studios in Los Angeles, the striking writers of Hollywood had a promise for studio executives: “AI will replace you before it replaces us.”

…

The 11,500 members of the Writers Guild of America went on strike on Tuesday, sending hundreds in Los Angeles and New York to picket lines outside of major studios such as Amazon, Netflix, Paramount and Warner Brothers, instead of their writers’ rooms. As they negotiate a new contract with major studios, writers are arguing that the rise of the online streaming era has left them behind, leading to worse pay and less stable work, and demanding new rules for how studios use artificial intelligence in film and television writing.

…

For Pendleton-Thompson, a Star Trek writer who spent years working as a Hollywood assistant before joining the Writers Guild in 2021, the strike means financial uncertainty, and negotiations over dream film projects suddenly in limbo. But she believes the fight is worth it.

She is especially concerned about studios using AI for stories about people of color and people with disabilities: “We’re going to get the stories of people who have been disempowered told through the voice of the algorithm rather than people who have experienced it.

“I think it’s beginning of a bigger conversation about how AI is going to be used to continue to funnel money straight to the top, rather than distributing it to the hard-working people who built this industry,” she added.

I love Star Trek (but only Star Trek The Next Generation). So, I feel for this writer.

In response to this strike, the BBC runs an “experiment.”

Chelsea Bailey, reporting for the BBC:

The WGA likened the use of AI in screenwriting to plagiarism and said it was fighting to regulate the technology because it “undermines writers’ working standards including compensation.”

Among the first casualties of the strike is late-night TV, which relies on a bevy of writers to quickly turn the day’s events into comedy gold. So far, Jimmy Kimmel Live!, The Late Show with Stephen Colbert, and The Tonight Show with Jimmy Fallon have all announced a hiatus as writers take to the picket line.

So we put ChatGPT to the test to see if it could match the brilliance – and comedic minds – of America’s favourite late-night hosts and the teams of writers behind them. Within seconds, the bot had drafted a series of jokes. But were they funny?

The article goes on with a series of jokes, supposedly in the style of Stephen Colbert, generated by ChatGPT.

But:

- The BBC didn’t use GPT-4, which is significantly more capable than ChatGPT.

- They are not disclosing the prompt they used, so it’s hard to say if they are following the techniques we list in the How to Prompt section of Synthetic Work or not.

- Here the problem is not what the AI can do today. It’s what it will be able to do 3 years from now. I’m pretty sure that the BBC is perfectly capable of understanding that.

With these three things in mind, the conclusion of the article:

Rumours of Hollywood’s demise at the hands of artificial intelligence appear, at least for the moment, a little exaggerated.

Uhm, sure. I don’t know about you, but this seemed more like a veiled threat than a reassuring failure.

Reddit user u/cocktail_peanut has been playing with the text generation AI model called LLaMA and the text-to-image AI model Stable Diffusion, forcing them to cooperate thanks to some clever automation.

The result is an autonomous fashion blogger that never stops pretending to be somebody you want to follow.

You can see more on this website: https://balenciaga.dal.computer

Clearly, this is just a proof of concept: the images are not exceptional and you can read the prompt being used to generate them. But it’s trivial to make it look realistic.

The very same approach can be used to generate synthetic experts or influencers about any topic you desire. Fashion blogging is visually impactful, but it could be ant blogging if your heart is in it.

In fact, I think ant blogging would be a blockbuster.
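For the curious, the general shape of such a pipeline can be sketched in a few lines of Python. This is not u/cocktail_peanut’s code, which I haven’t seen: it is a hypothetical illustration in which the two models are passed in as plain functions, so no real LLaMA or Stable Diffusion API is assumed, and the stub models at the bottom exist only to make the sketch runnable.

```python
from dataclasses import dataclass
from typing import Callable, Iterator

# Hypothetical sketch: the two model calls are injected as plain functions.
TextModel = Callable[[str], str]     # instruction in, generated text out
ImageModel = Callable[[str], bytes]  # image prompt in, image bytes out


@dataclass
class Post:
    caption: str
    image_prompt: str
    image: bytes


def generate_posts(topic: str, text_model: TextModel,
                   image_model: ImageModel, count: int) -> Iterator[Post]:
    """Yield `count` posts: the text model writes, the image model illustrates."""
    for n in range(1, count + 1):
        caption = text_model(f"Write post #{n} for a blog about {topic}.")
        # The text model also writes the prompt that drives the image model.
        image_prompt = text_model(f"Describe a photo to go with: {caption}")
        yield Post(caption, image_prompt, image_model(image_prompt))


# Stub models so the sketch runs without any AI installed.
def fake_text_model(instruction: str) -> str:
    return f"[text for: {instruction}]"


def fake_image_model(prompt: str) -> bytes:
    return prompt.encode("utf-8")


posts = list(generate_posts("ants", fake_text_model, fake_image_model, count=2))
for post in posts:
    print(post.caption)
```

Swap the stubs for real model calls, raise `count`, and put the loop on a schedule: that is all it takes for the blogger to never stop posting.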

Many, many people have already started doing this without revealing it. The human-made digital trash that pollutes your social media feed is being progressively replaced with AI-made digital trash by people that you thought were your friends.

Only some have ethical qualms and eventually reveal that they are using AI. Like Boris Eldagsen, who first won the Sony World Photography Awards (in the Creative category) with the picture below and then admitted that he actually used generative AI.

Et tu, Brute?

Yep. Big time.

In the Travel & Tourism industry, MakeMyTrip is rolling out an AI voice assistant to help customers book flights and hotels.

Saritha Rai, reporting for Bloomberg:

MakeMyTrip Ltd. will use Microsoft Corp.’s Azure Open AI technology for the chat, initially available in English and Hindi, the Indian company said in a statement Monday. The voice assistant will appear on the company’s app and the front page of its travel site and is designed to help users book flights and holidays.

The company, which plans to add further Indian languages to the service, showcased an AI voice trial chat in the Bhojpuri language native to parts of northern India. The AI agent offered airline information, flight dates, timings and pricing. After choosing a trip, users received a QR code to help them complete a payment.

…

The services use a combination of GPT-3.5 and GPT-4 systems developed by Microsoft-backed OpenAI, MakeMyTrip said…