- It’s time for a long exploration of an extreme scenario where AI impacts all white-collar jobs at once. Sigh.

- Ada Palmer, a professor of history at the University of Chicago, is positive about the impact of AI on jobs but advocates for strong government policies.

- David Autor, a professor at MIT, widely regarded as one of the greatest labor economists in the world, is equally positive about the future of work in an AI world.

- The latest survey conducted by the Pew Research Center suggests that a lot of people are talking about something they haven’t even tried: ChatGPT.

- The National Eating Disorders Association (NEDA) fires its call center employees to replace them with AI, then shuts the AI down.

- Customer service workers start to feel that employers have not been entirely upfront with them about the ways they are incorporating generative AI.

P.s.: This week’s Splendid Edition of Synthetic Work is titled I’d like to buy 4K AI experts, thank you.

It covers what Wells Fargo, Carvana, Deutsche Bank, Amazon, and JP Morgan Chase are using AI for.

It also teaches you how to use GPT-4 to properly document a corporate procedure as mortally boring as opening a bank account. Goosebumps, I know.

It’s time to go deeper into something that nobody likes to discuss. I long postponed weighing in on the following topic, as I hoped that the news we discuss every week here on Synthetic Work would be enough to show a trend.

However, the more I read the news on social media and mainstream media, the more I’m convinced that there’s little understanding of what’s at stake today because of artificial intelligence.

So let’s talk about it. I’ll regret it, I know.

Techno-optimists promise you a future where the impact of AI on our jobs will be offset by an abundance of new jobs. They cannot quantify how many new jobs we’ll have thanks to AI, or even describe them, but they are certain we’ll have those jobs.

And, for sure, we’ll have some new jobs. In these first three months of Synthetic Work, we’ve seen a few companies starting to hire for a position that didn’t exist six months ago: the prompt engineer.

Of course, that’s not enough to justify the techno-optimism, and the avalanche of data points we have documented with Synthetic Work so far suggests anything but techno-optimism.

We may have just entered a golden age for humanity thanks to AI. I hope so. We all hope so. But the people that believe in the advent of this golden age seem adamant about not discussing at least six troubling aspects.

One. Past performance is not indicative of future results.

If you get even remotely close to finance or trading, it’s one of the first things that you are told. And yet, we keep forgetting about it.

Here’s an example, kindly put together by the data visualization wizards at Visual Capitalist:

Just because the roll-out of new technologies created more jobs in the past, it doesn’t mean that the same will be true for every technology.

It is possible, but not certain. And AI is a very different technology compared to everything else humans have invented so far (see the next point).

As Seth Godin reminds us, sometimes we call this behavior survivorship bias: when assessing the risk of something, people tend to focus exclusively on the winners, ignoring the losers.

Two. The magnitude of the automation introduced by generative AI is unprecedented.

The analogy that most techno-optimists like to draw is between the automation enabled by modern AI (and here we all really mean generative AI) and the automation introduced by other new technologies in the past.

But generative artificial intelligence is not like other technologies. The automation it introduces is in a very different place.

Automation is a fundamentally stupid technology that simply speeds up a process already in place: acquire an artifact as input, manipulate it, and produce an output.

The artifact can be a raw material extracted from the ground in a mine or a collection of numbers related to the performance of a company.

Automation makes the execution of the steps that are part of the process faster and more efficient.

Human labor is core to the sourcing of the artifacts that automation needs as input, and core to the interpretation of the output of the automated process.

Generative artificial intelligence does more than just automate a process. It automates the sourcing of the artifacts and the interpretation of the output of the automated process.

Let’s use a search on Google as a (terrible) example:

- You ask your question and receive back hundreds of thousands of results. The web pages that make up the results of your search are the artifacts, that is, the input of your process.

- You pick the top three results and read them. After reading these three results, you combine parts across them to come to something that answers your original question in the best possible way. That is your manipulation of the input, the core of your process.

- The mashup of the top three results is the output of your process.

Automation can open the browser on your behalf, pick the top three results on your behalf, and combine them in a single page avoiding repetitions on your behalf. So you save a ton of time to get to the output you want. But you still have to manually interpret the output of this automated process.

So there’s human labor in sourcing the artifacts, human labor in writing the automation workflow, and human labor in interpreting the output of the process.

Generative AI systems like GPT-4 are not like that. Over-oversimplifying:

- They don’t have to source the artifacts that will be used as input. They make up the artifacts that are necessary to answer your question. GPT-4 can generate web pages about the topic you are searching for instantaneously, without the need for any human to create those web pages in the first place. There’s no human labor involved in the sourcing of the input.

- Then, they look at the content of all these web pages they can generate, not just the top three like you did when you used Google Search. Then, they pick what is the most likely answer you would receive if you had asked your question to a real person. Just like a kid learns what is the most appropriate answer for a certain question by looking at how the parents answer that question in different contexts.

This is the manipulation part of our process and it’s fully automated, too.

- What you end up with is not just the output of the automated process, but an interpreted output. The interpretation has been done for you, in a fully automated way, without the need for any human labor.

As you can see, human labor is almost completely eliminated from the process, reduced to you asking what you want and getting it.

And this is true not just with text generation, but also with image generation. If you ever used Midjourney, you know the astonishing images you can produce by writing a single, short sentence. And very soon, you’ll be able to do the same with videos, and then with music.

Even if generative AI systems remain imperfect for some years, and their imperfections force people to repeat the initial request multiple times and tweak the answer they get, the parts of the process where people still have a role to play are fewer.

So, when someone compares the introduction of the electronic spreadsheet, and its impact on bookkeepers, with the introduction of generative AI, and its impact on…every white-collar job (see the next point), they are ignoring the fact that AI drastically reduces the effort of a human operator (just like Excel) but it also drastically reduces the need for human operators.

To use yet another analogy, it’s the difference between giving a person a second pair of arms (thanks to automation) and cloning that person entirely (thanks to generative AI).

Three. Generative AI is impacting all white-collar jobs at the same time.

Most human inventions of the past have displaced jobs for specific categories of workers or in specific industries. The introduction of the electronic spreadsheet, as we said, has impacted bookkeepers. We saw an enlightening chart about this in Issue #6 – Putting Lipstick on a Pig (at a Wedding).

However, these inventions have never threatened white-collar employment across all industries at the same time. So, let’s explore a scenario where this happens.

Let’s say that you lose your job because of AI. You are a customer service representative in Rome, a creative writer in LA, or a digital artist in Beijing. All stories that we have read about in the past issues of Synthetic Work.

What do you do?

First, you try to find another company that might offer you the same job. But eventually, as companies crumble under competitive pressure, it gets harder and harder to find an employer that is not forced to replace people with AI to keep production costs down and win customers.

You take this as a sign that it’s time for a radical change. Maybe you change industry completely. Maybe you try to pursue the dream job that you actually always wanted to do. We saw so many people doing that during COVID and we are seeing many more now, as companies are laying off employees en masse due to the difficult economic environment.

But at this point you discover something unexpected: AI is taking jobs in many other industries, not just yours. It’s suddenly harder to radically change careers because most careers are impacted by AI, all at the same time.

What do you do, then?

You decide to work for yourself. Becoming a creator. You start a YouTube channel, a podcast, a newsletter… You tell yourself: “People will forever want to connect. It’s in our genes. I’ll always find an audience.”

But while you were busy doing your old job before being fired, creators all around the world found the time to learn and master an increasingly powerful AI, and now they can generate 10x more YouTube episodes than you, and write 10x more issues of their newsletter than you, in the same amount of time.

In fact, some of them have completely digitized their persona and automated the creation process. They leverage their synthetic voices and video presence to generate realistic performances that can be created at almost zero cost and at the speed of light.

You can’t compete with that.

Even if you have something exceptionally valuable to say, the noise generated by these creators on AI steroids drowns out your voice to the point of insignificance.

You did not spend your personal time to upskill yourself and learn about these new AI technologies, thinking that your employer would take care of it, and instead, your employer has fired you at a moment when all jobs are concurrently impacted by AI, in every industry.

Fine. No problem. You are resourceful.

You give up on the idea of working as an information worker and, instead, decide to focus on more manual activities, which are significantly less impacted by generative AI.

The problem is that you are not the only one with these problems. All of a sudden, millions are in exactly the same situation. They are all trying to find a new, more manual job. So manual labor becomes a commodity, paid at a much cheaper price than it already is today.

So the question becomes: how much more do you need to work in this new life that you carved for yourself to sustain the same lifestyle you had as an information worker?

Four. Job creation requires time, and those who get the new jobs are rarely the ones who lost the old ones.

Perhaps, the scenario I described in the previous point will never happen because generative AI will enable an abundance of new jobs. But if all job categories across all industries are impacted at the same time, can we really create enough new jobs quickly enough to avoid mass unemployment?

And even if so, without extensive upskilling programs supported by the governments of the world, can the displaced people really benefit from these new jobs?

In other words: if the adoption of generative AI leads to 10, 50, 100 million jobs lost in a very short timeframe, can we act quickly enough to avoid 10, 50, 100 million homeless people?

Perhaps, the Israeli historian and intellectual Yuval Noah Harari hinted at this when, just a few weeks ago, he said:

Another danger is that a lot of people might find themselves completely out of a job, not just temporarily, but lacking the basic skills for the future job market. We might reach a point where the economic system sees millions of people as completely useless. This has terrible psychological and political ramifications.

You can read more about his interview in Issue #11 – The Nutella Threshold.

Five. It might get better before it gets worse.

You are certainly familiar with the reverse expression. Indulge my version just this one time.

If you read the Splendid Edition of Synthetic Work, you know that systems like GPT-4 can help you achieve incredible things.

These systems are gaining new capabilities every month, and each and every one of these capabilities is phenomenal in itself. Once OpenAI combines all of them together, the result might be much more powerful than the sum of its parts.

In fact, you should really stop wondering about what will happen with GPT-5 or 6. What’s available today with GPT-4 is already astonishingly powerful, and what OpenAI can do with it is far beyond the imagination of most people.

We have yet to see:

- GPT-4 with a bigger short-term memory (what’s called context window), able to manipulate long documents like PDFs with many pages, SEC filings, multiple short documents that could be correlated together, etc. It sounds like GPT-4 might gain a 1M tokens context window before the end of this year. Imagine what could be possible with such a large short-term memory.

- GPT-4 with the capability to ingest and “see” images. This won’t be useful just for analyzing pretty pictures and modifying them as you please (like the inpainting or the ControlNet acrobatics that you do with Stable Diffusion, but frictionless). For example: for years, I wanted to adapt the idea of computer vision to work on a desktop OS environment, to seamlessly understand running GUI and CLI apps and act on them (e.g., manual workflow recognition and conversion into an automated workflow). If GPT-4 can “see” images, perhaps this will be possible, and we might gain the capability to analyze and act on ANY app without the need for that app to integrate/adapt. Imagine the incredible opportunity to automate the legacy apps that power most of the business world without having to wait for them to release and update APIs, become API first, etc. (Short of this, we’d still gain the capability to control those desktop apps with voice.)

- GPT-4 with all its new capabilities (browsing, code interpreter, plugins, image understanding, etc.) available in the same session. Today, you use only one of them at a time, and most are in alpha/beta. Like a kid that is learning how to master one ability at a time. Then, one day, he/she starts to use all those abilities together and you are blown away by how much that individual can accomplish.

- GPT-4 with serious plug-ins. For the most part, except for a handful of valuable plug-ins, what we are seeing today is a series of prototypes that are more about promoting the brand of the plug-in developer than providing real value to customers. This will change as top data providers will start offering plug-ins to access their data sources (probably for a fee). Imagine Wharton or McKinsey offering a plug-in that allows GPT-4 users to query (or even just take into account) the data they have about business management best practices.

- GPT-4 with Dall-E 3 integration.

You have seen what Midjourney has been capable of accomplishing in just 1 year of development. And they are a team of…11?…people with infinitely less capital and leverage than OpenAI. In the meantime, groundbreaking research comes out every other day about new things that can be done with image generation. I’d be amazed if GPT-4 wasn’t already designed to become the main interface for the next version of Dall-E.

- GPT-4 with voice. You know that OpenAI has already published one of the two components that are necessary for a voice interface: speech-to-text. It’s Whisper, and it’s already the state of the art in STT. The other component is text-to-speech. It happens that the creator of one of the best TTS engines out there (Tortoise TTS) has stopped working on his project to take a job at OpenAI in 2022. And then you have Andrej who returns to OpenAI and writes in his bio that he’s “Building a kind of JARVIS”. You have to ask yourself what will be the emotional reaction of people when an AI that already feels human in its replies starts to sound human. The impact of a GPT-4 that can talk is underrated because Siri, Alexa, and Google have failed to impress for years. But our reaction to an AI that talks will be very different when the replies we get are as good as the ones that GPT-4 generates.

This impact is not just emotional. Imagine the opportunity for people with disabilities to suddenly be able to count on GPT-4, which is able to understand them infinitely better than any vocal interface humans have ever built.

I could continue, but you get the point: you don’t need GPT-5 to get a revolutionary system. It’s already right in front of you. It’s just disassembled.

And while it’s disassembled, the core AI system and the new skills we are being introduced to, month after month, are having a very positive impact on our productivity. For the most part, they are not threatening our jobs. They are empowering us to do much more in much less time.

However, at some point, all these skills will come together, the revolutionary system will be fully assembled, and it won’t look like it looks today.

So the question becomes: is there a threshold that AI can pass, in terms of capabilities, after which it stops being a productivity boost and becomes a job displacer?

In other words: are we running at full speed, lifted by AI, towards an employment cliff that we won’t be able to see until it’s too late?

Six. AI-driven automation doesn’t imply a better quality of life.

Let’s say that techno-optimists are right and there will not be a cliff like the one I described in the previous point. Let’s say that we’ll have an abundance of new jobs thanks to AI, and that we’ll be able to create them fast enough to avoid mass unemployment. A huge portion of today’s labor will be automated.

In the last few decades, we’ve seen automation helping people do more in less time across a wide range of jobs and industries. Perhaps, including yours.

Did we see people earning more, or working less for the same pay?

Or did we see people increasingly doing more work for the same pay or even less pay, or getting two jobs?

Who benefits from automation? The workers or the employers?

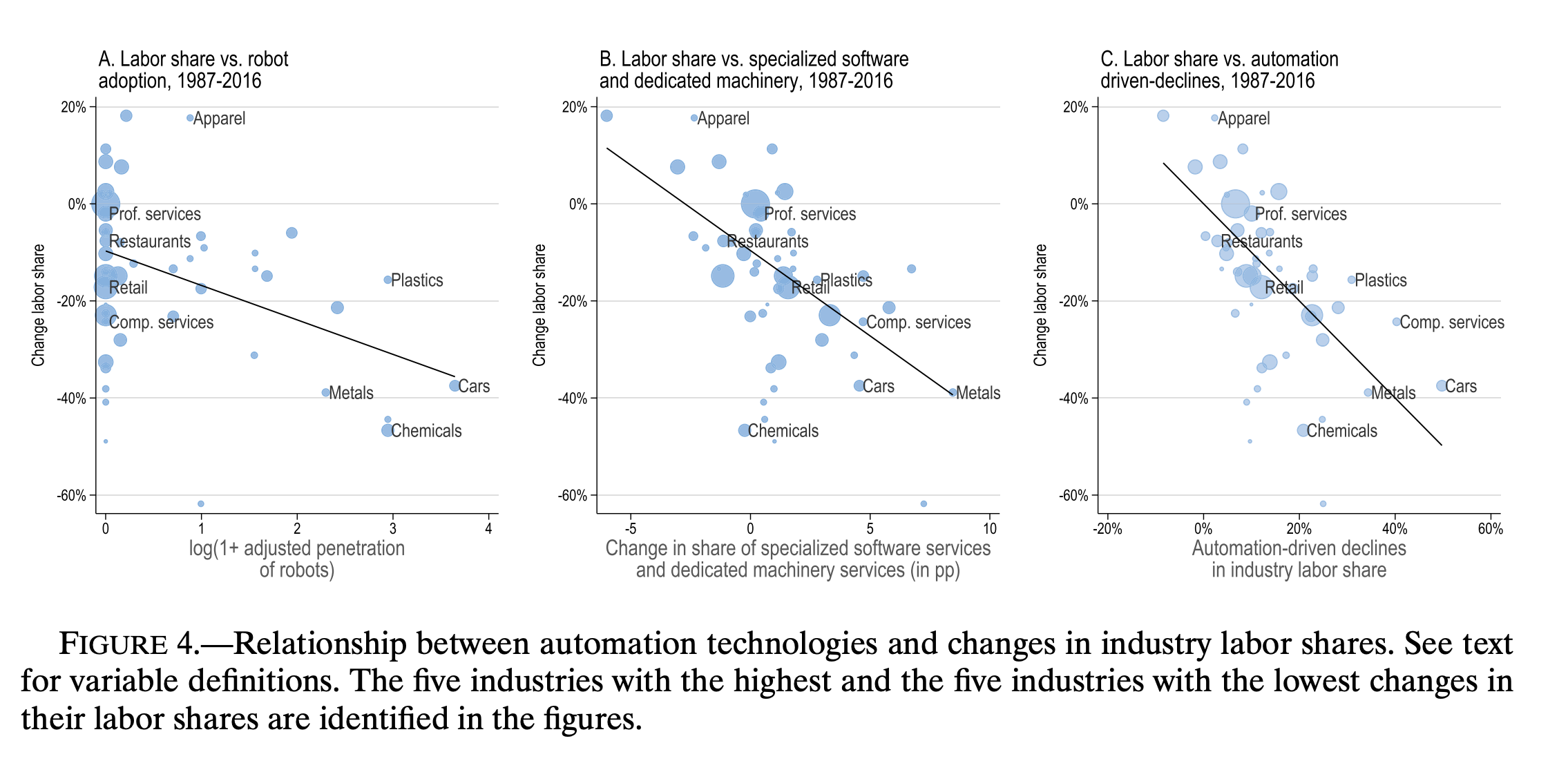

In October 2022, the Econometric Society published a research paper titled Tasks, Automation, and the Rise in U.S. Wage Inequality.

The authors write:

This paper proposes a new approach for thinking about wage inequality. In our theory, shifts against less skilled workers result from technologies that automate and thus displace workers from tasks they used to perform. Our main contribution is to develop a general version of this theory and show how it can be applied to quantify the effects of automation on wages and inequality. Based on this approach, we document that between 50% and 70% of the overall changes in the U.S. wage structure over the last four decades are driven by automation.

We’ll do a deeper analysis of this paper in the A Chart to Look Smart section of Synthetic Work, in a future issue. But for now, it’s worth asking the questions.

I hope that these six points will give you an alternative perspective to reframe the techno-optimism evangelized by people living in Silicon Valley and tech enthusiasts all around the world.

I don’t know if any of these truly gloomy (and oversimplified) scenarios will happen. But I feel much more comfortable discussing them, and playing the devil’s advocate, than ignoring them.

And you should think about them, too, as the exercise helps you to push your government representatives to start thinking about them, too.

Our plan to react to this outcome can’t just be “Let’s wait and see what happens” or “Let’s hope that these AI researchers invent the so-called Artificial General Intelligence (AGI) so that this amazing being will figure out how to solve hunger, illnesses, and unemployment”.

Government policies and social welfare mechanisms like Universal Basic Income (UBI) must be designed and evaluated so that, if this scenario happens, they protect all of us and our families.

And for us, it seems to me that the only sane approach is to observe carefully, take advantage of what AI has to offer us today, and stay cautious about tomorrow.

The only problem is that being sane doesn’t make you rich. Risk-taking does. That’s what’s propelling much of the AI race we see today.

This is the trade-off we are facing today when we talk about AI.

With this framing in mind, let’s see what happened this week.

Alessandro

P.s.: as you just noticed, Synthetic Work is now delivered on Sundays. If you prefer to go back to Fridays, let me know.

To counterbalance the armageddon intro of this issue, let’s take a look at two (mostly) positive perspectives that I read this week.

Let’s start with a very positive essay titled We are an information revolution species by Ada Palmer, a professor of history at the University of Chicago.

She writes:

College graduation used to involve passing a test on writing scripts with ink and quill. Students and teachers saved innumerable hours with the transition from quill to pencil, to typewriter, to digital. Now that students submit their essays to me through the Wi-Fi aether, they have time to write more, learn more, do more. AI will soon make easier, our current methods of creating and evaluating college essays obsolete. Mourning the changes, as scribes mourned the obsolescence of quill and ink, too easily distracts us from imagining the great new things that will be made with better tools. People today still learn calligraphy, still make a living at it, but we use it when we truly want to make that kind of art—we use word processors when making other things. That doesn’t mean we create less art overall, it means we create more.

AI is bringing the democratization of the power to make the modern media we constantly consume. Soon, creating a professional quality videogame or full-length movie may be no harder than writing a college essay. My history students often say they want to write a screenplay, or describe a videogame they want to make about the period, but that isn’t what they really want. They want to make the game, to make the film—to see it, not describe it. Now it won’t be long until they can. In a Shakespeare class five years from now, instead of a final essay, students could direct three versions of a scene, having AI produce full-length video with variations in the set, costumes, and direction. If tasks that once were hard are now easy, that frees us to take on harder ones.

…

Soon, every time we have an idea for an app, we will be able to create it, not to sell and make a living, but to use ourselves, just as we want it, easily, as we print out our photographs to decorate our walls and make us smile.

Doesn’t that threaten the livelihoods of app-makers?

It does.

The livelihoods of the scribe, the quill-maker, the typist, and the professional photographer were all threatened by the information revolutions. They needed safety nets, retraining, transformation of their employments into new ones. But we know about such dangers now, and that we can prepare for them. Information revolutions need thoughtful transitions. When the digital spreadsheet arrived, which could do in seconds what took accountants weeks, so accountants transitioned to using them, and doing more. If an AI can pass the written Bar Exam, that puts Bar Exam level information in everyone’s hands, while lawyers of the next generation will train using VR trials, and learn to compile documents at speed with AI help, skills which will mean a poor person can get the documents they need with one hour of costly lawyer time rather than ten. That is democratizing information.

…

The dangers of ChatGPT and its successors do not lie in the technologies themselves. They lie in the fact that we must now make choices, good or bad, about how we help those navigate the rollout effects. We fear the artist and writer will starve, but the artist and the writer are already starving because our copyright system is broken, serving mainly monopolies. We fear the actor’s image will be harvested unpaid by algorithms to produce those middle-schoolers’ superhero tales, but practically all actors are already struggling, working second jobs, without a means to make a living as their words and images ripple through the digital world. Most of the profits from hit songs go to the stockholder, not the musician. That—not AI—is the threat to human flourishing. If we pour a precious new elixir into a leaky cup and it leaks, we need to fix the cup, not fear the elixir.

…

In 2000, Time Magazine declared Gutenberg their Man of the Millennium, but many moments in the print revolution he unleashed were terrifying as well as good, just like this one. This revolution will be faster, but we have something the Gutenberg generations lacked: we understand social safety nets. We know we need them, how to make them. We have centuries of examples of how to handle information revolutions well or badly. We know the cup is already leaking, the actor and the artist already struggling as the megacorp grows rich. Policy is everything. We know we can do this well or badly. The only sure road to real life dystopia is if we convince ourselves dystopia is unavoidable, and fail to try for something better.

That’s a wonderful essay, worth reading in full. And I’m glad Professor Palmer and I agree on the need for social welfare.

BUT… 🙂

If I had to apply the same logic that techno-optimists apply when they imagine a future abundance of jobs thanks to AI, and forecast our future results from our past performance on social welfare, I’d say that we are not going to do a good job at all with that leaky cup.

So which is it? We can’t selectively decide to lean on the past only when it’s convenient to us.

—

The second thing to pay attention to this week is an exceptional interview with David Autor, a professor at MIT, widely regarded as one of the greatest labor economists in the world, for NPR Planet Money:

DAVID AUTOR: So prior to the Industrial Revolution, there was a lot of artisans, people who did all the steps in making a product – right? – so whether it’s a piece of clothing or, you know, building house or a tool. The era of mass production created an alternative way of making things. And it was basically breaking things down into a series of small steps that would be, you know, accomplished in sequence often by, you know, machines, managers and pretty low-skill workers, right? And so a lot of artisanal skill was displaced. I mean, the Luddites rose up for a reason.

ROSALSKY: Autor says that, at first, the factory jobs that displaced the artisans required less skill and also paid less – so kind of a bummer. But then, machines got more complex, and so did the things they could make. You know, we’re talking automobiles instead of textiles. And so factory owners started to need workers with more skills.

AUTOR: Over time, that work became more skill-demanding because people had to follow formal rules. And if you’re using a lot of expensive equipment and making precise products and using expensive inputs, you need people who are kind of – can follow those rules well. So this created what you might think of as the kind of middle skill, what I would call mass expertise, right?

…

For people who didn’t have four-year college degrees, these were the relatively better-paid jobs, right? They’re better-paid than, for example, food service, cleaning, security and so on. And the reason is that food service, cleaning, security – they’re valuable pursuits. They do, you know, important things in the world, but most people can do them. And so they’re not going to be well remunerated. For work to be well paid, especially in an industrial economy, it needs to be expert work of some sort. By expert, I mean, one, you need a certain body of knowledge or competency to accomplish that – a thing. That thing must be worth accomplishing, right? And not everyone can do it. And so it is the case that the kind of industrial era helped really grow the middle class. It created this tail wind where people with a reasonable amount of education – all of a sudden, it made them highly productive in offices, highly productive in factories, highly productive in sales. And so, yeah, it created this huge rising tide that, you know, was relatively equalizing. Now, I don’t want to say it’s only technology, right? There are institutions that went with this. There’s democracy. There was obviously the system that educated people. But the technology helped.

…

if you’re a highly educated worker, you know, if you’re a doctor or an attorney or a marketer or researcher, those people are highly, strongly complemented by this sort of automation of these information processing and routine tasks. On the other hand, if you are someone who does, like, dexterous, manual work, like food service, cleaning, security, entertainment, recreation, there’s really not much complementarity there at all, right?

…

It doesn’t make you much better, doesn’t make you worse. However, you have lots of people in the middle, who are now being pushed out of those middle-skill occupations. And it’s just not very easy to move up, right? If you’re an adult and you’re displaced from your manufacturing job, it’s very unlikely you’re going to get a law degree or medical degree. So you’re going to more likely end up driving a truck, working in a restaurant, working as a security guard. And so the computer era actually devalued that mass expertise and massively amplified demand for elite expertise, which has been really not so great, right?

…

There’s a big possibility there. So the good scenario is one where AI makes elite expertise cheaper and more accessible, right? So right now, you know, if you want to do a lot of medical procedures, you need a medical degree. That takes a decade, right? And that makes those people scarce, expert and expensive. But you can imagine that with the right tools, you could devolve some of those tasks to people who have – know something about medicine and health care, but they don’t have to have that level of education. And then, they could do much more. And, you know, we already have an example of that, right? So the nurse practitioner – a nurse practitioner’s a nurse who has an additional master’s degree.

…

OK, great. And so nurse practitioners are well paid, right? The median pay is about $150,000 a year. And they do many of the things that only medical doctors were allowed to do, right? They diagnose. They prescribe. They treat, right? And, you know, how is that kind of passable? Partly, it’s a change in, you know, medical norms and scope of practice boundaries. Partly, they’re enabled by technology, right? There’s a machine that says, don’t put those two prescriptions together; you know, that would be a problem. And, you know, this set of symptoms is associated with this constellation of diseases; check the following. And you can imagine many ways in which people with foundational skills in something could use AI to make that expertise go further. So the good scenario is basically where AI lowers the cost of elite expertise, makes it more available, and increases the value of basically the middle-skill workers of the future. That’s my good scenario.

…

But even in this good scenario, know there’s going to be a disruption of people who are currently making – I don’t know – a hundred to $200,000 a year or something like that. All of a sudden, it doesn’t make as much sense to pay those people as much anymore because you have a whole pipeline of people who can now do that job.

AUTOR: That’s correct. It’s possible that, basically, you will see some expensive expert work just less in demand, that you will need fewer managers for certain types of decision-making, that, you know, more, like, legal work will be done by machines as opposed to by lawyers, and that you’ll have lawyers, but they’re supervisory, and there are, you know, fewer of them. So, yeah, I think it’s possible. But, you know, in the long run, that means fewer people will have to go to a college (laughter), which is expensive.

…

ROSALSKY: One scenario – obviously, like, companies who own these systems will get insanely rich. But then, there’s the – also, like, the downstream effects where there’s a whole bunch of industries where a bunch of people used to do the job, but now only you need one or two people to do it.

AUTOR: Yeah.

ROSALSKY: So what do you think about that sort of pessimistic potential future?

AUTOR: I don’t want to rule it out. I mean, you can – so you can imagine a world where, you know, you just need a few superexperts overseeing everything, and everything else is done by machines, right? So that’s one possibility. Another possibility is one where, like, no one’s labor scarce, right? That’s not a good world because then we have lots of productivity, but nobody – who owns it? Just the owners of capital, right? Then, we have to have a revolution and blah, blah, blah. It’s not going to work out well, right? Those things never work out well. So I don’t view those scenarios as highly likely.

One thing to recognize is that we are actually in a period of sustained labor scarcity because of demographics, right? We have very low fertility rates. We have large populations who are retiring. And we have radically restricted immigration. And so the U.S. population is growing at its slowest rate since the founding of the nation. And most industrialized countries – and China as well, by the way – are facing this problem of they’re getting smaller and older, or their populations are not growing. That’s a world where we need a lot more automation actually to enable us to do the things we need to do including care for the elderly. So I’m not worried about us running out of work and running out of jobs to do. I am worried about the devaluation of expertise.

…

I’m worried about a world where no one’s labor scarce. But let me give you, like, an example of what I mean by this. Like, for example, you might say, oh, Waze makes everyone an expert driver, right? But, no, actually, it doesn’t. It doesn’t make anyone an expert driver. It has the expertise, right? So there was a time when London taxicab drivers needed to know all the highways and byways of London, which is a – it took years to master, right? It was an incredible feat of memorization. And then, that made them really expert. They could get you around London better than any other driver. Well, now, you don’t need to know that. You just need a phone, right? And that’s good for passengers. It’s good for consumers. But it devalues the expertise that those drivers.

…

ROSALSKY: OK. So, yeah, that’s one of Autor’s big worries – that what happened to London cabbies kind of happens to the entire labor force – that AI makes human expertise kind of irrelevant. It devalues it. But David Autor doesn’t actually think that will happen, at least not for all workers and not any time soon. He says people still have all of these advantages over AI. Like, we’re more adaptable. We have more common sense. We’re better at relating to other people. Not to mention – we have bodies. We have arms and legs, and we move around in the world. Like, there’s a bunch of things about being a human that still have advantages in the marketplace.

Mr. Autor clearly doesn’t read the academic papers that the AI community releases every single day. Otherwise, he wouldn’t be so optimistic about our supremacy over AI in some of these areas.

But for now, that supremacy exists and, perhaps, it will last long enough to safely reach a golden age of prosperity for our species.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

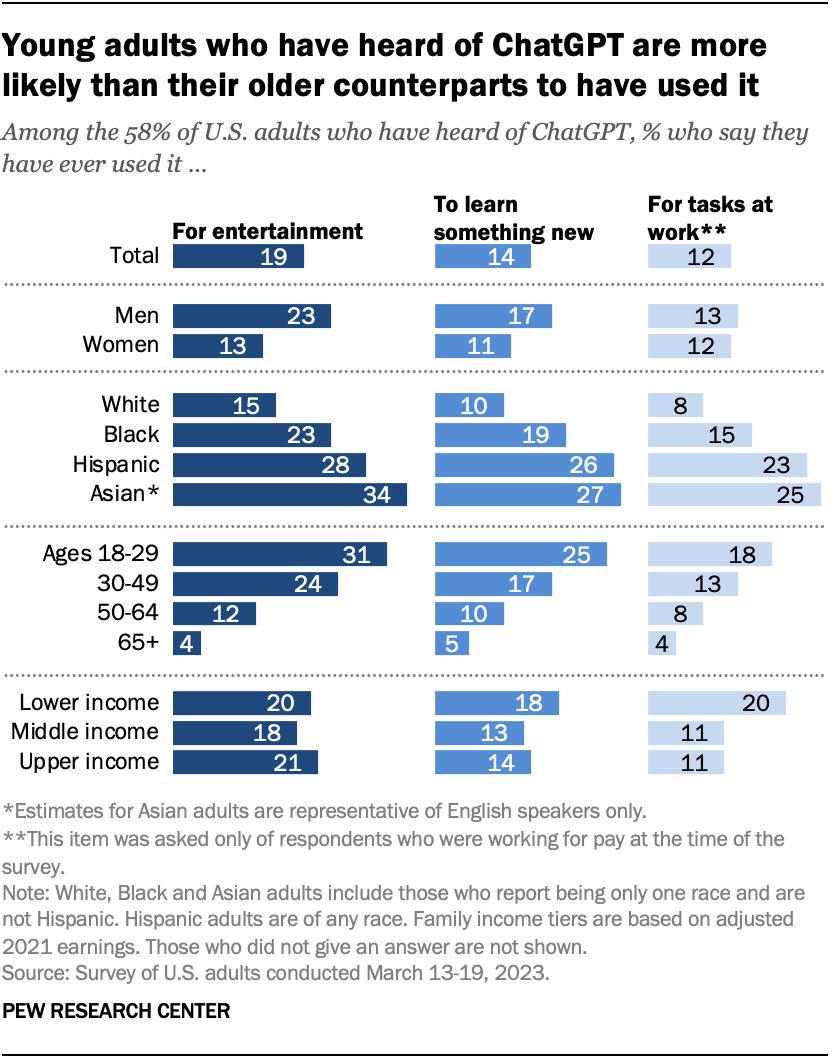

The Pew Research Center, a nonpartisan American think tank, published the results of a new survey, conducted in March 2023, about how many people have actually used ChatGPT.

This is the result:

In other words, one-in-ten adults who have heard of ChatGPT have used it at work.

Pew Research frames the number with a “just” but, to me, it sounds like an astonishing number for a technology that was released just a few months ago.

Also, it remains to be seen whether people are telling the truth:

You have no idea how many coworkers of yours are secretly using GPT-4 to get ahead. https://t.co/AyoEys6RGM

— Alessandro Perilli ✍️ Synthetic Work 🇺🇦 (@giano) May 24, 2023

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

The protagonist of this section for the week is the National Eating Disorders Association (NEDA), the largest nonprofit organization dedicated to eating disorders in the United States.

Chloe Xiang, reporting for Vice:

NEDA, the largest nonprofit organization dedicated to eating disorders, has had a helpline for the last twenty years that provided support to hundreds of thousands of people via chat, phone call, and text. “NEDA claims this was a long-anticipated change and that AI can better serve those with eating disorders. But do not be fooled—this isn’t really about a chatbot. This is about union busting, plain and simple,” helpline associate and union member Abbie Harper wrote in a blog post.

According to Harper, the helpline is composed of six paid staffers, a couple of supervisors, and up to 200 volunteers at any given time. A group of four full-time workers at NEDA, including Harper, decided to unionize because they felt overwhelmed and understaffed.

“We asked for adequate staffing and ongoing training to keep up with our changing and growing Helpline, and opportunities for promotion to grow within NEDA. We didn’t even ask for more money,” Harper wrote. “When NEDA refused [to recognize our union], we filed for an election with the National Labor Relations Board and won on March 17. Then, four days after our election results were certified, all four of us were told we were being let go and replaced by a chatbot.”

…

The chatbot, named Tessa, is described as a “wellness chatbot” and has been in operation since February 2022. The Helpline program will end starting June 1, and Tessa will become the main support system available through NEDA. Helpline volunteers were also asked to step down from their one-on-one support roles and serve as “testers” for the chatbot. According to NPR, which obtained a recording of the call where NEDA fired helpline staff and announced a transition to the chatbot, Tessa was created by a team at Washington University’s medical school and spearheaded by Dr. Ellen Fitzsimmons-Craft. The chatbot was trained to specifically address body image issues using therapeutic methods and only has a limited number of responses.

…

“Please note that Tessa, the chatbot program, is NOT a replacement for the Helpline; it is a completely different program offering and was borne out of the need to adapt to the changing needs and expectations of our community,” a NEDA spokesperson told Motherboard. “Also, Tessa is NOT ChatGBT [sic], this is a rule-based, guided conversation. Tessa does not make decisions or ‘grow’ with the chatter; the program follows predetermined pathways based upon the researcher’s knowledge of individuals and their needs.”

This statement from the spokesperson suggests that they didn’t use a multimillion-dollar generative AI model like GPT-4 to replace people, but an antiquated expert system: a form of artificial intelligence that is based on predefined rules set upfront by humans.

But, oh the irony, just two days later, NEDA is forced to turn off Tessa after one of its users, Sharon Maxwell, reports in an oddly flashy Instagram post that she received harmful advice from the AI.

It seems unlikely that an expert system can suggest the opposite of what it is programmed to suggest, so something isn’t quite right about this story.
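To make the point concrete, here’s a minimal sketch in Python of what a rule-based, guided conversation might look like. To be clear, this is not Tessa’s code, which I haven’t seen: the `PATHWAYS` table, the `step` function, and every reply below are made up for illustration. The only thing it’s meant to show is that a system like this can say nothing beyond what a human wrote in advance.

```python
# Hypothetical sketch of a rule-based "guided conversation".
# Nothing here comes from Tessa: node names, keywords, and replies are invented.
# Every reply is written upfront by a human; the program only picks the next node.
PATHWAYS = {
    "start": {
        "reply": "Hi. Would you like to talk about body image or see support resources?",
        "next": {"body image": "body_image", "resources": "resources"},
    },
    "body_image": {
        "reply": "Thanks for sharing. Would you like a short exercise or support resources?",
        "next": {"exercise": "exercise", "resources": "resources"},
    },
    "exercise": {
        "reply": "Here is a short, pre-written reflection exercise.",
        "next": {},
    },
    "resources": {
        "reply": "Here is a pre-written list of support resources.",
        "next": {},
    },
}

# Used whenever the input matches none of the predefined keywords.
FALLBACK = "Sorry, I can only help with the options listed above."


def step(node, user_text):
    """Return (next_node, reply) for the current node and the user's message."""
    text = user_text.lower()
    for keyword, target in PATHWAYS[node]["next"].items():
        if keyword in text:
            return target, PATHWAYS[target]["reply"]
    # Unknown input: stay on the same node and give the canned fallback.
    return node, FALLBACK


def all_possible_replies():
    """The complete, finite set of things this chatbot can ever say."""
    return {entry["reply"] for entry in PATHWAYS.values()} | {FALLBACK}


if __name__ == "__main__":
    node = "start"
    for message in ["I want to talk about body image", "Give me a diet plan"]:
        node, reply = step(node, message)
        print(f"> {message}\n{reply}")
```

Whatever the user types, the reply always comes from `all_possible_replies()`. If a system like this gives harmful advice, a human wrote that advice into the table, or the system is not as rule-based as advertised.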

The two lessons to be learned here are:

- It’s safe to fire your entire workforce only after you are incredibly confident in your AI. A test with 2,500 users is nowhere near enough.

- If you replace your workforce with AI, it’s very, very, very likely that they will fight back. Reputational damage due to your former employees going to the press, or outright sabotage, should be expected. So hire really good lawyers with the money that you just saved in workers’ salaries.

Now let’s go back to the original article for one last, very important bit worth commenting on:

AI researchers I spoke to then warned against the application of chatbots on people in mental health crises, especially when chatbots are left to operate without human supervision. In a more severe recent case, a Belgian man committed suicide after speaking with a personified AI chatbot called Eliza. Even when people know they are talking to a chatbot, the presentation of a chatbot using a name and first-person pronouns makes it extremely difficult for users to understand that the chatbot is not actually sentient or capable of feeling any emotions.

This is a wrong assumption. As we have seen in many past issues of Synthetic Work, how a chatbot presents itself makes no difference in how people perceive AI.

As we saw in Issue #6 – Putting Lipstick on a Pig (at a Wedding), people developed an attachment to rudimentary forms of AI in controlled experiments in the 1960s, after being explicitly told that they were using an AI.

And, as we saw in Issue #2 – 61% of the office workers admit to having an affair with the AI inside Excel, people fell in love with Replika chatbots even though the service makes it crystal clear that users are talking to an AI.

The presentation is not the problem. The way our brain works is.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

While many people are busy falling in love or getting emotionally attached to AI, others are starting to get seriously concerned about their jobs.

The writers’ strike in LA that we saw in Issue #12 – ChatGPT Sucks at Making Signs didn’t help.

What the OpenAI CEO said about the impact of AI on jobs in front of the US Senate that we saw in Issue #13 – If you create an AGI that destroys humanity, I’ll be forced to revoke your license didn’t help, either.

Emma Goldberg, reporting for the New York Times:

“We’ve never been in a period where the scope of automation is so wide potentially,” said Harry Holzer, an economist at Georgetown. “Historically if your job gets automated, you find something new. With A.I. the thing that’s kind of scary is it could simply grow and take over more tasks. It’s a moving target.”

…

“It’s definitely scary,” said Justin Felt, 41, a customer service worker in Pittsburgh, who has worked for Verizon Fios for nearly 12 years. He feels that employers have not been entirely upfront with their workers about the ways they are incorporating generative A.I. into customer support roles, he said. “It’s definitely taking our work.”

Other interesting comments about policy-making from the article that are quite aligned with the intro of this issue:

Workers could benefit, for example, from paid leave policies that allow them to take time away from their jobs to develop new skills. Germany already has a similar program, in which workers in most German states can take at least five paid days a year for educational courses, an initiative the labor minister recently said he planned to expand.

Another possibility is a displacement tax, levied on employers when a worker’s job is automated but the person is not retrained, which could make businesses more inclined to retrain workers. The government could also offer A.I. companies financial incentives to create products designed to augment what workers do, rather than replace them — for example, A.I. that provides TV writers with research but doesn’t draft scripts, which are likely to be of low quality.

Let me tell you this: after the last seven years focused on artificial intelligence, and after spending at least 12 hours every day reading and learning about it, five paid days a year for educational courses is not even remotely enough to upskill our workforce.

Will companies be willing to invest in a month of training for each employee?

Or will they say what the Ford CEO said in the video interview we discussed in Issue #12 – ChatGPT Sucks at Making Signs:

I don’t think he can upskill his entire workforce quick enough: I don’t think they are going to make it.

Let’s continue with the article:

Workers could benefit, for example, from employer apprenticeships and retraining programs. The accounting giant PwC recently announced a $1 billion investment in generative A.I., which includes efforts to train its 65,000 workers on how to use A.I. What spurred the initiative was the chief executive’s trip to the World Economic Forum’s gathering in Davos, Switzerland, where he heard constant discussion of generative A.I.

“A number of us walking out of that room knew something had changed,” recalled Joe Atkinson, the company’s chief products and technology officer.

PwC’s workers have expressed fears about displacement, according to Mr. Atkinson, especially as their company explores automating roles with generative A.I. Mr. Atkinson stressed, though, that PwC planned to retrain people with new technical skills so their work would change but their jobs wouldn’t be eliminated.

Now. I’m sure that if your company is not doing what PwC is doing, your employees’ fears of displacement will take care of themselves.

They won’t impact their productivity, their loyalty to the brand, or your company’s attrition.

It’s just a phase. They’ll get over it.

After the overwhelming success of last week’s tutorial on how to use GPT-4 to create a presentation (and improve your narrative in the process), this week we try again with a completely different use case.

I thought “What is the thing that people hate doing the most after writing presentations?” and, of course, the answer is attending meetings. But we can’t send an AI to attend meetings on our behalf, yet.

Another detested but much-needed activity in a business environment is writing a procedure.

Procedures are the documentation of business processes. Critical to avoid single points of failure when key employees leave the company, they are also the foundation of a company’s ability to scale its operations.

But writing procedures is a tedious and time-consuming activity. The aforementioned key employee has to make explicit all the knowledge accumulated over the years. And they have to do it in a way that is clear and…