- Accenture announced plans to double its AI staff to 80,000, just three months after shedding 19,000 jobs in a cost-cutting effort.

- India’s Happiest Minds Technologies is planning to hire 1,300 software engineers, the IT services firm’s biggest staff growth push ever.

- The Challenger Job Cuts May 2023 Report highlights 3,900 positions eliminated in the US because of AI.

- St. Paul’s church in the Bavarian town of Fuerth arranged an AI church service that drew hundreds.

- Somebody working in pediatric cancer diagnostics thinks that the only sensible thing to do is to use every means possible, including GPT-4, to enhance their colleagues' work and potentially save the lives of children.

- Salesforce decided that the world needs Sales GPT, Service GPT, Marketing GPT, Commerce GPT, Slack GPT, and many other products with poorly chosen names.

P.s.: This week’s Splendid Edition of Synthetic Work is titled How to prompt to buy a burger.

In it, you’ll read what White Castle, Chegg and Netflix are doing with AI.

Also, in the Prompting section, you’ll learn about two new techniques: Ask for Variants and Choose the Best Variant.

Finally, in The Tools of the Trade section, you’ll discover why Vivaldi is the best browser in the world.

Well, for once, I’m glad to see that you survived the very intense introductions of Issue #15 and Issue #16.

I hope you’ll see how important the topics we discuss on Synthetic Work are, and that you’ll talk about them, and about this newsletter, with your friends, your colleagues, and even strangers.

As I recently wrote on social media, Synthetic Work is, by far, the most demanding R&D endeavor I’ve ever started. Not only because artificial intelligence is an enormous topic. But also because, to do it right, I have to operate in a Dr Jekyll and Mr Hyde fashion:

- with the Free Edition of the newsletter, I warn people about the impact of AI on jobs and the economy while, with the Splendid Edition, I help people to learn and adopt AI so they can control and master that impact.

- on the research front, on one side I test/evaluate/implement all the AI models I have access to (so very technical) while, on the other side, I focus on very non-technical things like regulation and policy-making, legal implications, licensing, bias, business models, go-to-market (GTM), competition, etc.

- on the consulting front, on one side I help organizations build programs to upskill their workforce and refine their AI adoption strategy while, on the other side, I advise them on the risks (bias, prompt injection, information leaks, non-compliance, etc.) of deploying AI in production.

I hope that this effort will pay off and the information I’m sharing and the tools I’m building will help you to make better decisions for yourself, your family, your company, and your community.

And yes. I’ve started offering Advisory & Consulting services in a new section of Synthetic Work: https://synthetic.work/consulting.

The demand is simply enormous and this is a more efficient way to answer the most frequently asked questions I keep receiving.

If you need help with the most transformational technology, and the biggest business opportunity, of our times, reach out.

End of the plug. End of the intro.

Alessandro

The first story worth your attention this week is about Accenture, one of the largest consulting firms in the world, which is mass-firing and mass-hiring in a way that I’m afraid we’ll see more and more often in the future.

Jesse Levine, reporting for Bloomberg:

Accenture Plc announced plans to double its AI staff to 80,000, just three months after shedding 19,000 jobs in a cost-cutting effort.

The professional-services company will invest $3 billion in its Data & AI practice over the next three years to help companies develop the new strategies they’ll need to capitalize on the boom in artificial intelligence, Accenture said in a statement on Tuesday. Much of the investment will focus on helping clients maximize generative AI, which creates visual works or text based on simple prompts.

…

Accenture’s announcement comes after the company cut 2.5% of its workforce in March, with over half of the cuts affecting individuals in non-billable corporate functions including human resources, financial and legal departments.

The jobs that Accenture cut are the ones that can be most easily replaced with AI, as we learned from multiple academic studies discussed in Issue #4 – A lot of decapitations would have been avoided if Louis XVI had AI, Issue #5 – You are not going to use 19 years of Gmail conversations to create an AI clone of my personality, are you?, and others.

And part of the job of the 40,000 new AI experts that Accenture is hiring will be to improve today’s AI models so that the 19,000 professionals who are gone will not be missed.

Eventually, some of those 80,000 AI experts will be replaced by AI too. Not necessarily because Accenture has over-hired compared to the demand for AI services, but because the generative AI models will become so mature that a lot of the adjustments that today require human intervention will not be necessary anymore.

In the meantime, expect more and more companies to go through this workforce reconfiguration exercise. The race to the bottom will make it inevitable.

—

The second story worth your attention this week is about Happiest Minds, a professional services company listed on the Indian stock market that, like Accenture, is hiring a large number of AI experts.

Alex Gabriel Simon, reporting for Bloomberg:

India’s Happiest Minds Technologies Ltd. is planning to hire 1,300 software engineers, the IT services firm’s biggest staff growth push ever, as it builds out its artificial intelligence business to cater to clients in sectors such as education and health care seeking to tap the emerging technology, co-founder Joseph Anantharaju said in an interview. It now employs about 5,000 people.

Happiest Minds is also in partnership talks with OpenAI backer Microsoft Corp. for better access to ChatGPT, Anantharaju said, as it seeks an edge over rivals in India’s massive IT industry. While AI still accounts for a small portion of Happiest Minds’ sales, it is one of the fastest-growing segments for the 12-year-old company.

The firm, which gets its revenue from helping companies digitize their services, is building tools that run on top of ChatGPT, customized to its clients’ needs, he said. These include safeguards to allow businesses to use ChatGPT without sharing proprietary data and intellectual property, a major privacy concern for its customers.

Just like a gazillion startups are rushing to build non-defensible products on top of OpenAI models, a gazillion consulting firms are rushing to build non-defensible services to help customers use those models.

They are all driven by the hope of gaining the so-called first-mover advantage. Whoever comes first has a chance to grab a large chunk of the total addressable market. Which, in the case of AI, is…everything digitally produced by humans.

Except that the offering of these firms is not defensible. Professional services firms don’t control the foundation AI models they depend on, and the business value they add on top of these models is gradually absorbed into the models themselves as the technology matures.

They could and will specialize in improving the efficacy of these models via a process known as fine-tuning, but even that process will eventually become trivial for their customers to do on their own.

Because of this, at some point, these services will become so cheap that offering them will be financially unsustainable.

The quality of the consulting services will go further down (if that’s even possible) and companies all around the world will be left with AI models that are insecure, biased, and not compliant with the regulations.

This is why, on more than one occasion, I have publicly advocated for end-user organizations to build their own AI teams and develop a decent competence in artificial intelligence.

It’s advice I had never given before in my career.

In fact, while most people have suggested a similar approach for other technology waves (virtualization, cloud computing, etc.), I always advocated for the exact opposite.

For almost two decades, including the time I served as a Gartner research director, I recommended that the biggest firms in the world focus on their core business rather than get distracted by building competence in emerging technologies.

Sure enough, year after year, company after company, I have seen my former clients and many other organizations publicly vow to return to their core business and let go of everything else.

Yet, today, and exclusively about AI, I see no other choice but to advocate for the opposite.

I believe that not building in-house AI expertise is a strategic mistake, and the consequences of such a choice will be profound.

—

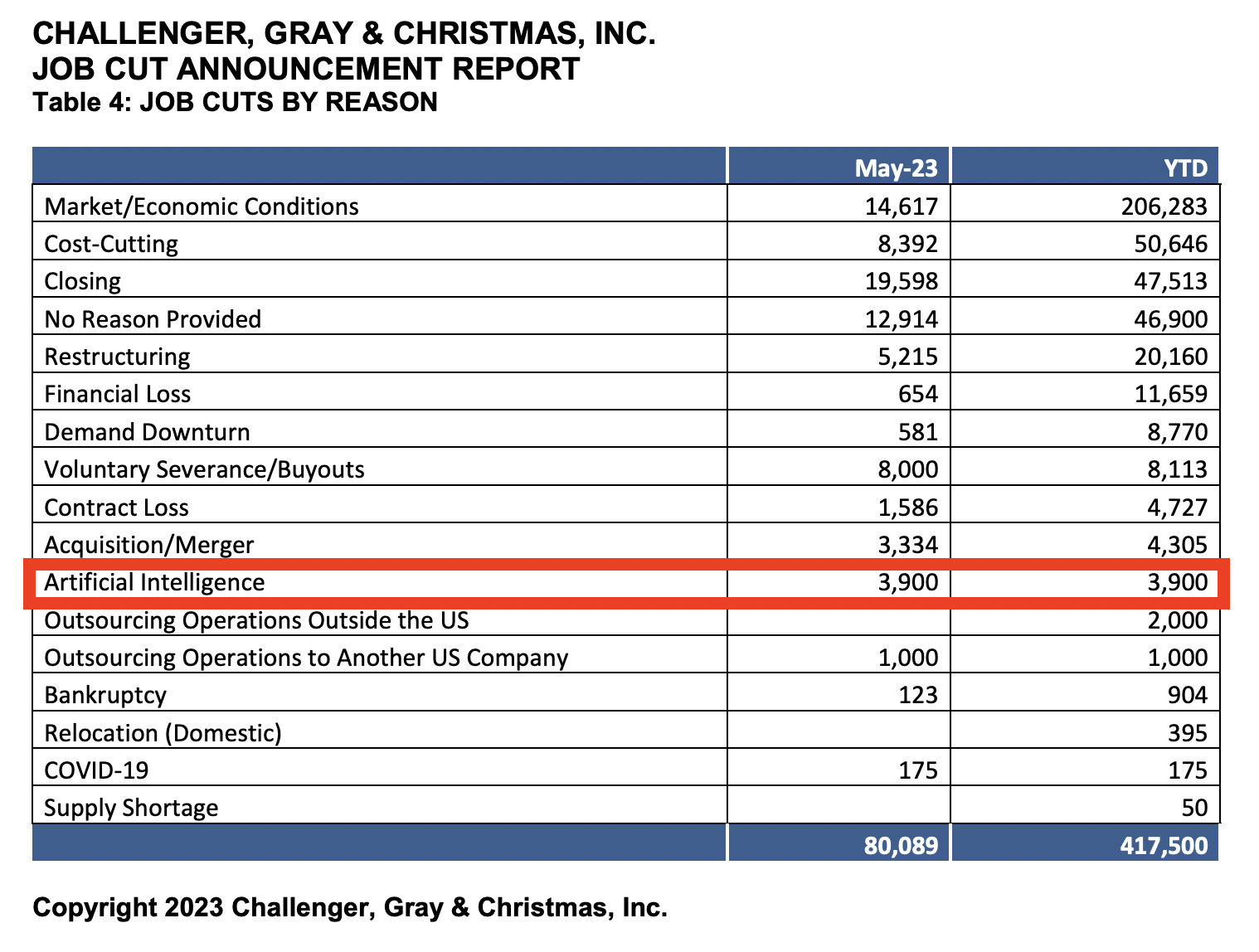

The last story worth your attention this week is about the executive coaching firm Challenger, Gray & Christmas Inc., which has started reporting jobs lost to AI for the first time in its Challenger Job Cuts Report.

More specifically, their May 2023 report highlights 3,900 positions eliminated in the US because of AI.

The report, which has been published for 30 years, doesn’t detail what positions were eliminated. An executive at the company only said that all positions were in the tech industry.

We’ll see what happens in June.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

For the long-time readers of Synthetic Work: do you remember Issue #2 – 61% of the office workers admit to having an affair with the AI inside Excel?

It was in March. It seems like a million years ago.

In that issue, I told the story of the religious leaders of the world gathering to talk about artificial intelligence.

At that time, I quoted a deeply meaningful portion of the chapter titled The Data Religion, from Homo Deus, by the Israeli historian Yuval Noah Harari. If you missed it, I highly recommend going back and reading it.

I also said: “Today’s religious people have a job, too.”

With that in mind, here’s Kirsten Grieshaber, reporting for Associated Press:

The artificial intelligence chatbot asked the believers in the fully packed St. Paul’s church in the Bavarian town of Fuerth to rise from the pews and praise the Lord.

The ChatGPT chatbot, personified by an avatar of a bearded Black man on a huge screen above the altar, then began preaching to the more than 300 people who had shown up on Friday morning for an experimental Lutheran church service almost entirely generated by AI.

…

The 40-minute service — including the sermon, prayers and music — was created by ChatGPT and Jonas Simmerlein, a theologian and philosopher from the University of Vienna. “I conceived this service — but actually I rather accompanied it, because I would say about 98% comes from the machine,” the 29-year-old scholar told The Associated Press.

…

The AI church service was one of hundreds of events at the convention of Protestants in the Bavarian towns of Nuremberg and the neighboring Fuerth, and it drew such immense interest that people formed a long queue outside the 19th-century, neo-Gothic building an hour before it began.

…

“I told the artificial intelligence ‘We are at the church congress, you are a preacher … what would a church service look like?’” Simmerlein said. He also asked for psalms to be included, as well as prayers and a blessing at the end. “You end up with a pretty solid church service,” Simmerlein said, sounding almost surprised by the success of his experiment.

…

The entire service was “led” by four different avatars on the screen, two young women, and two young men. At times, the AI-generated avatar inadvertently drew laughter as when it used platitudes and told the churchgoers with a deadpan expression that in order “to keep our faith, we must pray and go to church regularly.”

Some people enthusiastically videotaped the event with their cell phones, while others looked on more critically and refused to speak along loudly during The Lord’s Prayer.

Heiderose Schmidt, a 54-year-old who works in IT, said she was excited and curious when the service started but found it increasingly off-putting as it went along.

“There was no heart and no soul,” she said. “The avatars showed no emotions at all, had no body language and were talking so fast and monotonously that it was very hard for me to concentrate on what they said.”

Oh, there will be heart and soul. I guarantee you that. And that will be the problem.

The power to manipulate human emotions and opinions through the ELIZA effect, which I’ve mentioned in many issues of Synthetic Work, won’t be exploited only by advertisers and politicians.

Even if you don’t see it today, I guarantee you that the latest AI models I work with are increasingly capable of expressing emotions:

- Generated text can easily convey emotion today. You just need to know the right prompt to use.

- Synthetic voices are also already capable of expressing emotions. I showed you an example in the promo video of Fake Show, an AI-generated podcast that I’m building.

- Synthetic faces still require a bit of work, as these models are still learning how human facial muscles move, but we are getting there.

Once all these pieces are in place, there are endless possibilities to lure people into believing anything. Criminals, advertisers, governments, and leaders of existing religions and new religions will try to exploit this power. Not necessarily in this order.

And yes, priests, too, might want to start looking for a new job.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

A Reddit user, working in pediatric cancer diagnostics, writes:

After I started using GPT-4, I’m pretty sure I’ve doubled my efficiency at work. My colleagues and I work with a lot of Excel, reading scientific papers, and a bunch of writing reports and documentation. I casually talked to my manager about the capabilities of ChatGPT during lunch break and she was like “Oh that sounds nifty, let’s see what the future brings. Maybe some day we can get some use out of it”. And this sentiment is shared by most of the people I’ve talked to about it at my workplace. Sure, they know about it, but nobody seems to be using it. I see two possibilities here:

- My colleagues do know how to use ChatGPT but fear that they may be replaced with automation if they reveal it.

- My colleagues really, really underestimate just how much time this technology could save.

Or, likely a mix of the above two.

In either case, my manager said that I could hold a short seminar to demonstrate GPT-4. If I do this, nobody can claim to be oblivious about the amount of time we waste by not using this tool. And you may say, “Hey, fuck’em, just collect your paycheck and enjoy your competitive edge”. Well. Thing is, we work in pediatric cancer diagnostics. Meaning, my ethical compass tells me that the only sensible thing is to use every means possible to enhance our work to potentially save the lives of children.

So my final question is, what can I expect will happen when I become the person who let the cat out of the bag regarding ChatGPT?

The reason why this is interesting is that, in this case, AI is perceived not as a threat to jobs but as a life-saving tool.

It’s also interesting because, even in non-mission-critical work environments, I wonder how many people are keeping quiet about their knowledge of AI to avoid facing the implications of a wider adoption.

Since OpenAI published its official app on the App Store, ChatGPT has been the most downloaded app in the world. So, a lot of people know.

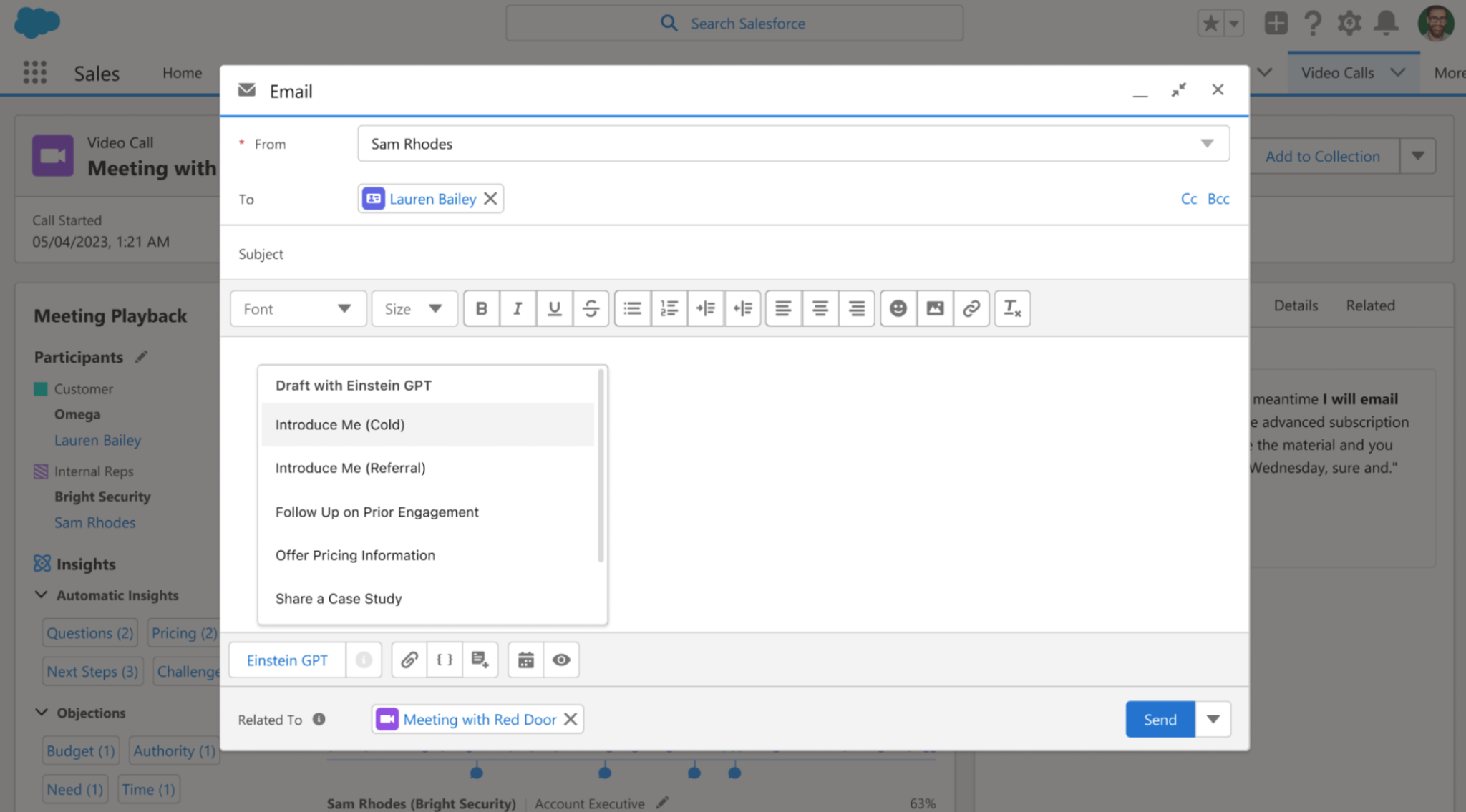

One of my favorite sections of the newsletter returns, and its protagonist of the week is Salesforce.

Kyle Wiggers, reporting for TechCrunch:

The Salesforce-built models in AI Cloud power new capabilities in Salesforce’s flagship products, including Data Cloud, Tableau, Flow and MuleSoft. There’s nine models in total: Sales GPT, Service GPT, Marketing GPT, Commerce GPT, Slack GPT, Tableau GPT, Flow GPT and Apex GPT.

…

Sales GPT can quickly auto-craft personalized emails, while Service GPT can create service briefings, case summaries and work orders based on case data and customer history. Marketing GPT and Commerce GPT, meanwhile, can generate audience segments for targeting and tailoring product descriptions to each buyer based on their customer data, or provide recommendations like how to increase average order value.

…

Slack GPT, Tableau GPT, Flow GPT and Apex GPT are a bit more specialized in nature. Slack GPT and Flow GPT let users build no-code workflows that embed AI actions, whether in Slack or Flow. Tableau GPT can generate visualizations based on natural language prompts and surface data insights. As for Apex GPT, it can scan for code vulnerabilities and suggest inline code for Apex, Salesforce’s proprietary programming language.

…

One glaring omission in Cloud AI is an image-generation model along the lines of DALL-E 2 and Stable Diffusion. Caplan said that it’s in the works, acknowledging the usefulness for creating marketing campaigns, landing pages, emails and more. But he added that there’s a range of barriers — from copyright to toxicity — Salesforce aims to overcome before it releases it.

I’m confident that Salesforce, like many other organizations, means well.

They want to empower their customers to do a lot more, faster, and better thanks to generative AI. Which is exactly what I hope to achieve with the Splendid Edition of Synthetic Work.

However, as I read this description and look at the screenshot below, I can’t help but think that this will boost a lot of people that shouldn’t be boosted.

In Issue #13 – If you create an AGI that destroys humanity, I’ll be forced to revoke your license, I theorized a scenario that starts like this:

Today, the most vocal AI evangelists will tell you that AI is not going to have any negative effect on our society. AI will only make things better. People will write better, will present better, will draw better, will code better.

Perhaps. But…

What if this is true ONLY for people that are already inclined to better themselves and/or are already gifted in terms of writing, presenting, drawing, coding?

AI as a skill amplifier.

What if all the other people will simply use AI to conserve energy? Less work, mental and physical. Less time dedicated to the job, partner, kids. More time for myself.

AI as a free time generator.

Now I ask you:

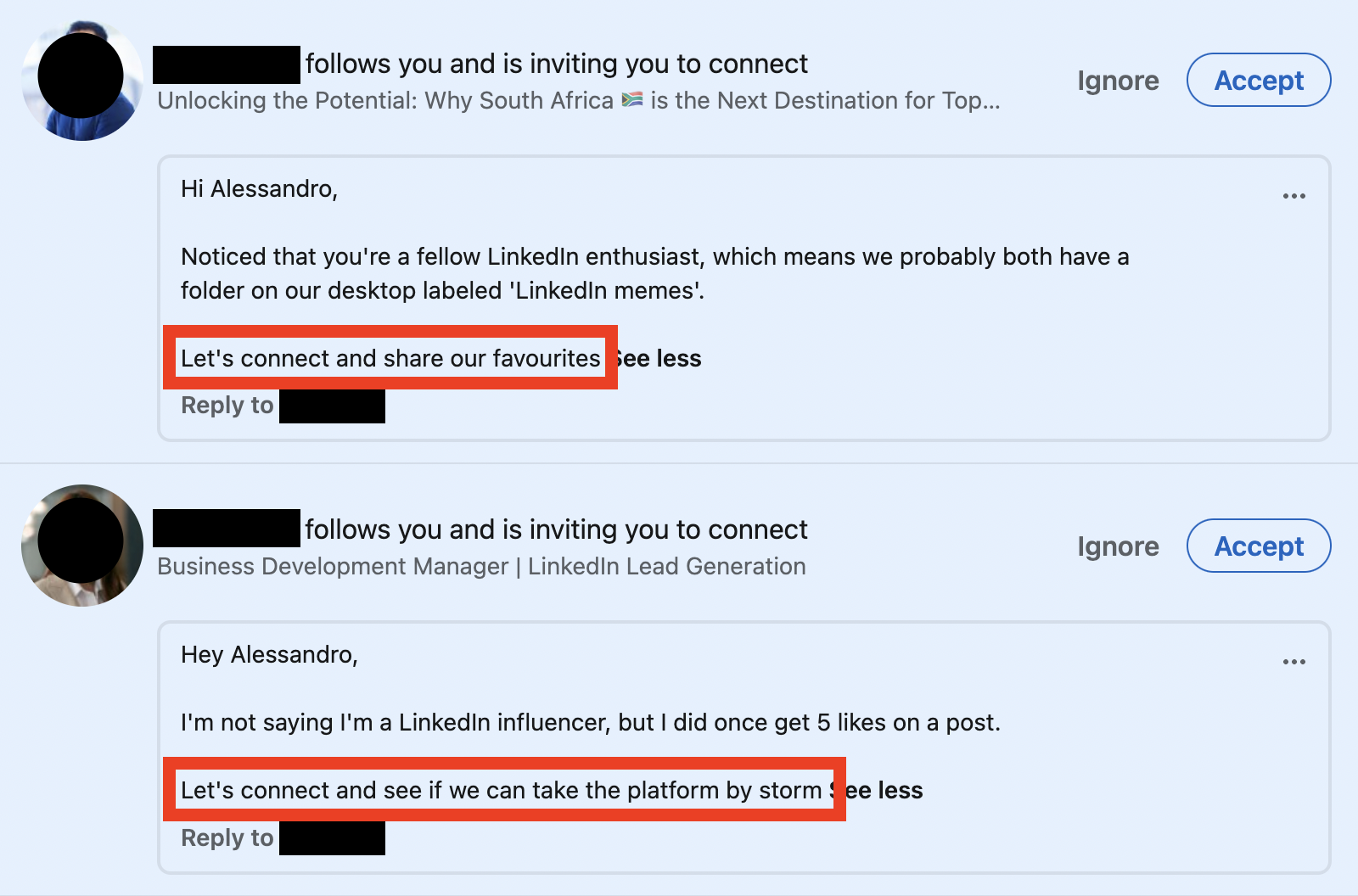

How would you feel if you knew that 50% of all emails you receive every day from other people are generated by AI?

And, literally as I type these words, these two LinkedIn invites come in at almost the same time:

I receive hundreds a week. I now expect thousands a week.

So, a follow-up question comes to mind:

Is this the world we want to live in?

P.s.: speaking of ChatGPT for sales, marketing, etc., the same author of the article above published a post titled ChatGPT prompts: How to optimize for sales, marketing, writing, and more.

If you are a paying subscriber of Synthetic Work and have used the new How to Prompt database, hopefully you can see the enormous difference between the prompts in this article and the techniques I share with you in the Splendid Edition of this newsletter.

Today we take a look at two techniques, one very simple, and one very complicated. The latter builds on the former.

Let’s start with the simple one.

Ask for Variants

When you write your prompt, you are psychologically prepared to either accept the answer you get from the AI or iterate on that answer until you get a satisfactory outcome.

Totally normal. That’s the way we interact with people, so we bring the same approach to our interactions with the AI.

But there’s something that large language models like ChatGPT can do and people can’t (at least, not easily):…