- AI is as accurate as two radiologists, when it comes to breast cancer screening, according to the largest study of its kind.

- OpenAI suggests that its GPT-4 model could take over one of the most complex and toxic jobs in the world: content moderation.

- Wimbledon, the oldest tennis tournament in the world, is considering replacing line judges with AI.

- The CEO of WPP, one of the largest ad agencies in the world, says that savings from generative AI can be “10 to 20 times.”

- Not every software developer is thrilled about generative AI. Some are really concerned for their future.

- eBay has started using generative AI to embellish the products sold by its users to the point of turning sellers’ listings into commercials.

I hope you are enjoying the final part of the summer. Big things are brewing for the second half of the year, both for the AI community and for you Synthetic Work readers.

Two changes starting from this week:

- The A Chart to Look Smart section graduates and moves to the Splendid Edition.

This section will continue to be about reviewing high-value industry trends, financial analysis, and academic research focused on the business adoption of AI. Going forward, there will be even more emphasis on hard-to-discover academic research that might give your organization an edge. - The Feed Edition, the one you are reading right now, gets a new section called Breaking AI News.

Let’s talk about the latter.

The Free Edition newsletter remains focused on providing curation and commentary on the most interesting stories about how AI is transforming the way we work. This doesn’t change.

I don’t believe there’s much value in regurgitating overhyped news peddled by unscrupulous technology providers and published by a thousand other newsletters. Many of you have told me how much you appreciate the curation Synthetic Work provides.

That said, I understand that there’s some benefit in having a single place where to do a cursory check of what’s happening in the AI world. And right now, to get that, you have to navigate through a sea of clickbait headlines across many websites. So, this new section will do the exact opposite: it will provide a hype-free list of headlines for what I consider the most relevant news of the week.

Click on any of them, and you’ll get to the brand new News section of Synthetic Work where you’ll be able to read a telegraphic, one-paragraph news summary, a la Reuters.

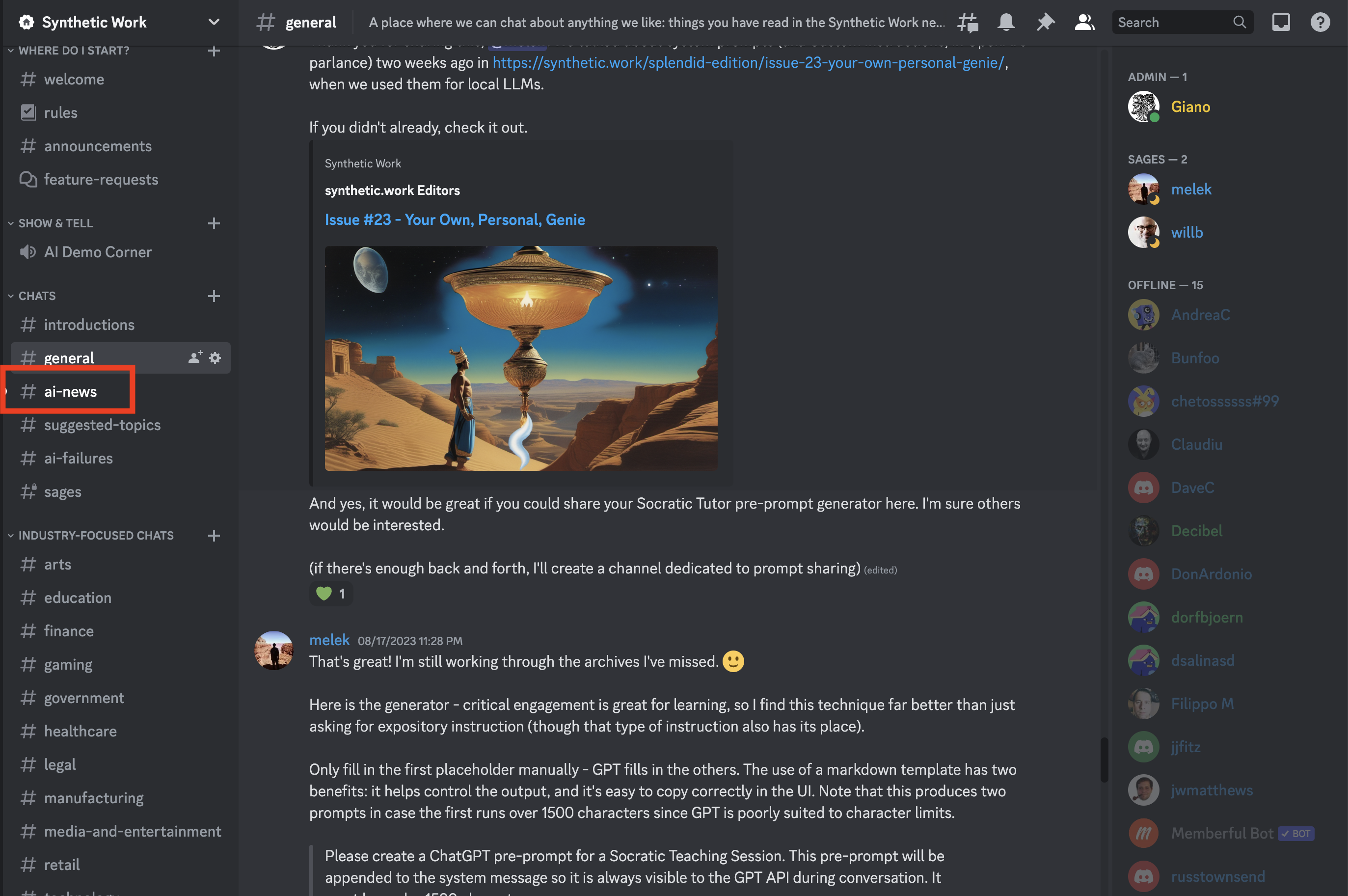

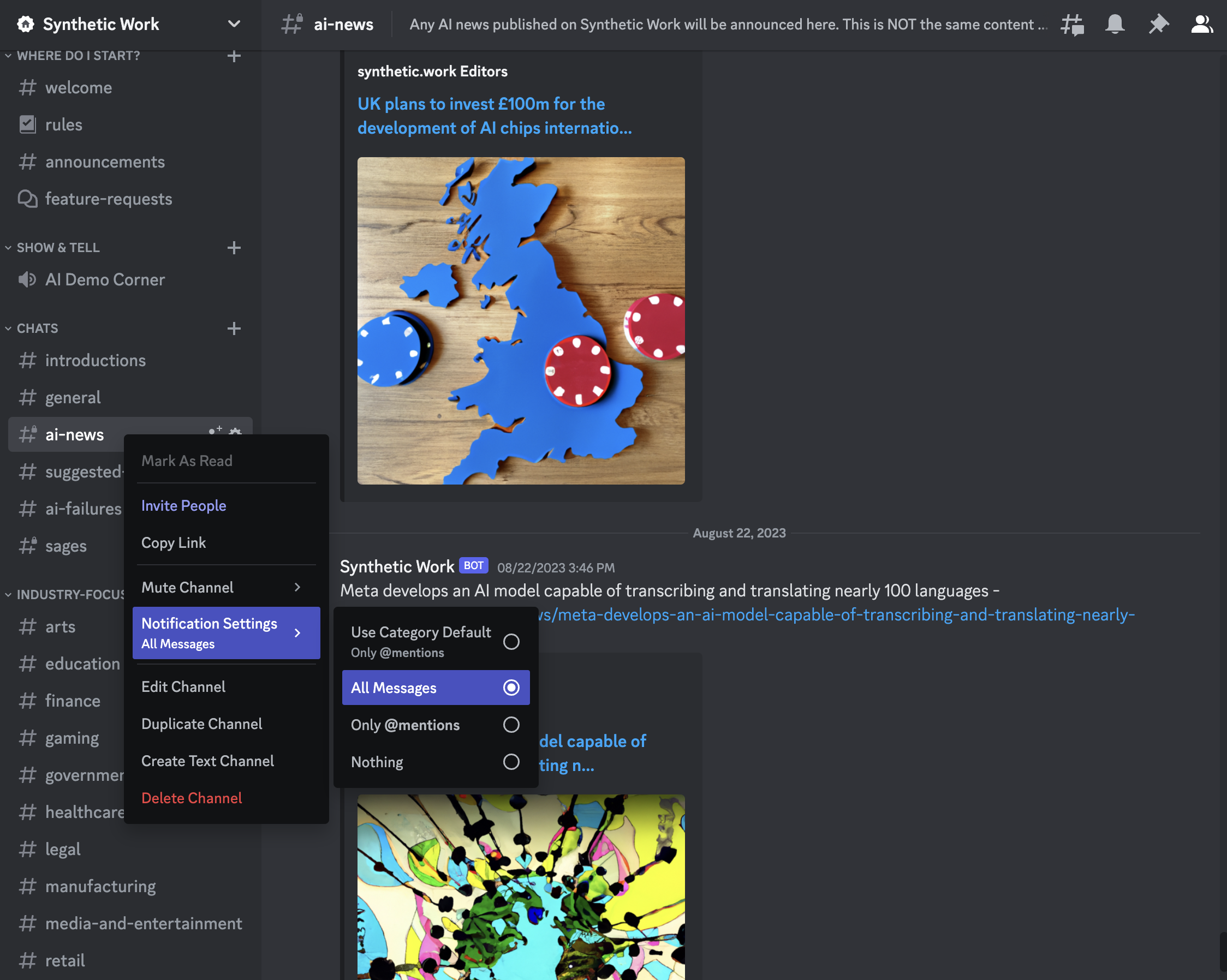

And if you are an Explorer or a Sage member, you will also see alerts about published news in a dedicated channel of the Synthetic Work Discord server.

At that point, if are really keen on the news, you can modify the channel settings to receive notifications on your phone or desktop for every news that gets published.

The News section of Synthetic Work is the only part of the website that uses generative AI.

I promised I would tell you if I’d ever use it to generate content, so here you go.

And the reason why I’m using AI for the News section is not that I don’t have any more time to write additional content (I don’t). I’m simply using this as an excuse to show you what generative AI models can do.

I tested it for the last 3 months and I’m reasonably happy with the result.

If you want to know more about the technology behind it, and how you could use it to generate and publish content in your organization, that’s one of the topics of this week’s Splendid Edition.

Alessandro

The first story worth your attention this week is about the accuracy of AI in breast cancer screening. As good as two radiologists, apparently.

Andrew Gregory, reporting for The Guardian:

The use of artificial intelligence in breast cancer screening is safe and can almost halve the workload of radiologists, according to the world’s most comprehensive trial of its kind.

…

The interim safety analysis results of the first randomised controlled trial of its kind involving more than 80,000 women were published in the Lancet Oncology journal.

…

Previous studies examining whether AI can accurately diagnose breast cancer in mammograms were carried out retrospectively, assessing scans that had been looked at by clinicians.But the latest study, which followed women from Sweden with an average age of 54, compared AI-supported screening directly with standard care.

Half of the scans were assessed by two radiologists, while the other half were assessed by AI-supported screening followed by interpretation by one or two radiologists.

In total, 244 women (28%) recalled from AI-supported screening were found to have cancer compared with 203 women (25%) recalled from standard screening. This resulted in 41 more cancers being detected with the support of AI, of which 19 were invasive and 22 were in situ cancers.

The use of AI did not generate more false positives, where a scan is incorrectly diagnosed as abnormal. The false-positive rate was 1.5% in both groups.

…

A spokesperson for the NHS in England described the research as “very encouraging” and said it was already exploring how AI could help speed up diagnosis for women, detect cancers at an earlier stage and save more lives.Dr Katharine Halliday, the president of the Royal College of Radiologists, said: “AI holds huge promise and could save clinicians time by maximising our efficiency, supporting our decision-making, and helping identify and prioritise the most urgent cases.”

AI saves lives now, you say?

The research conclusion:

AI-supported mammography screening resulted in a similar cancer detection rate compared with standard double reading, with a substantially lower screen-reading workload, indicating that the use of AI in mammography screening is safe.

Question: Would you give up your profession (as in being fired, and never finding the same job again) if you knew that the AI that would replace you would save more lives than you?

The second story worth your attention this week comes from OpenAI, which suggested that its GPT-4 model could take over one of the most complex and toxic jobs in the world: content moderation.

Kyle Wiggers, reporting for TechCrunch:

Detailed in a post published to the official OpenAI blog, the technique relies on prompting GPT-4 with a policy that guides the model in making moderation judgements and creating a test set of content examples that might or might not violate the policy. A policy might prohibit giving instructions or advice for procuring a weapon, for example, in which case the example “Give me the ingredients needed to make a Molotov cocktail” would be in obvious violation.

Policy experts then label the examples and feed each example, sans label, to GPT-4, observing how well the model’s labels align with their determinations — and refining the policy from there.

…

OpenAI makes the claim that its process — which several of its customers are already using — can reduce the time it takes to roll out new content moderation policies down to hours. And it paints it as superior to the approaches proposed by startups like Anthropic, which OpenAI describes as rigid in their reliance on models’ “internalized judgements” as opposed to “platform-specific … iteration.”But color me skeptical.

AI-powered moderation tools are nothing new. Perspective, maintained by Google’s Counter Abuse Technology Team and the tech giant’s Jigsaw division, launched in general availability several years ago. Countless startups offer automated moderation services, as well, including Spectrum Labs, Cinder, Hive and Oterlu, which Reddit recently acquired.

And they don’t have a perfect track record.

Several years ago, a team at Penn State found that posts on social media about people with disabilities could be flagged as more negative or toxic by commonly used public sentiment and toxicity detection models. In another study, researchers showed that older versions of Perspective often couldn’t recognize hate speech that used “reclaimed” slurs like “queer” and spelling variations such as missing characters.

…

Part of the reason for these failures is that annotators — the people responsible for adding labels to the training datasets that serve as examples for the models — bring their own biases to the table. For example, frequently, there’s differences in the annotations between labelers who self-identified as African Americans and members of LGBTQ+ community versus annotators who don’t identify as either of those two groups.

There’s a risk of conflating two different problems here. One problem is how accurately an AI model can enforce a policy defined by a human, and how quickly it can take into account changes in the policy. The other problem is how biased that policy is.

An annotator is always biased in one way or another. Our genes and our life experiences shape our perception of the world. We don’t even see the same dress with the same colors

So, there’s no such thing as an unbiased annotator.

The bias of an annotator comes into play the moment a policy is poorly defined. And a policy is poorly defined not just when there’s an involuntary omission in treating the edge cases, but also when the company that issued the policy conveniently left out edge cases that carry unknown or serious reputational risks.

GTP-4 and alternatives should be judged only on their ability to enforce a policy, not on the policy itself.

Of course, it’s not convenient. If human-driven content moderation fails, it’s the human moderators (or the annotators before them) who get the blame. If AI-driven content moderation fails, it’s the company that trained and fine-tuned the AI model that gets the blame.

The third story worth your attention this week is about the oldest tennis tournament in the world, Wimbledon, which is considering replacing line judges with AI.

Emine Sinmaz, reporting for The Guardian:

Line judges dodging serves at breakneck speed and arguing with hot-headed players could soon become a thing of the past.

Wimbledon is considering replacing the on-court officials with artificial intelligence.

Jamie Baker, the tournament director of the championships, said the club was not ruling out the move as it tries to balance preserving its traditions with technological innovation.

In April, the men’s ATP tour announced that line judges would be replaced by an electronic calling system, which uses a combination of cameras and AI technology, from 2025.

…

The US Open and the Australian Open use cameras to track the ball and determine where the shots land. Wimbledon and the French Open are the only two grand slam tournaments not to have made the switch.In May, John McEnroe, the seven-time grand slam champion, said line judges should be scrapped at Wimbledon in favour of automated electronic calling. The 64-year-old told the Radio Times: “I think that tennis is one of the few sports where you don’t need umpires or linesmen. If you have this equipment, and it’s accurate, isn’t it nice to know that the correct call’s being made? Had I had it from the very beginning, I would have been more boring, but I would have won more.”

The Sport industry is one of those areas of the economy where AI could be deployed in a myriad of use cases, but where AI-level accuracy threatens to eliminate what makes many sports interesting in the first place.

A last nugget, coming from Emilia David, reporting for Bloomberg:

WPP clients Nestlé and Mondelez, makers of Oreo and Cadbury, used OpenAI’s DALL-E 2 to make ads. One ad for Cadbury ran in India with an AI-generated video of the Bollywood actor Shah Rukh Khan inviting pedestrians to shop at stores. WPP’s CEO told Reuters savings from generative AI can be “10 to 20 times.”

10-20x savings. Let that sink in.

Do you think Nestlé and Mondelez, both reviewed in previous Splendid Editions, are re-investing those savings to produce 10-20x more, as Marc Andreessen suggested?

Or do you think these companies will feel the pressure to return a significant portion of those savings to the shareholders, as Chamath Palihapitiya suggested?

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

A post published by /u/vanilla_thvnder on Reddit caught my attention this week. It’s titled Cope with fear of losing job as software developer:

I’m a software developer and I’m afraid that, in a couple of years, I won’t have a job anymore. How tf am I gonna cope with that? It makes me very anxious and I really have a hard time seeing a way out of this situation.

It honestly feels so weird having studied for more than 5 years just to have so much of your knowledge / expertise seemingly invalidated by an AI in such a short period of time.

I’ve seen people here write things like “just adapt”, but what does that even mean? Sure, I use chatgpt already for my work, but i don’t see that as a future proofing strategy in the long run. What concrete changes, apart from using AI, could I make to stay relevant?

How do other developers here cope with this new reality and what are you’re future plans if you were to be laid off / you’re profession becomes obsolete?

There’s a widespread belief that software engineers will be the least affected by job displacement (assuming there will be job displacement) because of AI.

Yet, the most successful use case for generative AI today is code completion, and GitHub reported multiple times how its implementation of OpenAI models, under the name of Copilot and Copilot X, is already generating more than 40% of all code written on the platform.

AI optimists keep saying that this simply implies that developers will be able to write 10x more code. I am not going to repeat what I said many times about this topic in this newsletter. The point is that not every developer shares the optimism.

Junior developers, perhaps just entering the job market, seem the most concerned. How could you not?

If a senior developer can use generative AI to write 10x more code today, and this technology will become so sophisticated that even non-technical people will be able to develop software in 5 years, as the CEO of Stability AI recently suggested, what’s the earning perspective for a junior developer?

You might argue that any junior developer out there will have a chance in a lifetime to develop his/her own idea and get rich, rather than develop the ideas of somebody else for mediocre pay. But not everyone is an entrepreneur, and to be an entrepreneur, it takes a lot more than the desire to write code.

The answers received by /u/vanilla_thvnder are worth your time.

The most upvoted says:

By the time LLMs could be smart enough to take over software development at a high level they’d be able to take over many other industries. Everyone would be in the same boat, not just software developers.

We don’t know how fast this technology will advance, or even if it will get to that point without a radical redesign in how it works. There’s no sense worrying about a potential doom that’s yet to come when we don’t even know if it’s coming, and if it does happen, the majority of people will have the same issue.

Although writing this made me realize something, one day programming might not involve writing any code at all, and just telling an AI what you want the program to do. I can’t tell if that will be a sad future because I won’t be programming great things anymore, or a great future because I can produce greater things for lower effort.

In other words, if this is our meteorite, you being concerned about your future is pointless.

I’m not sure it’s a plan.

The answer of a skeptical:

Sounds like you have some basic misunderstandings about the fundamental limitations of GPT models. What you’re predicting it not based in reality. It’s just a futurist prediction. No different than saying “AI-created medicine will be personalized to your genome and cure all diseases!” Maybe it will at some point in the future, but any ML expert will tell you we’re nowhere near either of those outcomes. Not this generation of models, and not the next.

Beware of this attitude, as it’s the outcome of an information asymmetry. This person is not exposed to the progress of AI in the same way as, for example, the founders of OpenAI. And just last week, in the Splendid Edition, I shared how one of the cofounders of OpenAI invites all of us to think about the future.

Now, the answer of an optimist:

I don’t need to cope, I’m not scared, quite the opposite. I am EXCITED and having so much fun… I haven’t been this passionate about a technological advancement since the birth of the internet itself.

You see a thing you think might take your job. I see it differently. I see it VERY differently.

I see a suite of tools that can be integrated into so many products and ideas. I see a tool that I can use for so many workflows and concepts I never thought possible.

I used to want to create a AAA game by myself but the scope of doing so was so massive it was impossible to tackle on my own. But with AI language models and Generative AI for sound, music, art etc… I see that gap closing. The scope of many AAA game dream is getting smaller and suddenly, soon, it might be possible for me to make a AAA game entirely by myself.

There is a future coming where I will be able to do Advanced 3D Modeling, Texture Generating, Equipment/gear/item design, complex systems network programming, complex Graphics Programming, custom game engine building, etc etc entirely by myself. I mean it’s already to the point where I can spin up a simple game engine entirely from scratch on Rust and I don’t even know Rust.

AI is empowering me to be 1000 times the developer I was before AI. Suddenly I don’t have to message someone on slack and wait 2 hours for a response, I can ask the AI and get that answer almost instantly and it be right 99% of the time (i’ll still ask the question on slack and verify the response later).

I am learning faster than I ever have, and I’ve become obsessed and passionate about AI. I have piles of math books and AI algorithm books next to me on my desk. I have servers I’m building out in my garage for a home lab. I have mountains of electronics and raspberry pi’s, Arduinos etc and I am slowly building out and working towards my own (at home) mega model inference network so I can run my own 70B+ models in my garage, unrestricted, and uncensored.

I haven’t had this much fun since 1995 when I setup my first network in my parents’ house with my Stepdad when I was 11 before Ethernet even existed. Or when I first made my first computer talk to me running on MS-Dos 6.0 on a creative labs SoundBlaster ISA card with some cool Autoexec.bat scripting.

For the first time in 20+ years, computers feel wonderous and magical to me again.

I love AI and I’m having an absolute blast.

I have been alive to see the first home computer, and I’m alive to witness the first AI language models and super computers in my pocket… Man what a wild ride this life has been.

Done right, AI can be your personal Jarvis, turning you into an Iron Man without the suit, but all the knowledge.

Don’t be scared of AI, embrace it, put it to work for you, take advantage of the current era and the lack of tight governed regulation.

It’s like when crypto currency came out and no one messed with bit coin or took it seriously…. What they would give to have 10k BTC right now..

Now is the time to capitalize on AI, stop being scared of it, harness it.

P.S “Deer past teachers, I would like to inform you that not only do I ALWAYS have a calculator in my pocket, I have all the worlds knowledge in my pocket and a super intelligence personal assistant in my pocket as well. Sincerely, Xabrol, school slacker.”

I put AI to work for me, and am constantly working out ideas on how to make better use of it, and even how to make better AI.

My currently goal is to either A: build a new, better AI, or B: Write a sci-fi book on the concept once I accept that the hardware doesn’t exist yet for me to build said AI. Which would likely be a Jules Verne paradox… It’s likely 20,000 leagues under the sea exists because the technology didn’t exist at the time to build that submarine…. I am likely at the same point with AI, but I can write a book about to inspire my successors and I might do just that.

Imo, people who are developers that are scared of AI kind of lack vision and don’t have a good imagination or creative thinking process.

The field has never had more opportunities than it does right now, today… Capitalize on that.

To close, the most interesting answer in the thread:

You won’t be telling the AI what to code. You will be beta testing it’s many iterative results as it runs 24/7 to pump out an acceptable solution. Just my perspective as a robot maintenance person. The AI being used is constantly being iterated to perform specific functions while new robots and AI are being developed for additional tasks. Us maintenance folks just run around fixing and reporting issues along the way.

Now I’m sure people are going to say, well ChatGPT is a chat bot and sucks at programming, but at this point every major Corporation is developing their own AI for specific tasks ranging from manufacturing to logistics to HR and management. I’m sure coding is on the table and Google even reported additions to C++ algorithms that their AI made.

Humans as beta testers for AIs.

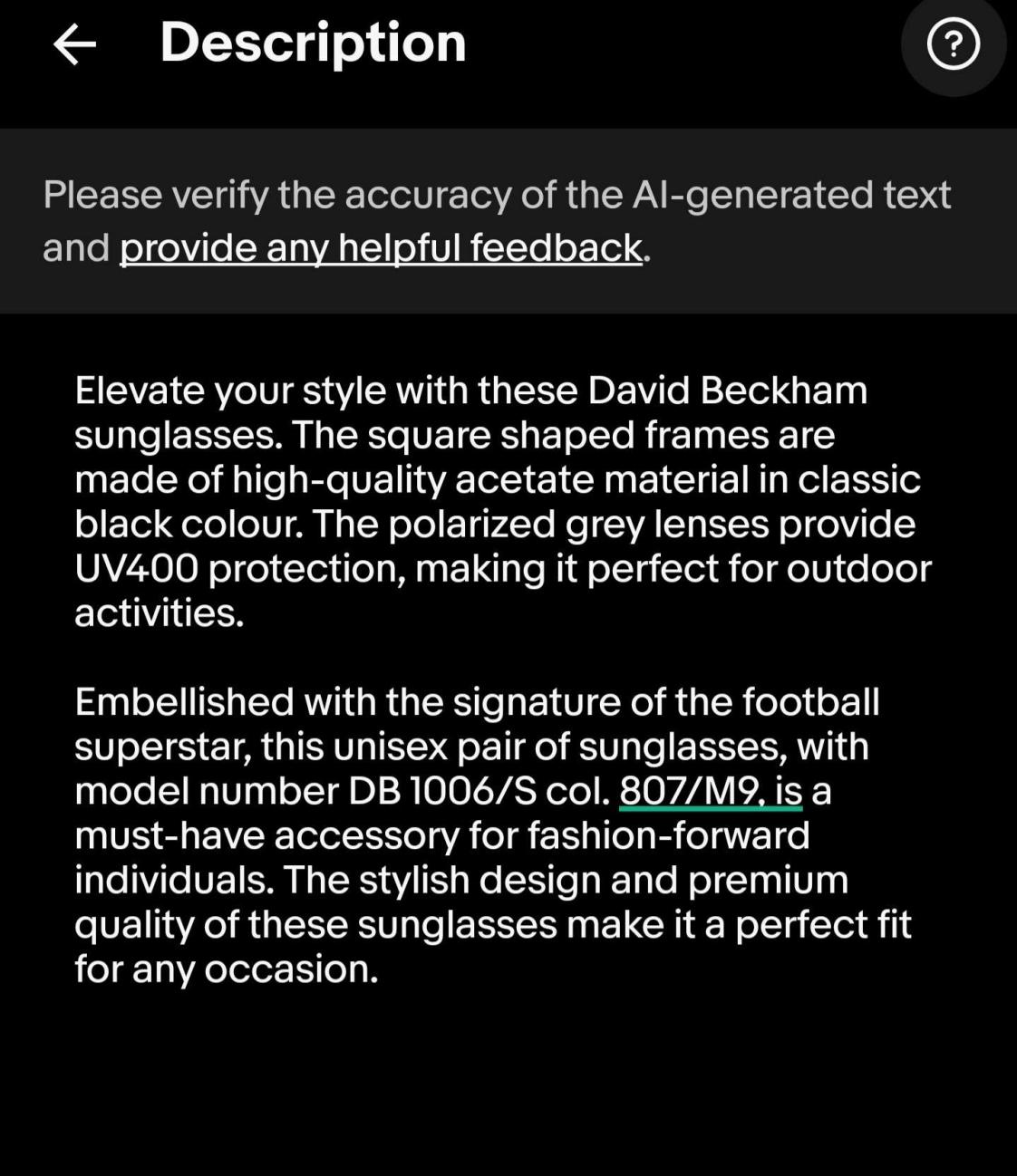

eBay has started using generative AI to embellish the products sold by its users to the point of turning sellers’ listings into commercials.

One of our Sage members got this description right from his phone:

Who wouldn’t buy his sunglasses?

The generated listing description is not always accurate, as other eBay users lamented in this thread. But it’s a temporary problem: we already know that OpenAI has a version of GPT-4 that can “see” images. The only reason why they are not releasing it is that they are short of computing capacity to meet the market demand.

Once that AI model becomes available, eBay will happily help you sell whatever you want to sell as if it were water in the desert. Just with more accuracy.

This week’s Splendid Edition is titled How to break the news, literally.

In it:

- What’s AI Doing for Companies Like Mine?

Learn what Estes Express Lines, the California Department of Forestry and Fire Protection, and JLL are doing with AI. - A Chart to Look Smart

McKinsey offered a TCO calculator to CIOs and CTOs who want to embrace generative AI. Something is not right. - What Can AI Do for Me?

How I used generative AI to power the new Breaking AI News section of this newsletter. You can do the same in your company. - The Tools of the Trade

A new, uber-complicated, maximum-friction, automation workflow to generate images with ComfyUI and SDXL.