- Intro
  - A new YouTube channel and an upcoming podcast

- What Caught My Attention This Week
  - Project Gutenberg, Microsoft, and MIT worked together to create an audiobook version of 5,000 open access books.
  - A new bill in the US state of California could force companies to hire a trained human safety operator for self-driving trucks.
  - Freelance job websites are seeing an explosion of demand for AI skills.

- The Way We Work Now
  - Comedians have started using AI to write jokes.

- How Do You Feel?
  - Voice actors discover their voices cloned and used to say dirty things in video games.

- Putting Lipstick on a Pig
  - Dating websites want to use AI to coach people on how to flirt online.

After a surprising number of people pushed me, relentlessly and for years, to start a YouTube channel, I finally capitulated.

This channel will be a complement to Synthetic Work, not a replacement of any sort.

And it will follow the opposite logic of Synthetic Work: while the newsletter focuses on long, in-depth content that you may want to archive, discuss with your team, or research further, the YouTube channel will offer short, to-the-point videos, each covering one thing only.

Even the format is different.

As some of you know, I already produce a weekly video series in collaboration with a leading tech publisher called 01net: Le Voci dell’AI.

That is a 10-minute pill, in Italian, where I mostly comment on the news about artificial intelligence and the business implications of what’s being announced. Nothing to do with the laser focus of Synthetic Work on the impact of AI on jobs.

And so, for this new YouTube channel, I wanted a format different from Synthetic Work and different from Le Voci dell’AI.

I thought it would be interesting to interview successful peopl…

I’m joking.

We have enough interviewers in the world, and they are all wonderful.

No.

I thought it would be interesting to answer your questions. And not just about artificial intelligence, but also about many other emerging technologies that I dedicate my time to.

I regularly get questions from the readers of Synthetic Work, clients, former colleagues, friends, and random strangers on Reddit, LinkedIn, or X.

So why not answer them in a video so that others could benefit from the answers as well?

That’s it. That’s the idea.

I put together a team, invited them to my house, and we recorded the first episodes to see how it would work.

It’s a unique Q&A format (you’ll see why over time) which we’ll use to answer your questions in every episode.

Feel free to ask anything you want about AI and other emerging technologies. It can be a question about the topic we cover in this newsletter or about something else entirely. It can be a business-oriented question, a competition-related question, a product question, a technical question, a question about my cat…anything you need to know to succeed.

And don’t worry about being judged. Your identity will remain anonymous unless you specify otherwise.

Just send an email to [email protected]

We’ll choose the best questions and answer them. And if you know me, you know what to expect: strong opinions and straightforward answers.

Subscribe to the channel and don’t miss the first video we’ll publish on Tuesday.

Wednesday, Sep 20, at 4pm BST, I’ll livestream a podcast with Dror Gill, a top generative AI expert who also happens to be a reader of Synthetic Work.

It’s titled Jobs 2.0 – The AI Upgrade

We want to talk about three things:

- What is the real impact of AI on jobs.

- How to address workforce fear, uncertainty, and doubts, and upskill people.

- How AI is impacting the career outlook of our kids.

We’ll also have a Q&A session at the end, so feel free to join us live and ask your questions.

Find all the details here: https://perilli.com/podcasts/jobs-2-0-the-ai-upgrade/

See you (in video) soon.

Alessandro

The first thing that caught my attention this week is a collaboration between Project Gutenberg, Microsoft, and MIT to create an audiobook version of 5,000 open access books.

The researchers behind this project released a short paper about it with some interesting information:

An audiobook can dramatically improve a work of literature’s accessibility and improve reader engagement. However, audiobooks can take hundreds of hours of human effort to create, edit, and publish. In this work, we present a system that can automatically generate high-quality audiobooks from online e-books. In particular, we leverage recent advances in neural text-to-speech to create and release thousands of human-quality, open-license audiobooks from the Project Gutenberg ebook collection. Our method can identify the proper subset of e-book content to read for a wide collection of diversely structured books and can operate on hundreds of books in parallel.

Our system allows users to customize an audiobook’s speaking speed and style, emotional intonation, and can even match a desired voice using a small amount of sample audio. This work contributed over five thousand open-license audiobooks and an interactive demo that allows users to quickly create their own customized audiobooks.

Last week, to show you how mature synthetic voices have become, I unveiled an audio version of the Free Edition: Issue #28 – Can’t Kill a Ghost.

So this story is perfect to further validate the point I tried to make last week: synthetic voices are ready for prime time and readily accessible to anyone.

Of course, there’s a little problem.

Readily accessible technology that can parallelize the creation of thousands of audiobooks takes away jobs.

Microsoft researchers try to spin this as a positive thing in their paper:

Traditional methods of audiobook production, such as professional human narration or volunteer-driven projects like LibriVox, are time-consuming, expensive, and can vary in recording quality. These factors make it difficult to keep up with an ever-increasing rate of book publication.

…

LibriVox is a well-known project that creates open-license audiobooks using human volunteers. Although it has made significant contributions to the accessibility of audiobooks, the quality of the produced audiobooks can be inconsistent due to the varying skills and recording environments of the volunteers. Furthermore, the scalability of the project is limited by the availability of volunteers and the time it takes to record and edit a single audiobook. Private platforms such as Audible create high-quality audiobooks but do not release their works openly and charge users for their audiobooks.

Audible charges users because, besides making a profit, it also pays professional voice actors.

The argument that professional narrators cannot keep up with the ever-increasing rate of book publication (especially now that books are being written with the help of large language models like GPT-4) is a moot one.

Synthetic voices will not be used just to help professional narrators cope with the scale of book publishing. They will exterminate the profession.

And professional narrators have understood this since the beginning of this year, when Apple introduced synthetic voice narration for a subset of the books in its Apple Books platform.

And if it’s not cost and scalability issues that will exterminate the profession, market demand will. Generative AI can be used to clone the voices of celebrities and singers, who can comfortably retire at the age of 15 and live off royalties for the rest of their lives.

Who wouldn’t want all his/her audiobooks narrated by Morgan Freeman?

And now that we have prematurely declared the profession of professional narrator dead, feel free to browse the collection of books that Microsoft and MIT have put together.

The second thing that caught my attention this week is an attempt to pass a new bill in the US state of California that would impose the employment of a trained human safety operator for self-driving trucks.

Rebecca Bellan, reporting for TechCrunch:

In a blow to the autonomous trucking industry, the California Senate passed a bill Monday that requires a trained human safety operator to be present any time a self-driving, heavy-duty vehicle operates on public roads in the state. In effect, the bill bans driverless AV trucks.

AB 316, which passed the senate floor with 36 votes in favor and two against, still needs to be signed by Gov. Gavin Newsom before it becomes law. Newsom has a reputation for being friendly to the tech industry, and is expected to veto AB 316.

In August, one of the governor’s senior advisers wrote a letter to Cecilia Aguiar-Curry, the bill’s author, opposing the legislation. The letter said such restrictions on autonomous trucking would not only undermine existing regulations, but could also limit supply chain innovation and effectiveness and hamper California’s economic competitiveness.

Advocates of the bill, first introduced in January, argue that having more control over the removal of safety drivers from autonomous trucks would protect California road users and ensure job security for truck drivers.

…

The authors of the bill previously told TechCrunch they don’t want to stop driverless trucking from reaching California in perpetuity — just until the legislature is convinced it’s safe enough to remove the driver.

Why is this interesting?

Because the “ensuring job security for truck drivers” argument is thrown in there seemingly as an afterthought.

Now, is it an afterthought and the real concern is safety, as it seems from the last sentence of the quote above? Or is it the other way around: the real concern is job security and the safety argument is just a convenient excuse?

Whichever it is, once a few US states start approving driverless trucks, many, many other countries will follow. And short of a mass slaughter of humans due to AI miscalculations, there will be no turning back. With or without California’s participation.

Self-driving technology is not perfect yet. It has been “not perfect yet” for years. But betting that it never will be in our lifetime is foolish.

And so, what will happen to the millions of car and truck drivers out there?

The techno-optimist we quoted a few Issues ago would say: “They will be free to go and do greater things.”

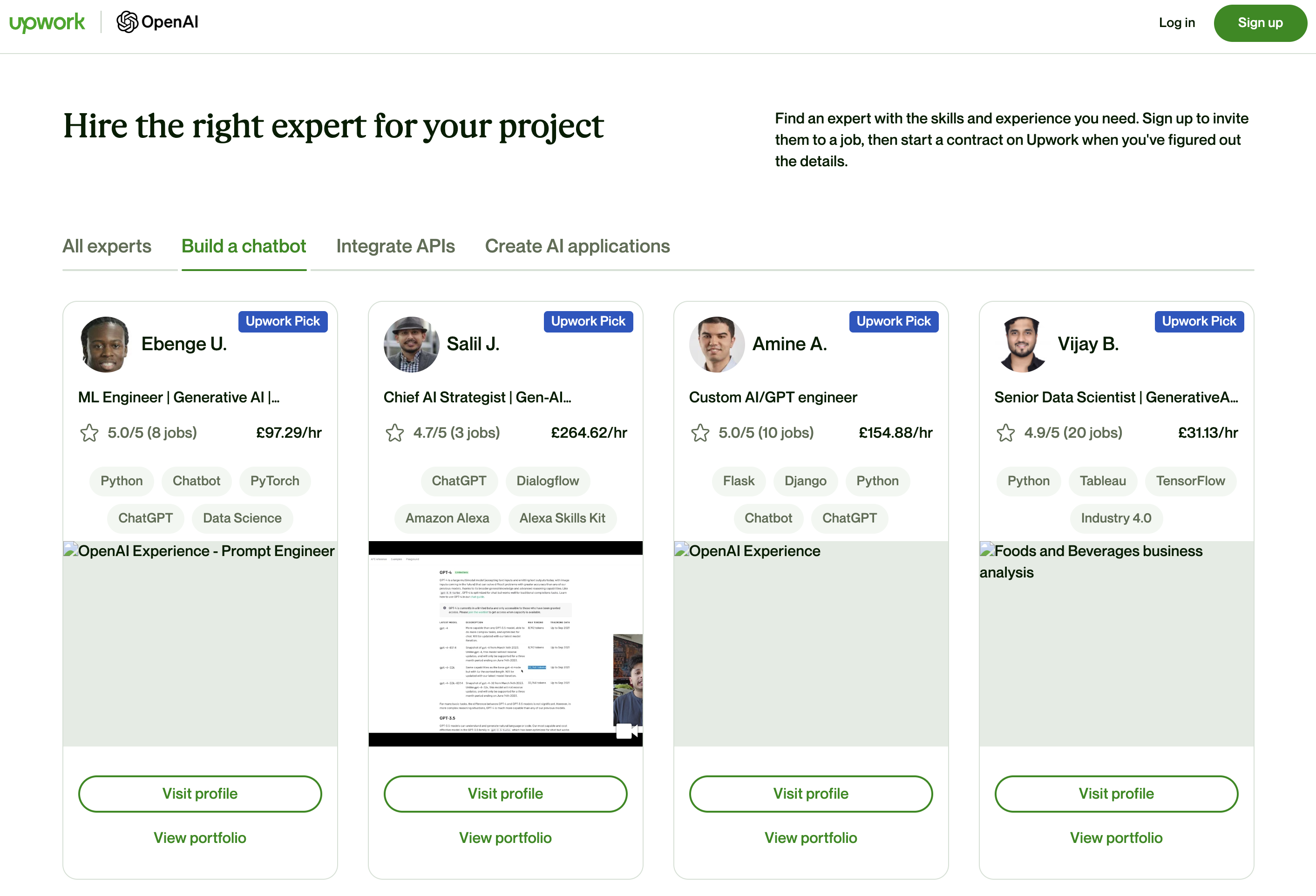

The third thing that caught my attention this week is the explosion of demand for AI skills on freelance job websites like Upwork.

Sean Michael Kerner, reporting for VentureBeat:

Ever since OpenAI’s ChatGPT dramatically entered the AI scene in November 2022, there has been an explosion of interest and demand in AI skills — and particularly OpenAI skills. A new partnership announced today (July 31) between OpenAI and Upwork aims to help meet the demand.

The OpenAI Experts on Upwork services is a way for OpenAI users to get access to skilled professionals to help with AI projects. The two organizations worked together to design the service to identify and help validate the right professionals to help enterprises get the AI skills that are needed.

…

“We’ve seen widespread adoption of generative AI on the Upwork platform across the board,” Bottoms said. “Gen AI job posts on our platform are up more than 1,000% and related searches are up more than 1500% in Q2 2023 when compared to Q4 2022.”

…

Bottoms said that Upwork partnered with OpenAI to identify the most common use cases for OpenAI customers — like building applications powered by large language models (LLMs), fine-tuning models and developing chatbots with responsible AI in mind.

This is probably the most positive AI impact on jobs we’ve seen so far. But be careful: I wouldn’t put my company in the hands of a freelancer to do model fine-tuning or chatbot development.

As I said many times before, one of the most valuable things companies can do now is build an internal AI team that is capable of in-house fine-tuning.

The bonus thing that caught my attention this week is the video of a huge 3D printer printing, in titanium, a complicated part designed by artificial intelligence.

Even if you don’t care much about algorithmic design, this is worth watching to understand how AI is infiltrating every aspect of our economy:

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Even comedians are starting to use AI in their line of business.

Vanessa Thorpe, reporting for The Guardian:

This summer’s Edinburgh festival fringe lineup of acts has taken up the threat of artificial intelligence and run with it.

…

London-based comedian Peter Bazely has confessed to being “out of ideas”, so has turned to AI for help in creating a “relatable” show at the Laughing Horse venue. As a result, he plans to play straight man to a computer-generated comic called AI Jesus – also the name of his show.

…

Equally unafraid of an algorithm is a show at Gilded Balloon Teviot called Artificial Intelligence Improvisation, hot from the Brighton fringe. Presented by Improbotics theatre lab co-founder Piotr Mirowski, a research scientist on Google’s DeepMind project, the show features both actors and bots responding to audience prompts as chatbots compete with humans to the best punchline. Behind the theatrics lies a serious purpose, according to Mirowski. The future security of any performer who relies on their imagination rests on the public’s appetite for quality, he argues. “We do not use humans to showcase AI; instead we use AI, demonstrating its obvious limitations, to showcase human creativity, ingenuity and support on the stage,” he clarified this weekend.

As always, these attempts to show the limitations of today’s AI models, often fueled by job insecurity, are poorly framed.

GPT-3.5-Turbo is less than one year old. GPT-4 was released in March.

Of this year.

Yes.

It feels like it was released 50 years ago, but no. It’s just six months old.

And we already have rumors of a new large language model developed by DeepMind/Google AI that is significantly more powerful than GPT-4.

And then, you know that GPT-5 is coming? And 6? And so on?

So, on a performance stage or in the boardroom, our framing cannot be “Oh, look at how bad AI is at this task. It will never replace me in this job.”

To make that claim, we need to see at least three generations of large language models failing at the task, year over year.

If we don’t think this way, we are just wasting a lot of energy reassuring ourselves and others about something that we have no evidence to support.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

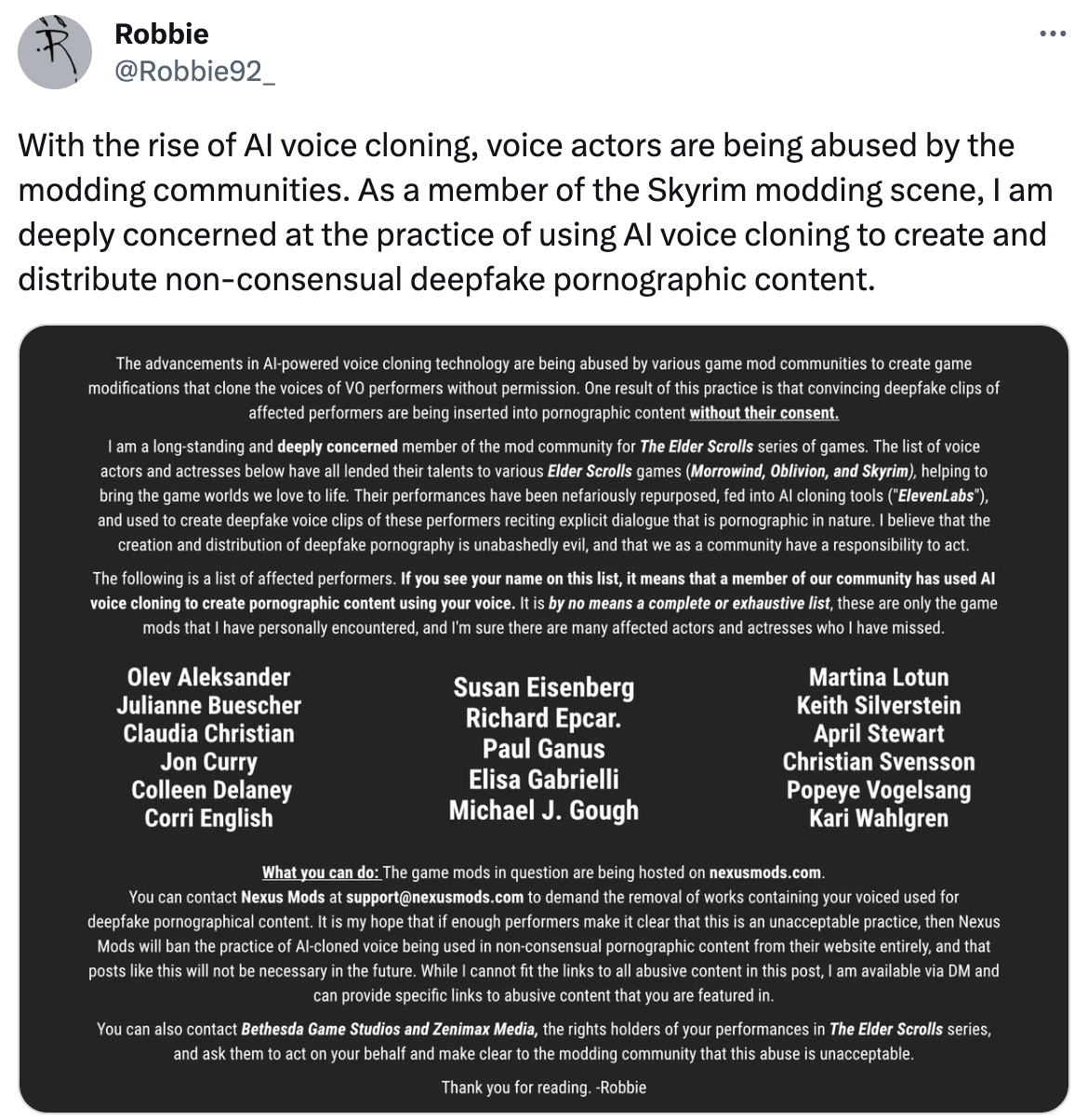

In Issue #20 – £600 and your voice is mine forever, we talked about the growing number of lawsuits involving voice actors who find out their voices are being cloned and used to compete against them in the marketplace.

But there’s another aspect of voice cloning that is worth discussing.

Ed Nightingale, reporting for Eurogamer:

“Is my voice out there in the modding and voice-changing community? Yes. Do I have any control over what my voice can be used for? Not at the moment.”

David Menkin most recently starred in Final Fantasy 16 as Barnabas Tharmr, following work in Lego Star Wars, Valorant, Assassin’s Creed and more. And like many other voice actors in the games industry, he’s concerned about the increasing use of AI.

“AI is a tool, and like any tool, it requires regulation,” Menkin says. “We simply want to know what our voices will be used for and be able to grant or withdraw consent if that use is outside the scope of our original contract. And if our work is making your AI tool money, we want to be fairly remunerated.”

…

A tweet earlier this month from Skyrim modder Robbie92_ highlighted the issue of actors having their voices used by modding communities for pornographic content. “As a member of the Skyrim modding scene, I am deeply concerned at the practice of using AI voice cloning to create and distribute non-consensual deepfake pornographic content,” they wrote, including a list of affected performers.

Some popular voice actors found out from that tweet that their voices were being used in that way. Imagine that.

The main point here, one that I probably didn’t emphasize enough in these first six months of Synthetic Work, is that it’s incredibly easy to clone a human trait with generative AI.

How you sound, how you look, your choice of words.

Humans are algorithms themselves, and we are the sum of a multitude of patterns that define our appearance and our behaviour. Generative AI learns our patterns better than any other technology we have ever invented. And it allows anybody to replicate our patterns trivially.

Given enough data, which is less and less as weeks pass and the AI community makes huge progress, generative AI can clone anything about any of us.

This is a central point in the hypothesis that AI will replace us in the workplace for many professions. I’ve been working to show you how incredibly easy it is to clone a person for some time now.

You’ll see what’s possible in a future Splendid Edition.

We previously discussed how dating apps are using AI to embellish the profiles of their users and how much more they could do. But out of the many scenarios we contemplated, I didn’t see this one coming.

Emily Chang, interviewing Bumble CEO Whitney Wolfe Herd for Bloomberg:

“The average US single adult doesn’t date because they don’t know how to flirt, or they’re scared they don’t know how,” Wolfe Herd said on this week’s episode of The Circuit With Emily Chang. “What if you could leverage the chatbot to instill confidence, to help someone feel really secure before they go and talk to a bunch of people that they don’t know?”

Something tells me that lack of flirting skills is not the reason why the average US single adult doesn’t date, but OK.

Let’s continue:

Humanity could use some assistance. The US Surgeon General declared loneliness an epidemic, with more than half of adults reporting feelings of loneliness.

Something tells me that dating and loneliness are not correlated in the way it’s portrayed here, but OK.

There’s more:

AI could improve the quality of matches by, as she put it, “supercharging fate.”

…

“We’re building an entire relationship business.”

The full video, titled Modern Love, perfect for a Black Mirror episode, is here.

While the whole interview is interesting if you don’t know the story of Bumble, or its business model, this one quote at around 16:00 is what matters:

“Is there anything about an AI-powered dating future that makes you worry?”

…

“You just have to engineer things to operate within certain guardrails and boundaries, and you have to steer things in the right direction. So, is the world suggesting that the whole world replaces human connection with a chatbot? If that is truly the case, I promise you, we have bigger issues than Bumble. I don’t think you will ever replace the need for real love and human connection.”

So these dating apps are embracing AI and evolving to make us look better, to make us come across better, and to make us win more, by presenting us to an audience that is more likely to find us highly attractive, interesting, loving, etc.

To achieve the goal, they will have to know more about us, profiling us more extensively than today. And then, progressively, the effort to find a partner might be reduced to zero.

But before you get to zero, somebody will start thinking: “Wait a second. If we are getting all this data about this user, and we are learning so much more about him/her, why don’t we skip the whole matching partner circus and just create a chatbot that is a perfect match for him/her according to the same parameters we would use to find a human partner?”

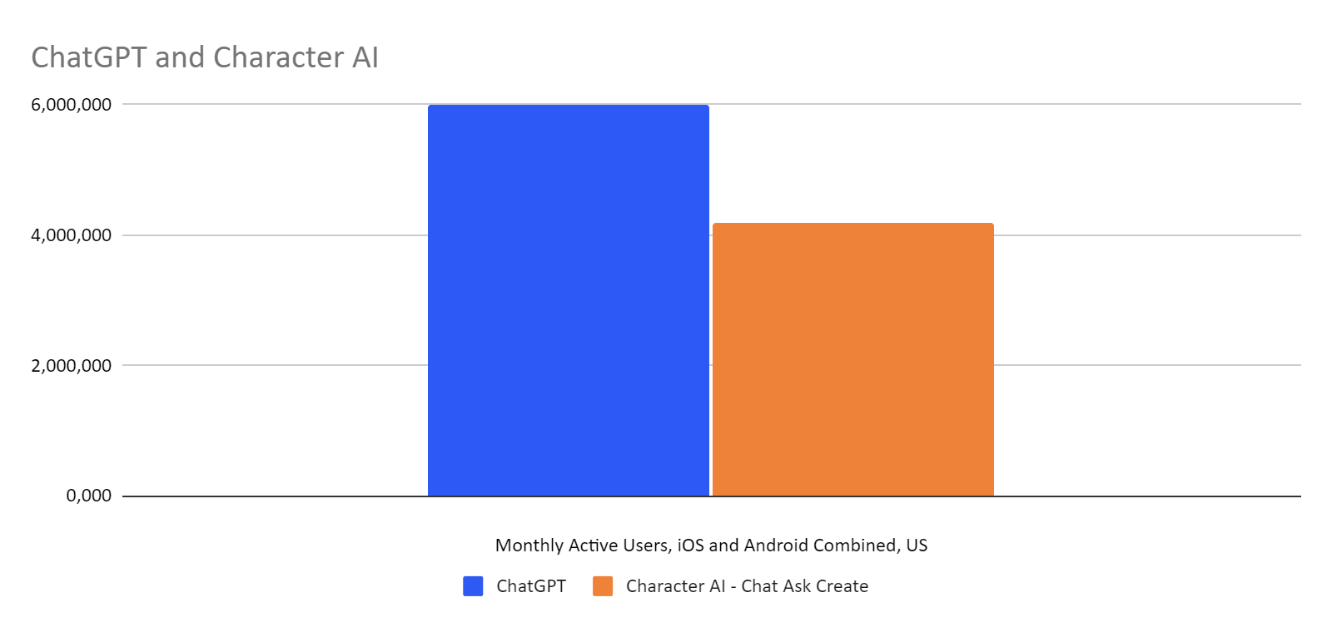

Coincidentally, Character.ai, which we talked about in one of the first Issues of Synthetic Work, is exploding in popularity and it’s getting closer and closer to ChatGPT in terms of monthly active users:

Now.

Why are we talking about all of this?

Because what happens in the personal lives of people influences how they behave in their professional lives and what they find acceptable or not.

If it becomes culturally acceptable to get an “AI makeover” on a dating app, it might become culturally acceptable to get an “AI makeover” on a professional networking app.

If it becomes culturally acceptable to get an “AI personal partner” on a dating app, it might become culturally acceptable to get an “AI professional partner” at work.

Not for you and me, perhaps, but for the generation of people who are growing up with these technologies and have yet to enter the workforce.

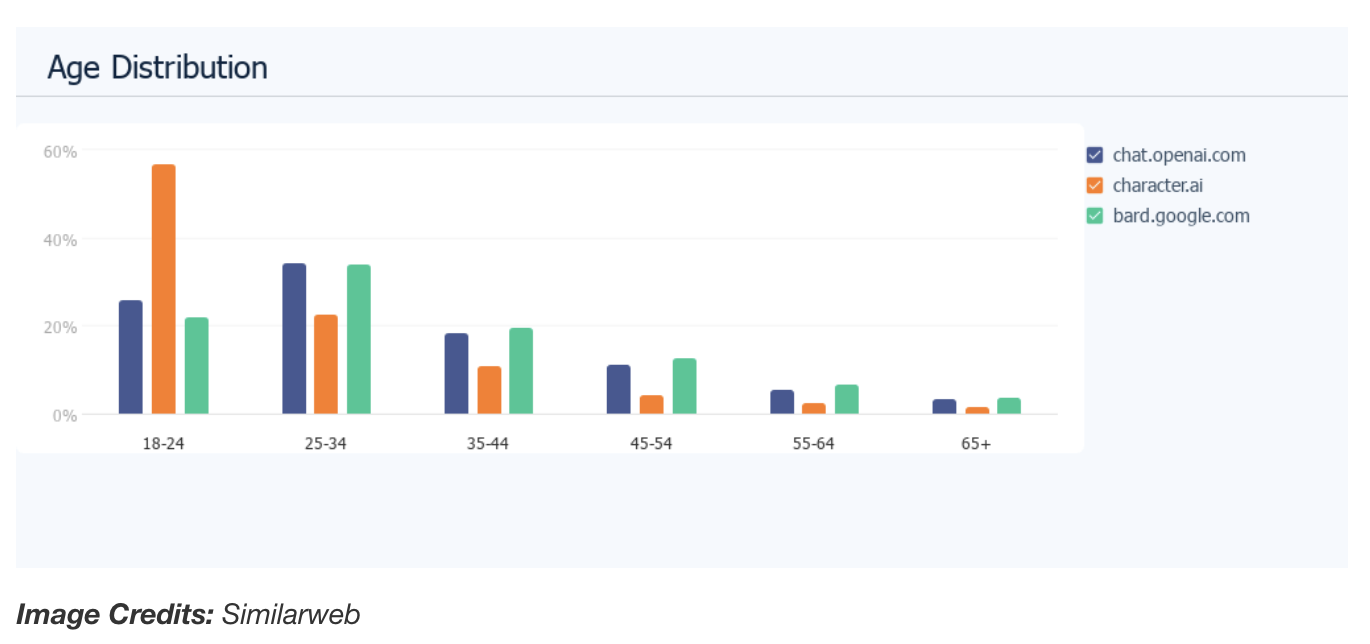

Look at the age distribution of Character.ai users:

We say “lipstick on a pig” in a pejorative way, but what if it becomes the social norm?

This week’s Splendid Edition is titled The AI That Whispered to Governments.

In it:

- Intro
  - If you were one of the most powerful tech companies in the world, and you were given the possibility to whisper in the ears of your government, could you resist the temptation of taking advantage of it?

- What’s AI Doing for Companies Like Mine?
  - Learn what Propak, JLL, and Activision are doing with AI.

- A Chart to Look Smart
  - The better the AI, the less recruiters pay attention to job applications.

- Prompting
  - Let’s take a look at a new technique to generate summaries dense with information with large language models. Does it really work?

- The Tools of the Trade
  - Is lipsynched video translation ready for industrial applications?