- Intro

- How much do governments really think about the impact of AI on jobs and careers?

- What Caught My Attention This Week

- Vinod Khosla articulates his optimistic vision about the future of work in an interview at the All-In Summit.

- The London School of Economics (LSE) and Google published an invaluable 90-page report on how newsrooms across the planet are using generative AI.

- Geoffrey Hinton, the godfather of Artificial Intelligence, talks about the risk of mass unemployment by AI in a new interview with 60 Minutes.

Last weekend, I visited a couple of friends for a barbecue. We have 24 degrees in London and, yes, it’s the second week of October. In fact, we plan to cancel the temporary amusement park called Winter Wonderland that gets installed in Hyde Park every year. This year, we’ll replace it with a more appropriate Tropical Pool Party amusement park.

At the BBQ, I met a top civil servant advising on welfare policies for a foreign government. Of course, we talked about the impact of AI on jobs and how governments should prepare for the future.

Even before we got into that conversation, this high-profile lawyer candidly admitted using GPT-4 in every aspect of his work: policy drafting, summarization, speech writing, and more.

Takeaway #1: Generative AI has reached the highest levels of governments all around the world, regardless of their official policy on AI. And that means that, directly or indirectly, governments can be influenced by how AI models are trained or constrained in their outputs by system prompts decided by AI providers.

In discussing the risk of mass unemployment, this person proposed the well-known position that, like every other technology in the past, some jobs will be displaced while others will be created.

Once we established that AI might be very different for all the reasons I’ve articulated in Issue #15 – Well, worst case, I’ll take a job as cowboy, we inevitably ended up discussing universal basic income (UBI).

Takeaway #2: Even within the government ecosystem, there might not be enough awareness of how little progress we have made in our UBI experiments, and how quickly we’ll have to act if we want UBI to become a possible solution for a hypothetical mass unemployment scenario.

We also talked about a hypothetical employment tax on AI providers that governments could enforce as an alternative or complementary measure to UBI.

The person I was talking to suggested that such a tax might hurt a growing economy, fearing that, rather than paying, companies like OpenAI might simply decide not to operate in that country. In his mind, there would be a scenario where AI providers would agree to pay the tax in wealthy countries, but not in poorer ones.

Takeaway #3: There are many ways in which artificial intelligence could create more inequality instead of reducing it. We might have to spend more time thinking about the short- and long-term ones.

Overall, the conversation was interesting and constructive, but it was clear that the assessment of future scenarios, as described by the various guests at the BBQ, was not driven by data like that which Synthetic Work collects, but by the natural attitude of the people.

Optimists tended to remain optimists about the future, even if they couldn’t articulate in what way the economy might benefit from a super intelligent AI model that does 80% of the human tasks for every job in almost every industry.

Pessimists tended to remain pessimists and couldn’t acknowledge the possibility that humans might rise to the challenge, just like we did during the COVID pandemic, and minimize the impact of AI on jobs even if today we look extremely unprepared.

Takeaway #4: We need to make the conversation about the future of work more data-driven and less opinion-driven. And before that, we need to have the conversation far more often than we do today.

Alessandro

The famed venture capitalist Vinod Khosla articulates his optimistic vision about the future of work in an interview at the All-In Summit.

Synthetic Work quoted Vinod’s thoughts on the subject, expressed on social media, multiple times. They are often pessimistic but, as it turns out, they are just half of his perspective.

From the transcript:

Q: There’s a lot of discussion about how jobs might be displaced because of AI. You’ve seen three or four of these amazing efficiency cycles. What’s your take on that? Because this one does feel qualitatively different, and it does seem to be moving at a faster pace. So, the speed at which AI is moving, and the leaps and bounds of how it’s making people augmented. You’re saying 80% AI 20% doctor…What do you think this does to jobs, and do you have those concerns of displacement that a lot of people seem to be concerned about?

A: I think it’s a genuine concern. Let me start with 20-25 years from now. I think the need to work will disappear. People will work because they want to work, not because they need to work. And nobody wants a job doing the same thing for 30 years on an assembly line at GM.

There’s a set of jobs nobody wants to do, that we shouldn’t really call jobs. They’re absolute drudgery, so hopefully those disappear.

…

There will be new jobs. What you call jobs or what people get paid for…I do think the nature of jobs will disappear.

Now, societies will have to transition from today to that vision of not needing to work if you don’t want to. It will be very messy, very political, very I would say controversial. And I think some countries will move much faster than others.

…

Let’s take something I’m very hawkish on: China. They will take a Tiananmen Square tactic to enforcing what they think is right for their society over a 25 years period. We will have a political process, which I prefer to the Chinese style.

Q: You like the debate over the tanks.

A: Well, debate always improves things, but it also does slow down, and certain societies may choose not to adapt these.

I think the societies that do that will fall behind, and I think that’s a massive disadvantage for our political system. We may choose not to do it. We’ve done it before. But we’ve also seen massive transitions before.

Agriculture was 50% of US employment in 1900. By 1970, it was 4%. So most jobs in that area, the biggest area of employment, got displaced. We did manage the transition well.

…

The one thing I would say is: capitalism is by permission of democracy, and it can be revoked…that permission. And I worry that this messy transition to the vision I’m very excited about may cause us to change systems.

I do think the nature of capitalism will have to change.

I’m a huge fan of capitalism. I’m a capitalist. But I do think the set of things you optimize for when economic efficiency isn’t the only criteria will have to be increased. Disparity is one that will have to be increased.

I first wrote a piece in 2014 in Forbes. It was a long piece that said AI will cause great abundance, great GDP growth, great productivity growth. All the things economists measure, and increasing income disparity. That was 2014. And I think I ended that, to close this answer off, with: we will have to consider UBI in some form. And I do think that will be a solution, but meaning for human beings will be the largest problem we face.

Q: If you give people UBI, do you have concerns of the idle mind and what happens? We’ve seen in other states that when 25% of young men, maybe, don’t have jobs their minds wander, and bad things happen. What do you think the societal issues will be if we do offer UBI?

A: I think we will have issues, but we will eliminate other issues. There’s pros and cons to everything. It’s very hard to predict how complex systems behave, and it’s very hard for me to sit here and say I have a clear vision of how that happens, but I for one am addicted to learning. I know other people addicted to producing music. All of those are reasonable things for people to find meaning in, or personal meaning.

…

Passions will emerge, and I think if people have very early in their life the ability to pursue passions, I hope that adds the meaning human beings will need. And in some sense that’s what I was talking about in 2000 when I said we’ll have to redefine what it means to be human.

Your assembly line job won’t define you for your whole life. That’d be a terrible thing.

How governments might react to a scenario where people work only if they want to is fascinating and barely discussed.

We don’t know what would happen in a society where people are free to spend the totality of their time on whatever they want without having to fight for survival.

Would the society implode due to the violence of individuals with too much free time on their hands?

Would it reach a golden age of productivity as every person is free to explore and find what they really enjoy doing?

Would people continue to live closely together in cities or spread out in more isolated communities?

Would education matter as much as it does today? And if so, would it focus on the same disciplines?

Would governments and religion have the same role as they have today in regulating and influencing the life of individuals?

The impact of AI on jobs, at the scale we focus on with Synthetic Work, is not just about mass unemployment vs. prosperity boom. It’s about the very fabric of our society and how we live together.

The whole interview is worth watching:

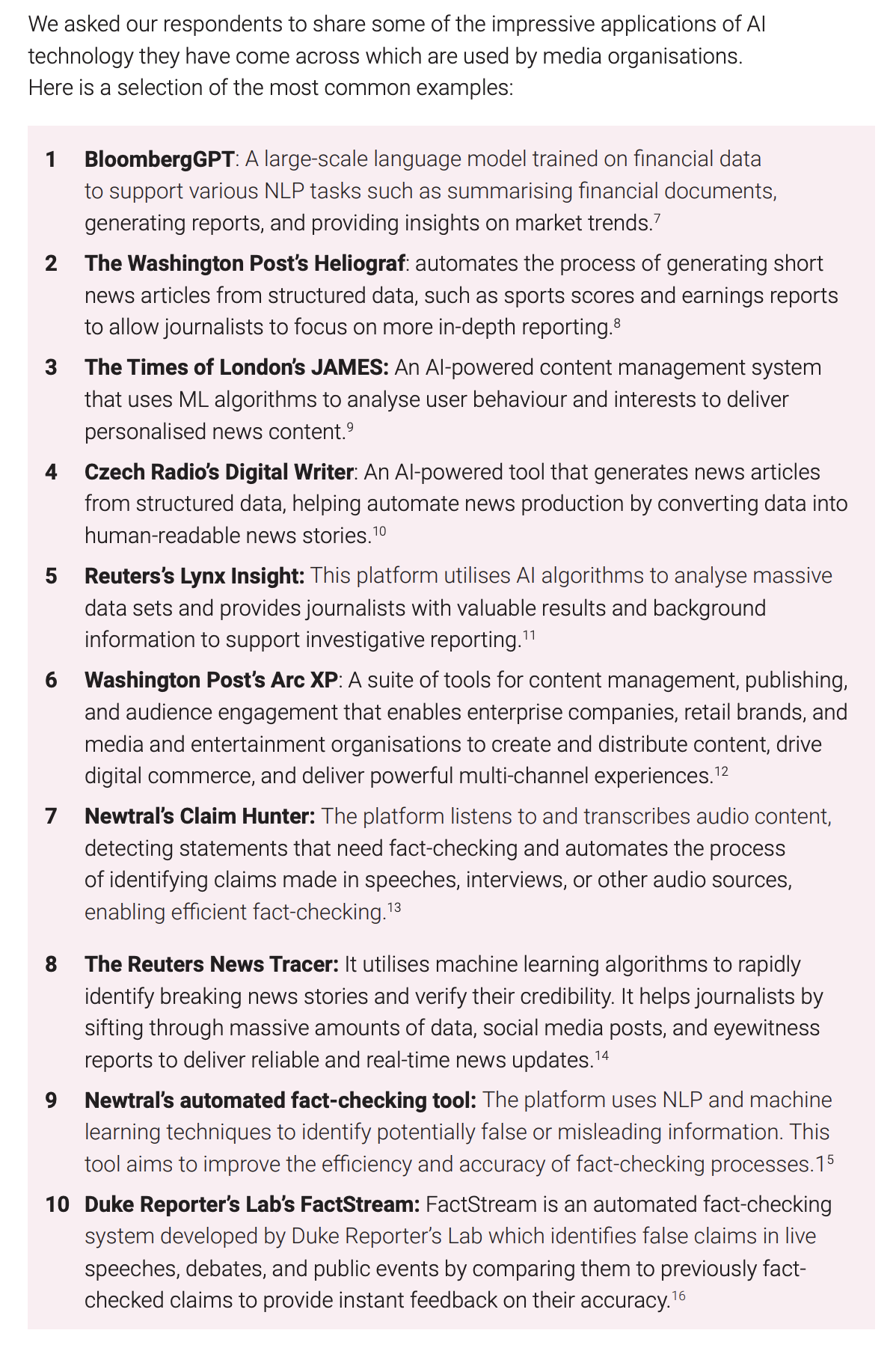

The London School of Economics (LSE) and Google published an invaluable 90-page report on how newsrooms across the planet are using generative AI.

The report is based on a survey conducted between April and July 2023, among 105 news and media organizations from 46 different countries, including broadcasters, newspapers, magazines, news agencies, publishing groups, and others.

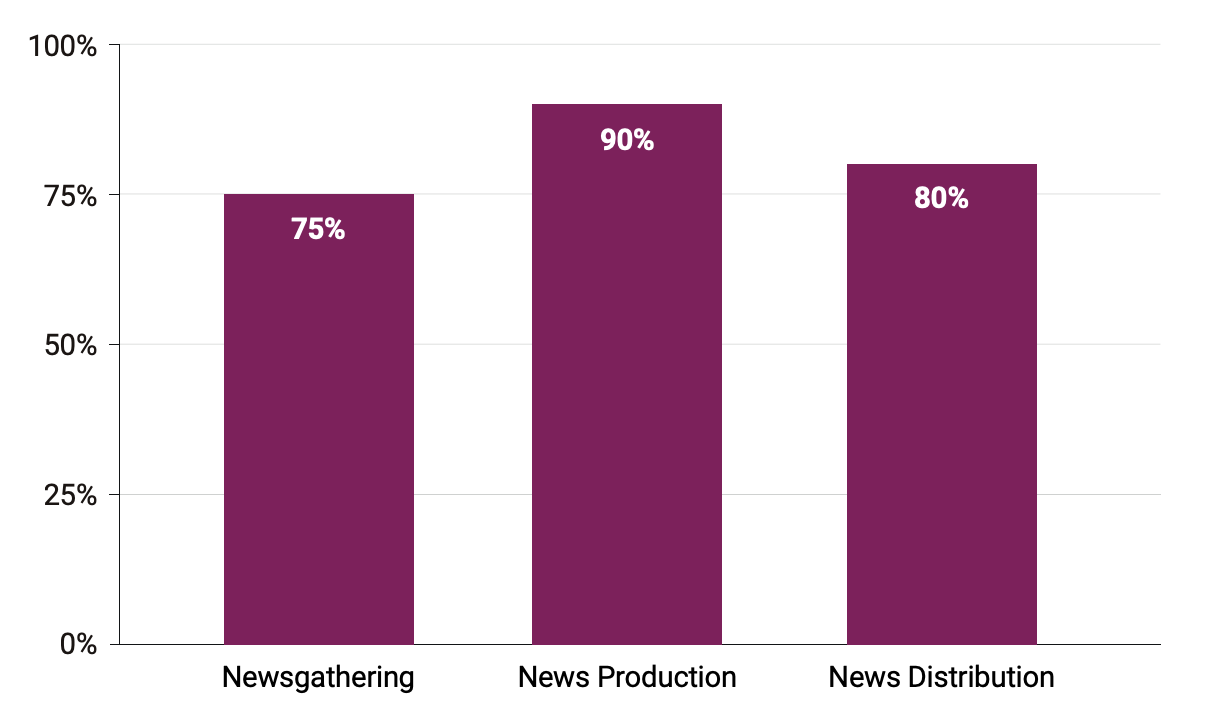

The work comes with many important charts about the AI impact on productivity. For example, how AI is being used across the surveyed organizations:

The insights are equally interesting:

fact-checking chatbots are leveraged in news production to validate or refute certain claims. At the same time, the data collected could assist in detecting misinformation trends and inspire a topic for a feature article, thus contributing to the newsgathering process.

…

a large majority, almost three quarters of organisations, use AI tools in newsgathering. The responses focused on two main areas:

- Optical character recognition (OCR), Speech-to-Text, and Text Extraction: Using AI tools to automate transcription, extract text from images, and structure data after gathering.

- Trend Detection and News Discovery: AI applications that can sift through large amounts of data and detect patterns, such as data mining.

The use of AI-powered tools for speech-to-text transcription and automated translation, such as Colibri.ai, SpeechText.ai, Otter.ai, and Whisper, was a widely cited area of use. They help streamline the production process and allow newsrooms to engage with content in different languages.

…

Several respondents mentioned using tools like Google Trends, web scraping, and data mining services like Dataminr and Rapidminer to identify trending topics, detect news of interest, and gather data from various sources to uncover stories.

…

In addition to text automation and trend detection, respondents provided various other uses of AI technologies that help streamline routine, daily processes, previously performed manually or through lengthy processes, such as data classification and content organisation. Examples from our respondents include tag generation, notification services, chatbots and language models that assist in automating responses and extracting data.

This is why Elon Musk claims that all mainstream media does is read the conversations happening on X and write about them the day after.

The irony of all of this is that the same newsrooms are actively blocking AI bots from OpenAI and others from accessing their content. For example, the BBC.

As you will read in this week’s Splendid Edition, the BBC is happy to use generative AI, but wants to be paid for having contributed to its training. Expect a similar demand from the rest of the Publishing industry in the months to come.

To recap: traditional news media wants to be paid by AI providers for scraping their content, while X wants to be paid by traditional news media for the very same reason.

As we said multiple times on Synthetic Work, the risk is that traditional newsrooms could become unnecessary middlemen and human journalists could be replaced by AI models fine-tuned as synthetic journalists. All an AI provider would have to do is pay social media networks for unrestricted access to the conversation happening between users, and automatically generate news articles about it.

Once today’s limitations in large language models are addressed (e.g., bias, hallucinations, language diversity), unlimited reach and automated production would allow the publishing of far more comprehensive news articles, tailored to appeal to the interest and political leaning of every single reader.

Human journalists cannot do that.

Let’s go back to the LSE report:

Around 90% said they used AI technologies in news production in a variety of ways, such as fact-checking and proofreading, using natural language processing (NLP) applications, trend analysis, and writing summaries and code using genAI technologies.

For instance, NLP applications are assisting with factual claim-checking. They identify claims and match them with previously fact-checked ones. Reverse-image search is also used in verification.

…

Newsrooms are already experimenting with and using genAI technologies like ChatGPT in content production tasks, including the production of summaries, headlines, visual storytelling, targeted newsletters and in assessing different data sources:

“Our CMS has a Watson-powered tagging engine. We’re working on a ChatGPT-powered headline suggestion tool, as well, but it’s in the early phases.”

“[We use] GPT-4 to create summaries and translation of articles written by journalists for use on various platforms. We are also experimenting with AI-generated images, headline alternatives, tagging articles, audio and video production.”

You have already seen all of this here on Synthetic Work. I showed you how I’m doing all of these things to power the Breaking AI News section of the website in Issue #26 – How to break the news, literally.

More from the LSE report:

Around 80% of respondents reported using AI technologies in news distribution, a slightly smaller percentage compared to production, but the range of use cases was the widest. Overall, the aim of using AI in distribution is to achieve higher audience reach and better engagement. News distribution was also the most frequently mentioned area impacted by AI-powered technologies in the newsroom, with 20% of respondents listing it as one of the areas most impacted by AI technologies.

…

We have a multi-layered set of rules for customising our content to individual news outlets, so it meets all of their internal rules for word-use from British or American spelling, to rules regarding biassed words, opinionated words, cliches, hyphenated words, and so on.

…

Recommender system for podcast episodes, using the EBU Peach engine.

…

We are using voicebots to convert our text stories to audio format.

We have done this, too: in Issue #28 – Use AI, Save 500 Lives Per Year, we tested the quality of state-of-the-art synthetic voices to create an audio version of Issue #28 – Can’t Kill a Ghost.

Let’s continue:

AI-powered social media distribution tools like Echobox and SocialFlow were mentioned by several respondents, who said they used them to optimise social media content scheduling.

Respondents also mentioned using chatbots to create more personalised experiences and achieve faster response rates:

“The WhatsApp chatbot is also used for news distribution, as users immediately receive a link to our debunk if we have already verified the content they sent.”

…

“We mostly make use of SEO to help increase the visibility of our stories on our website. We have found that human interest local stories tend to do better than stories about celebrities or other topics.”

“Ubersuggest helps me see which keywords are highly searched online, Google Discover shows me which stories and keywords are trending, CrowdTangle shows me which social media posts are over performing. This helps me create relevant news stories that people are interested in. Using SEO keywords that are searched often increases the likelihood of the stories reaching a higher number of people.”

The entire Publishing industry is transforming under our noses, and while we think what we read is still written by a human being, in many cases, it’s not anymore.

Thanks to AI, what we are reading online is being optimized for maximum engagement, in a vicious circle that will keep repurposing the same type of content over and over, so as to feed an addiction that nobody has an interest in curing.

Unsurprisingly, the only thing that is slowing down an even faster adoption is the typical dysfunction of large organizations:

“The biggest failure has been slow progression on already identified use cases because of organisational issues, lack of focus and resources.”

…

“For some of our third-party available machine learning offerings, we found that we didn’t have a strong onboarding process or clear explanations, so uptake has been slower than anticipated.”

And now, let’s get to the part that matters the most: the impact on people and processes.

“We saved more than 80% in the process of monitoring and searching for verifiable phrases … We are convinced that this field will have a more positive impact in the future.”

…

“AI optimises distribution onsite and on social media. While we are no longer scheduling all posts individually or curating every part of every homepage, that work has shifted. We are more easily able to think big-picture, and to change outcomes more swiftly by adjusting broader rules that may affect dozens of posts or positions on a page. Put another way, AI streamlines workflows, but it doesn’t replace work entirely. It changes the nature of the work and expands our impact.”

…

“Freeing up time for journalists to continue doing their job is the greatest impact achieved.”

…

“[It] will definitely have an impact in the future as AI takes over more of the mundane tasks of newsgathering.”

…

“Currently, the impact of AI is not yet significant and widespread, but it already emerges as an enabler, for sure”

…

“Right now, it does not have a significant impact. However, the impact may be quite significant if we embrace AI…This will free the journalists to work on other sectors, especially when they go in the field and remote areas to conduct very unique interviews and videos for very unique stories that our audiences are interested in.”

It sounds great. Possibly, it’s great. But did they ask the journalists?

You see, in this particular situation, journalists are between a rock and a hard place: they cannot speak against the adoption of generative AI by their employer for fear of retribution until they leave the news organization. But once they leave the news organization, they lose the platform that allows them to speak up and reach a wide audience.

So, if there’s a growing fear about how far this is going, we won’t hear about it until it’s very late.

As usual, it’s possible that the fear is unfounded. What jobs is AI creating, or expected to create, in news organizations?

Responses to whether AI technologies impacted existing roles in newsrooms followed a similar pattern; around 60% said AI integration has not done so. Many, however, expect this to change in the future:

“Not yet, but we are working on new vacancies for AI that include Prompt engineers, AI and ML engineers, and data scientists.”

…

“Not yet because it’s a transition still in early stages. AI is augmenting rather than totally changing roles.”

…

“I could see us creating more AI-specific roles in the future, likely as news technologists who work closely with journalists.”

…

“AP’s NLG of earnings reports nearly a decade ago liberated our reporters from the grind of churning out rote earnings updates and freed them up to do more meaningful journalism. More recently AP has created three new roles that focus on AI across news operations and products.”

…

“Yes, we have created at least one new role focused on managing AI experiences, and expect we may have more, but the growth is slow and deliberate. As much as we can, we are leveraging the talent we have already. For instance: Our real estate and development editors lead our real estate AI content creation. One of our leading digital audience producers is overseeing our social media optimisation.”

…

“Yes there was a need to allocate a dedicated data analyst within the team.”

…

Overall, even though the adoption of AI-powered technologies has not always resulted in the development of new, AI-specific roles, it has prompted the evolution of current roles and the acquisition of new skills by the staff in order to effectively use AI technologies in their journalistic endeavours.

…

“We convinced our IT department that while prompt engineering does require a certain technological understanding, IT staff are not equipped to assess the result when it comes to journalistic production. On the other hand, designing a successful prompt, “getting the machine to tell what I want it to tell”, has some similarities with journalistic processes. We are already training a journalist in prompt design.”

…

“Yes, there are new AI-specific roles. The digital team helps us monitor trends, but as the digital editor, I do that too. Newsgathering and distribution have also changed. I check trends and write content based on that.”

…

“Yes, the journalist had to train the algorithms and, for doing so, they received training about how the algorithm works, what kind of data do we need and how to gain accuracy. On the other side, the journalistic team have shared the editorial criteria that guide their decisions to the engineers team, by providing keys on why and what is considered a factual claim.”

These quotes suggest that the only new roles envisioned in the future will be in the AI field itself. Not in journalism.

This is a scenario we often consider in Synthetic Work: traditional jobs displaced by AI could be replaced by technical jobs about AI.

While we wait for the utopia of a world where nobody has to walk thanks to the cars, everybody might be asked to become a car mechanic.

Only as long as the cars don’t start fixing themselves. Of course.

Geoffrey Hinton, the godfather of Artificial Intelligence, talks about the risk of mass unemployment by AI in a new interview with 60 Minutes.

Now that he has left Google, he’s free to be clearer and more blunt about the future he sees.

From the official transcription:

Scott Pelley: Does humanity know what it’s doing?

Geoffrey Hinton: No. I think we’re moving into a period when for the first time ever we may have things more intelligent than us.

Scott Pelley: You believe they can understand?

Geoffrey Hinton: Yes.

Scott Pelley: You believe they are intelligent?

Geoffrey Hinton: Yes.

Scott Pelley: You believe these systems have experiences of their own and can make decisions based on those experiences?

Geoffrey Hinton: In the same sense as people do, yes.

Scott Pelley: Are they conscious?

Geoffrey Hinton: I think they probably don’t have much self-awareness at present. So, in that sense, I don’t think they’re conscious.

Scott Pelley: Will they have self-awareness, consciousness?

Geoffrey Hinton: Oh, yes.

…

Scott Pelley: What do you mean we don’t know exactly how it works? It was designed by people.

Geoffrey Hinton: No, it wasn’t. What we did was we designed the learning algorithm. That’s a bit like designing the principle of evolution. But when this learning algorithm then interacts with data, it produces complicated neural networks that are good at doing things. But we don’t really understand exactly how they do those things.

…

Scott Pelley: The risks are what?

Geoffrey Hinton: Well, the risks are having a whole class of people who are unemployed and not valued much because what they– what they used to do is now done by machines.

A word of caution to the readers who really don’t like the doom and gloom news and, being optimistic by nature, prefer to read only positive takes. If you are rolling your eyes right now, please remember that there’s an information asymmetry between us and Hinton: he sees things that most people on the planet don’t get to see until months or years later.

This is a man who staked his professional career, and his lifetime, on a contrarian bet, based on an information asymmetry. A man who’s been happy to wait 50 years to be proven right.

It doesn’t mean he’ll be right again. It just means that when he speaks, it’s probably a good idea to listen with an open mind.

The full interview is here:

This week’s Splendid Edition is titled You’re not blind anymore.

In it:

- What’s AI Doing for Companies Like Mine?

- Learn what BBC, The Washington Post, and Thomson Reuters are doing with AI.

- A Chart to Look Smart

- What can we do by training an AI with the lifetime of the most intelligent person on Earth, educated to be a business/science/engineering/etc. leader, and recorded as he/she goes through life and is successful?

- What Can AI Do for Me?

- Here’s how the new GPT-4V might transform customer support.

- The Tools of the Trade

- Finally, a tool I can recommend to organize ChatGPT chats.