- Intro

- Synthetic Work reaches an important milestone.

- What Caught My Attention This Week

- Creating synthetic advisors vs synthetic analysts, and the legality of AI-led corporations.

- Goldman Sachs lifts US GDP forecast to account for the impact of generative AI.

- Can AI threaten the job of the field researcher? Can it at least decode animal language?

I think it’s time to celebrate, as we have reached a milestone: 100.

A hundred early adopters across the world are now listed in Synthetic Work’s AI Adoption Tracker.

Of course, there are far more than a hundred early adopters around the world. But these are confirmed, verifiable, and researchable use cases, not the handwaving of overexcited (and not necessarily honest) technology vendors.

It’s a tremendous research tool, one I wish I’d had when I was an analyst at Gartner, a lifetime ago.

I expect to give many presentations to customers about these 100 adopters, as more and more organizations look for guidance and inspiration to adopt artificial intelligence.

I’ll start in Italy this December, where I’ve been invited to speak at two different events: a nationwide gathering organized by an agency of the Ministry of Health, where I’ll give a talk about the impact of AI on the Health Care industry, and a corporate AI hackathon organized by a company in the Professional Services industry for all its employees.

On top of this, Synthetic Work’s archive now contains 72 issues of this newsletter, with hundreds of pages of content, including case studies on how to apply AI to solve real-world problems, prompting techniques, product reviews, editorials, linked articles, videos, etc.

If you believe that generative AI will have a deep impact on human life, reading the archive is a bit like watching history unfold in front of your eyes. In fact, at least one of our members prints the archive and keeps it, for posterity.

I’m not joking. It’s very flattering.

New Sage members (one of our membership tiers, which unlocks access to the Splendid Edition of this newsletter and access to the archive) often tell me they spend weeks reading through the archive, to be sure they have the most complete understanding of how the impact of AI on work has evolved since we started.

Some start from Issue #0 and move forward, while others start from the most recent issue and move backward. It’s pleasing to see them discussing the best strategy in our Discord server (another perk of the Explorer and Sage memberships).

To the next 100.

Alessandro

Over the last couple of weeks, I have talked a lot about a not-too-distant future where multiple versions of GPT-4 (or what will come next) can be fine-tuned to become world-class experts in a specific field, and then assembled together in what I call an “AI council”, acting as a synthetic board of advisors for a company’s business leaders.

I showed you how this can be done today with the technology we already have in Issue #31 – The AI Council.
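To make the “AI council” idea a bit more concrete, here is a minimal sketch in Python. It is not the setup from Issue #31: it assumes a hypothetical `ask(system_prompt, question)` function standing in for whatever chat model you use, and the member names, fields, and prompt wording are illustrative. Each member gets a system prompt that frames it as an expert offering its own perspective.

```python
from typing import Callable, Dict

# Hypothetical signature for a chat-model call: (system_prompt, question) -> answer.
AskFn = Callable[[str, str], str]


def build_system_prompt(expertise: str) -> str:
    """Frame one council member as an advisor with its own point of view."""
    return (
        f"You are a world-class expert in {expertise}, sitting on the advisory board "
        "of a company. Offer your own perspective, challenge assumptions, and "
        "propose alternative approaches."
    )


def convene_council(question: str, members: Dict[str, str], ask: AskFn) -> Dict[str, str]:
    """Ask every council member the same question and collect the answers by name."""
    return {
        name: ask(build_system_prompt(expertise), question)
        for name, expertise in members.items()
    }


def fake_ask(system_prompt: str, question: str) -> str:
    """Offline stand-in for a real model: echoes the expertise it was given."""
    expertise = system_prompt.split("expert in ")[1].split(",")[0]
    return f"[{expertise}] view on: {question}"


council = {"CFO": "corporate finance", "CMO": "brand marketing"}
answers = convene_council("Should we enter the Japanese market?", council, fake_ask)
for name, answer in answers.items():
    print(f"{name}: {answer}")
```

In a real council, `fake_ask` would be replaced by a call to a model (ideally one fine-tuned on each member’s field), and the value comes from comparing the divergent answers rather than from any single one.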

I also briefly talked about the future evolution of this approach, and how it could be our biggest invention to help entrepreneurs around the globe, in the video below:

Weeks after all of this, Harvard Business Review published an article titled How AI Can Help Leaders Make Better Decisions Under Pressure, which promotes similar ideas. I think it’s worth reading.

Mark Purdy, Managing Director of Purdy & Associates, and A. Mark Williams, Senior Research Scientist at the Institute of Human and Machine Cognition:

According to research by Oracle and Seth Stephens-Davidowitz, 85% of business leaders have experienced decision stress, and three-quarters have seen the daily volume of decisions they need to make increase tenfold over the last three years.

Poor decision making is estimated to cost firms on average at least 3% of profits, which for a $5 billion company amounts to a loss of around $150 million each year. The costs of poor decision making are not just financial, however — a delayed shipment to an important supplier, a failure in IT systems, or a single poorly managed interaction with an unhappy customer on social media can all quickly spiral out of control and inflict significant reputational and regulatory costs on firms.

…

AI-powered technologies can drive faster and better decision making in at least three main ways: real-time tracking and improved prediction of on-the-ground business developments, virtual role-play to train workers in life-like business scenarios, and emerging generative AI tools that can answer questions and act as advisors and virtual “sounding boards” for decision makers.

While our interest today is focused on the third use case, we dedicated Issue #18 – How to roll with the punches to a variant of the second use case: prompting GPT-4 to act as a harsh critic, to prepare you for corporate meetings where your ideas will invariably be challenged.

You can easily adapt that tutorial to match the second use case described in this article.

Let’s continue:

We interviewed Konstantinos Mitsopoulos, a research scientist at the Institute for Human and Machine Cognition (IHMC) in Florida, who told us:

“In principle, generative AI systems can help overcome some of the problems affecting human decision making, such as limited working memory, short attention spans, and decision fatigue, especially when it comes to making decisions under pressure. Generative AI tools can potentially help decision makers save time, conserve energy, and free up time to focus on the issues or questions that matter most.”

In health care, for example, AI systems can limit the cognitive load on clinicians by automatically sifting and synthesizing key data needed for effective decision making, reducing volumes of unnecessary medication alerts, and automatically triggering patient follow-up actions and communications. Many other applications are possible, from business continuity and crisis response management, to assessing risk in different types of financial investments.

An emerging application of generative AI is the development of decision “co-pilots” that can assess information in dynamic situations, suggest options and next-best steps, and complete tasks. Fusion Risk Management, a Chicago-based company providing software for managing operational risk, is developing a generative AI-powered assistant called Resilience Copilot that uses AI to sift large volumes of operational risk data, identify the relevant elements for decision makers, and generate executive summaries, instant insights, intelligent recommendations, and best-practice improvements.

…

Generative AI is also being used to help organizations with reputation management — for example, through “social listening” tools that help marketing and social media managers track online feedback and reviews in real time and make effective decisions about how to respond. Reputation, a reputation management software company based in California, provides real-time monitoring of firm’s online reviews across multiple social media channels, with real-time alerts to signal negative sentiment scores, monitoring of crisis events, and prescriptive recommendations for social media managers dealing with negative comments.

Now, we must be careful here. While the authors of this article place these examples in the advisory bucket, these solutions are not quite the same thing as a synthetic advisor, and they don’t behave in the way I explained and demonstrated above.

There’s a fundamental difference between an advisor and an analyst:

- The advisor exists to provide a different perspective, rooted in knowledge, experience, or both, and to elicit new ideas or different approaches to problem-solving.

- The analyst is there to distill large quantities of data and connect dots that would otherwise stay invisible, making it easier to identify what could be done next.

The AI systems quoted in the HBR article so far are analysts, not advisors. That won’t be enough, and it won’t be as transformative as a synthetic advisor.

Let’s continue:

One of the biggest potential applications of generative AI is in the checking and testing of ideas, providing a kind of virtual sounding board. We interviewed Matt Johnson, a senior scientist at The Institute for Human & Machine Cognition (IHMC) and former U.S. Navy pilot, who observed:

If used properly, generative AI could function as a really good teammate, in the same way that I might want to talk through a problem with my colleagues even though I think I already have the solution. It also potentially has a long organizational memory, which is useful for people who may be relatively new to an organization and want to find out how issues were handled previously.

In fact, the “creative side” of generative AI is likely to become even more important to decision makers in many different fields and industries in the future. One reason is its ability to create large volumes of “synthetic data” that can mimic the probabilistic structure of real-world processes and events, often from very small samples. Synthetic data can be used to create decision-making models and scenarios for high-impact events that occur infrequently — for example, very sophisticated frauds in insurance that may be missed by human reviewers. Generative AI can also create privacy-compliant versions of very large customer data sets, which can then be safely shared for both AI and human-led decision making. Mostly AI, an Austrian AI company founded in 2017, has used synthetic data models for better decision making in areas as diverse as health care analytics, digital banking, insurance pricing, and HR analytics.

This is a little more in the direction I’m suggesting the world should go. But it’s still not quite there.

A last quote will, hopefully, lead us there:

Pay attention to the experience curve

Research indicates that the skills and experience profiles of workers — whether they are experts or novices, or somewhere in between — makes a big difference in how they interact with AI technology and the expected impacts. In general, experts tend to rely heavily on experience and intuition, using machines to sense check or suggest alternative options. Experts can also be less proficient with new technology if they have been in a field a long time. Novices can use AI to “learn the ropes” and get faster exposure to a range of different scenarios, but need real-world practice to prevent over-reliance on machines. Skill levels, experience, organizational knowledge, and proficiency with technology are all factors that business leaders will need to carefully calibrate in designing AI skills strategies and applications.

This is the main driver for the evolution of synthetic advisors as I describe in the YouTube video above: we want to get to a point where we can encode the experience of the best experts in the world, and offer that experience as a service to anyone who needs it.

This is one of the most important areas of development for generative AI I can think of, and one of the most impactful on the way humans work today.

Why?

For two reasons:

On one side, this technology could be used to help entrepreneurs and small business owners across the globe make better decisions.

Very few are lucky enough to establish their business in a superpower country, and even fewer can count on the mentorship of a Vinod Khosla, an Eric Topol, a Seth Godin, or a Warren Buffett.

Imagine if we could offer the knowledge and experience of our brightest minds to anyone who needs it. How many businesses would thrive and expand, creating more jobs and increasing the world’s GDP?

On the other side, really good synthetic advisors might start replacing much of the executive leadership in public and private companies.

I started talking about this topic in May, in this infamous LinkedIn post:

One thing that I want to make clear: AI is coming for people in the top management positions of large organizations, too.

When we talk about the impact of AI on jobs or, more specifically, potential job displacement, we normally think about the bottom of the corporate hierarchy, up to middle management. Allow me to suggest a broader perspective.

Just like we are seeing celebrities and influencers (search for Caryn Marjorie if you must) starting to clone themselves and “rent” their clones for various types of jobs and activities, you should expect that the top C-level executives and the VPs on the planet will eventually do the same.

Why? Because in this way they can serve multiple companies at the same time, earning untold multiples of what they do today, at a fraction of the effort. And because the market would want them.

If you think that this is an unrealistic scenario, think about this:

Imagine GPT-6, three years from now (assuming a tick-tock pace), while keeping in mind the exponential growth in capabilities and sophistication we have seen in just 6 months. If you haven’t used GPT-4 *extensively*, I highly encourage you to do so.

Imagine that AI with a huge short-term memory: today we saw Anthropic announce a 100K-token context window for its model Claude. Academic research has already shown the capability to achieve 1-2M tokens of context memory.

How far will we be in 3 years? That sort of memory would give the AI the capability to coherently pose as any persona it has been trained to mimic.

Imagine high-fidelity, ultra-realistic synthetic voices, considering how far we’ve already gone with Play HT and ElevenLabs.

Imagine perfect lipsync. We’ve seen all sorts of increasingly realistic proofs of concept thanks to techniques like wav2lip and PCAVS. More are coming.

You can count on all of this (and much more, but I don’t want to make this post technical).

Now imagine that a beloved and highly respected CEO like Disney’s Bob Iger decides to clone himself before retiring, so that he can serve multiple smaller companies during his retirement years. That’s how I imagine it would start.

Think about the board of one of those smaller companies. They can be established players or startups. All of a sudden, they have the opportunity to choose between the AI of Bob Iger or another, less capable, in-the-flesh CEO.

Who do you think they would pick for the same salary?

Of course, this scenario is predicated on the assumption that the AI is flawless in *replicating* the CEO’s perspective, decision flows, etc.

It’s not AGI I’m talking about. Just a much more sophisticated generative AI. Not a small feat, but can you confidently say we can’t do it (don’t assume that in 3 years we’ll keep using the AI techniques we use today)?

And if it is, what exactly would stop those companies from doing the same with their CFO, their CMO, their CIO and, in cascade, all their VPs?

What if their competitors are doing it?

If you think that the executive leadership doesn’t risk being replaced by AI just like any other job category, here’s a novel data point that emerged in the six months since I wrote the post quoted above: legal experts have started thinking about how existing laws might apply to AI-led companies.

Daniel Gervais of Vanderbilt Law School and John Nay of The Center for Legal Informatics at Stanford University published a paper in Science titled Artificial intelligence and interspecific law.

From the abstract:

For the first time, nonhuman entities that are not directed by humans may enter the legal system as a new “species” of legal subjects. This possibility of an “interspecific” legal system provides an opportunity to consider how AI might be built and governed. We argue that the legal system may be more ready for AI agents than many believe. Rather than attempt to ban development of powerful AI, wrapping of AI in legal form could reduce undesired AI behavior by defining targets for legal action and by providing a research agenda to improve AI governance, by embedding law into AI agents, and by training AI compliance agents.

There is a growing body of research exploring how to use existing legislation to align AI behavior in ways that natural-language instructions and code seem to struggle with.

These legal professionals are not solely concerned with aligning the one AI that will rule us all, what we call AGI.

They are interested in constraining the behavior of any AI that they see as capable of leading a for-profit organization.

Further to this topic, Susan Watson, Professor of Law at the University of Auckland Business School, just published a paper titled Corporations as Artificial Persons in the AI Age.

From the research:

Boards currently make decisions for corporations by controlling the corporate fund held in the artificial legal person. Soon it will be possible for AI to make decisions for corporate entities.

Algorithms will set the parameters for those decisions. AI will, therefore, have the potential to replace boards of natural persons. Florian Möslein distinguishes between three forms of artificial intelligence: assisted intelligence and augmented intelligence (which are used to support human directors) and autonomous artificial intelligence (which is used as a replacement for human directors).

Referring to assisted/augmented intelligence, Möslein notes that directors have the authority to delegate tasks to AI, but ultimately must always maintain core management function and must supervise the outcome of delegated tasks. This supervision requires directors to have a basic understanding of how these autonomous systems operate.

The legal appointment of autonomous AI in the boardroom, by contrast, would require changes to the rules surrounding appointment of directors. Legal strategies to govern autonomous AI will focus on ex ante control of algorithms rather than ex post control of behaviour as robo-directors can be made to comply with specific legal rules.

In ‘Corporate Management in the Age of AI’, Martin Petrin comments on the growing role of AI in corporate management with emphasis on the change it will enact on corporate leadership by gradually replacing the human board of directors. This will lead to ‘fused boards’ in which all the roles of the collective human directors, officers and managers are incorporated into a comprehensive single algorithm which manages the company.

There is disagreement as to whether AI will be able to take over both the administrative and judgement tasks involved in human corporate management, with the latter requiring more complex creative, analytical and emotional capacity, though the conclusion is that eventually AI will be able to fully replace human directors and managers.

This transition will require legal reform of board composition to allow business to incorporate AI in the boardroom. It will also cause changes in liability, such as the artificial entities themselves having the capacity to be sued as well as developers and providers of AI management software.

Allow me to emphasize this passage: “the conclusion is that eventually AI will be able to fully replace human directors and managers.”

Let’s remain focused on the potential for AI to lift the world’s GDP: Goldman Sachs raised its forecast, at least for the US.

Chris Anstey, reporting for Bloomberg:

In the US, which Goldman sees as the market leader for adoption, AI is set to add a 0.1 percentage point bump to gross domestic product gains in 2027, accelerating to a 0.4 percentage point boost in 2034. The AI impact raises Goldman’s US GDP forecast to a 2% expansion rate in 2027 and to 2.3% by 2034, projections show.

It will take time for companies to adopt AI, so the impact isn’t likely to show up for many years, the Goldman team said.

“While considerable uncertainty remains about the timing and magnitude of AI’s effects, our baseline expectation is that generative AI will affect productivity” in time, Goldman economists led by Jan Hatzius wrote in a note Sunday.

The euro area would see a 0.1 percentage-point bump starting in 2028, with the lift increasing to 0.3 percentage points by 2034 — when Goldman sees the region expanding at a 1.4% pace.

…

China would see more modest gains, reaching a 0.2 percentage point lift by 2034, raising its expansion rate to 3.2%. Japan will see a 0.3 percentage point lift by 2033, taking its GDP growth to 0.9%, Goldman’s forecasts show.
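The quoted figures also let you back out the growth rates Goldman implicitly expects without AI, which is a useful first look at the assumptions behind the forecast. A minimal sketch, assuming the AI lift is simply added to a no-AI baseline (that is how the report phrases it, but not necessarily how Goldman’s model works internally):

```python
from typing import Dict, Tuple

# (forecast growth with AI, AI lift), in percent, as quoted in the Bloomberg report.
QUOTED: Dict[Tuple[str, int], Tuple[float, float]] = {
    ("US", 2027): (2.0, 0.1),
    ("US", 2034): (2.3, 0.4),
    ("Euro area", 2034): (1.4, 0.3),
    ("China", 2034): (3.2, 0.2),
    ("Japan", 2033): (0.9, 0.3),
}


def implied_baseline(forecast: float, lift: float) -> float:
    """Growth rate implied without AI, assuming the AI lift is purely additive."""
    return round(forecast - lift, 2)  # round away floating-point noise


baselines = {key: implied_baseline(*values) for key, values in QUOTED.items()}
for (region, year), base in baselines.items():
    with_ai = QUOTED[(region, year)][0]
    print(f"{region} {year}: {base:.1f}% without AI, {with_ai:.1f}% with AI")
```

Read this way, the underlying view of US growth is about 1.9% a year in both 2027 and 2034, and the AI lift is what moves the headline number.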

Now, an analyst’s ability to forecast the future (I was one, a long time ago) is limited by their understanding of the present and the past, not of the future.

No human is capable of predicting the future and, in general, as a species, we are pretty bad at it.

Generative AI is a case in point: a month before DALL-E 2 and ChatGPT were introduced, nobody would have predicted the ongoing transformation of our workplace.

So, when an analyst makes a prediction, you should always picture in your mind a disclaimer to accompany it: “At current course and speed.”

Most people ignore this fact, and analyst firms are not too keen to remind their customers of it.

On top of this, the analyst has to be extremely good at understanding the potential and challenges of the present. That much is obvious; what is less obvious is that some analysts are better at understanding the short-term impact of the present, while others are better at understanding its long-term impact.

Even when an outcome is certain, humans are, in general, better at understanding its short-term consequences. This tells you that, even with the disclaimer above, the longer-term a prediction, the more likely it is to be wrong.
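A back-of-the-envelope way to see why the horizon matters, under a deliberately simple assumption that is not Goldman’s model: suppose each year’s growth forecast misses by an independent error $\varepsilon_t$ with the same standard deviation $\sigma$. Summing yearly rates approximates cumulative growth when rates are small, so the error on cumulative growth over $n$ years adds up like this:

$$
\operatorname{Var}\left(\sum_{t=1}^{n} \varepsilon_t\right) = \sum_{t=1}^{n} \operatorname{Var}(\varepsilon_t) = n\sigma^2
\quad\Longrightarrow\quad
\operatorname{sd}\left(\sum_{t=1}^{n} \varepsilon_t\right) = \sigma\sqrt{n}
$$

Under this assumption, a forecast nine years out carries three times ($\sqrt{9}$) the spread of a one-year forecast, and that is the optimistic case: positively correlated errors make it grow faster than $\sqrt{n}$, and a discontinuity like the arrival of ChatGPT falls outside the model entirely.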

In this particular example, did the Goldman Sachs analysts consider the impact of synthetic advisors and AI-driven companies on the US GDP forecast?

If we decide to believe a forecast, it’s worth asking what assumptions went into it.

Can AI threaten the job of the field researcher?

Not until robotics reaches a level of sophistication that matches that of generative AI, and by the time that happens, we’ll have much bigger welfare concerns than preserving the jobs of field researchers.

In the meantime, generative AI is now being used to support field researchers.

In an article without a byline (a concerning sign that it might have been generated by an AI model), The Economist writes:

The rainforests are alive with the sound of animals. Besides the pleasure of the din, it is also useful to ecologists. If you want to measure the biodiversity of a piece of land, listening out for animal calls is much easier than grubbing about in the undergrowth looking for tracks or spoor. But such “bioacoustic analysis” is still time-consuming, and it requires an expert pair of ears.

In a paper published on October 17th in Nature Communications, a group of researchers led by Jörg Müller, an ecologist at the University of Würzburg, describe a better way: have a computer do the job.

…

The researchers took recordings from across 43 sites in the Ecuadorean rainforest. Some sites were relatively pristine, old-growth forest. Others were areas that had recently been cleared for pasture or cacao planting. And some had been cleared but then abandoned, allowing the forest to regrow.

Sound recordings were taken four times every hour, over two weeks. The various calls were identified manually by an expert, and then used to construct a list of the species present. As expected, the longer the land had been free from agricultural activity, the greater the biodiversity it hosted.

Then it was the computer’s turn. The researchers fed their recordings to artificial-intelligence models that had been trained, using sound samples from elsewhere in Ecuador, to identify 75 bird species from their calls. “We found that the AI tools could identify the sounds as well as the experts,” says Dr Müller.

…

The results may have relevance outside ecology departments, too. Under pressure from their customers, firms such as L’Oreal, a make-up company, and Shell, an oil firm, have been spending money on forest restoration projects around the world. Dr Müller hopes that an automated approach to checking on the results could help monitor such efforts, and give a standardised way to measure whether they are working as well as their sponsors say.

If you are interested in the actual paper, it’s here: Soundscapes and deep learning enable tracking biodiversity recovery in tropical forests.

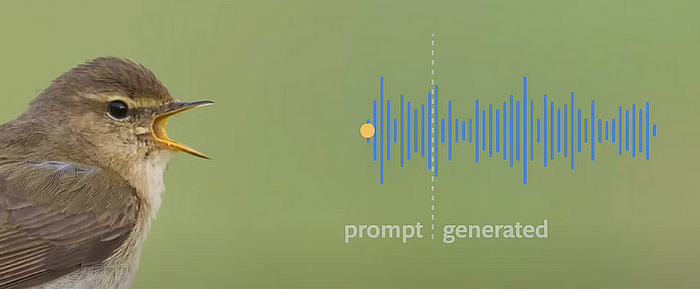
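The comparison described above boils down to two steps: turn detections into a list of species per site, then check how closely the model’s lists match the expert’s. Scale is why automation matters: at four recordings an hour for two weeks, and assuming round-the-clock sampling, each site yields up to 4 × 24 × 14 = 1,344 recordings. Here is a minimal sketch of the bookkeeping, using hypothetical site and species names and a simple Jaccard overlap as the agreement measure (an assumption; the paper’s own statistics may differ):

```python
from typing import Dict, Set

SpeciesLists = Dict[str, Set[str]]


def richness(lists: SpeciesLists) -> Dict[str, int]:
    """Species richness: the number of distinct species detected at each site."""
    return {site: len(species) for site, species in lists.items()}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two species lists: 1.0 means identical, 0.0 means disjoint."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical placeholder data, not taken from the paper.
expert: SpeciesLists = {
    "old_growth": {"species_a", "species_b", "species_c", "species_d"},
    "regrowth": {"species_a", "species_b", "species_c"},
    "pasture": {"species_a"},
}
model: SpeciesLists = {
    "old_growth": {"species_a", "species_b", "species_c", "species_e"},
    "regrowth": {"species_a", "species_b", "species_c"},
    "pasture": {"species_a"},
}

expert_richness, model_richness = richness(expert), richness(model)
for site in expert:
    print(
        f"{site}: expert={expert_richness[site]} model={model_richness[site]} "
        f"overlap={jaccard(expert[site], model[site]):.2f}"
    )
```

Note that two lists can have the same richness while disagreeing on which species are present, as at the hypothetical old-growth site above, which is why the overlap is worth checking alongside the count.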

Loosely connected to this is the news that we’ve started using transformer models, like the ones that power ChatGPT, to manipulate animal language in various ways.

I publicly suggested we might go in this direction six years ago:

Is anybody using latest #AI / #ML algorithms to try and understand animal language (e.g., dolphins)?

— Alessandro Perilli 🇺🇦 (@giano) December 7, 2017

I hope to see this incredible achievement in my lifetime.

This article by Vishal Rajput on Medium offers a reasonably approachable overview of the progress made so far:

Till recently, we were looking into different AI for different tasks. But with the introduction of Transformers, we can treat everything as a form of language and truly develop a multi-modal capacity to transform any language to any other language — from fMRI to text, from text to video, and so on.

…

We are already working on models to complete an animal sound with a small audio clip. But more than that, given the motion of the animals, we are asking our models to predict what kind of sound the animal will make.

…

We have a lot of models where we give a piano prompt, and it completes the piano piece or give 3 seconds of someone’s audio, and then it can continue to speak as if the same person is speaking. And that’s the same thing we can do for animal voices and sounds. The possibility are endless.

…

I know this sounds very exciting, but we must tread extremely carefully. It is very much possible that we might be able to communicate with animals in the next 30 years, but it is also possible that we might destroy their culture by introducing sounds that disturb their millions of years of culture and thus make them unable to hunt and survive.
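The “give it a few seconds and let it continue” idea in the quote is autoregressive prediction: look at what came before, predict the next unit, append it, repeat. Transformer-based audio models do this over discrete audio tokens with a large learned network. The toy below swaps in a simple bigram counter and hypothetical token names, so it illustrates the loop, not the model:

```python
from collections import Counter, defaultdict
from typing import Dict, List

# Hypothetical "sound tokens"; real audio models use learned codes, not names.
TRAINING = [
    ["click", "whistle", "buzz", "click", "whistle"],
    ["click", "whistle", "buzz", "pause"],
    ["whistle", "buzz", "click"],
]


def train_bigrams(sequences: List[List[str]]) -> Dict[str, Counter]:
    """Count how often each token is followed by each other token."""
    table: Dict[str, Counter] = defaultdict(Counter)
    for seq in sequences:
        for current, following in zip(seq, seq[1:]):
            table[current][following] += 1
    return table


def continue_sequence(prompt: List[str], table: Dict[str, Counter], steps: int) -> List[str]:
    """Autoregressive continuation: predict the next token, append it, repeat."""
    out = list(prompt)
    for _ in range(steps):
        followers = table.get(out[-1])
        if not followers:
            break  # token never seen with a successor: nothing to predict
        out.append(followers.most_common(1)[0][0])  # greedy: most frequent successor
    return out


table = train_bigrams(TRAINING)
print(continue_sequence(["click"], table, steps=4))
```

A transformer replaces the bigram table with a network that conditions on the whole preceding context, and usually samples rather than always picking the most frequent successor.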

The hope is to find in animal sounds the same kind of mathematical correlations between words that we have found in human language, which might unlock the secrets of communication with non-human species*.

The amazing project driving this research on non-human communication is called Earth Species Project, and it’s worth some of your exploration time.

* Yes, even those non-human species, should they ever exist. When it comes to this area of research, there’s much more at stake than what it seems at first glance.
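One common reading of “mathematical correlations between words” is the distributional idea behind word embeddings: words used in similar contexts end up with similar vectors, and the geometry of those vectors captures relationships between words. Assuming that reading, here is a toy version with count-based context vectors and cosine similarity on a hypothetical three-sentence corpus. Real embedding models learn dense vectors from vastly larger corpora, and whether animal vocalizations admit the same treatment is exactly the open question.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List


def context_vectors(corpus: List[List[str]], window: int = 1) -> Dict[str, Counter]:
    """For each token, count the tokens that appear within `window` positions of it."""
    vectors: Dict[str, Counter] = defaultdict(Counter)
    for seq in corpus:
        for i, token in enumerate(seq):
            for j in range(max(0, i - window), min(len(seq), i + window + 1)):
                if j != i:
                    vectors[token][seq[j]] += 1
    return vectors


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


corpus = [["the", "dog", "runs"], ["the", "cat", "runs"], ["a", "fish", "swims"]]
vecs = context_vectors(corpus)
print(f"dog~cat:  {cosine(vecs['dog'], vecs['cat']):.2f}")
print(f"dog~fish: {cosine(vecs['dog'], vecs['fish']):.2f}")
```

“dog” and “cat” share every context here, so they come out as near-identical vectors, while “fish” shares none; no meaning was supplied, only co-occurrence.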

This week’s Splendid Edition is titled Immortals.

In it:

- What’s AI Doing for Companies Like Mine?

- Learn what Citigroup, Twilio, Google, DataRobot, Roche, Universal Music, CD Projekt, and Apple are doing with AI.

- Prompting

- Let’s test the Drama Queen technique.