- Intro
  - How disinformation fueled by AI might impact opinions, jobs, and the economy.
- What Caught My Attention This Week
  - Thousands of surveyed engineers expect less hiring in the future due to AI.
  - The Screen Actors Guild (SAG-AFTRA), which went on strike in July 2023, accepted the use of AI to clone actor voices.
  - Duolingo cut 10% of its contractor workforce because of AI.
- The Way We Work Now
  - Bland AI promises to automate more than 500,000 inbound or outbound phone calls with synthetic voices.
  - Pakistan’s former prime minister to campaign from behind bars on Monday, with his cloned voice.

I started my career in the IT industry over 25 years ago in the then-exciting field of cybersecurity. At that time, I was focused on the most technical and tactical aspects of information technology: hacking networks and operating systems, just like you see in movies.

During that part of my life, I created one of the first four ethical hacking classes in the world for business professionals. I was teaching it when the 9/11 attacks happened. We stopped the lesson and watched the events unfold in real time on TV.

It was one of the most fun and exciting times of my life, but only because I moved out of that sector after a few years.

While I was busy doing other things in other areas of IT, the cybersecurity industry stagnated for two decades, with little fun or excitement left in it for new entrants.

Generative AI is changing everything.

If I were a young person looking for a career in cybersecurity today, I wouldn’t spend a second looking at traditional technologies like firewalls, intrusion detection/prevention systems, etc. As I have said multiple times on social media, I would focus exclusively on AI applied to cybersecurity (both for offense and defense).

Coincidentally, towards the end of 2023, a university approached me with a proposal to develop an MSc on AI applied to cybersecurity. It would have been fun to create another groundbreaking, first-of-its-kind course, exactly like 25 years ago.

We couldn’t find an agreement, but it’s entertaining to think how things could have come full circle.

Why am I so keen to recommend AI to young cybersecurity professionals, to the point of being willing to go back in time and teach it myself?

Because of this video:

This is a deepfake video I generated starting from this picture of myself:

This video represents (almost) the state of the art in deepfake technology, what people call the bleeding edge.

You should expect this technology to further improve throughout 2024.

The implications of this deepfake video are hardly fathomable by the general public (and even most technology professionals). That’s because the AI community doesn’t really show you what can be done with this technology, and most people don’t have the time or interest to imagine what’s possible.

I’m not showing you what is really possible with this technology either, but this is what you need to know:

Despite this being a very challenging scene to deepfake, it took only 2 minutes to replace my face in every frame, and another 17 minutes to enhance the quality enough to blend it better into the frames.

And I didn’t use any online service to do this. It was trivial to do it on my laptop. If I had a desktop-class computer with a consumer-grade GPU (a normal NVIDIA card like the ones kids buy to play the best videogames), this job would have taken me seconds instead of 19 minutes.

Anybody renting a server-class GPU in the cloud for a few dollars per hour can generate hundreds of these each day.
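The arithmetic behind that claim is easy to check with the figures above. The sketch below is a minimal back-of-envelope calculation: the 19-minute laptop timing (2 minutes for the face swap plus 17 for enhancement) comes from the text, while the 30-second figure for a faster GPU is a hypothetical assumption standing in for "seconds", not a measurement.

```python
# Back-of-envelope throughput for the deepfake job described above.
SWAP_MINUTES = 2        # from the text: face replacement on a laptop
ENHANCE_MINUTES = 17    # from the text: quality enhancement on a laptop
MINUTES_PER_DAY = 24 * 60


def videos_per_day(minutes_per_video: float, workers: int = 1) -> int:
    """Complete videos that fit in 24 hours, run back to back on `workers` machines."""
    return int(MINUTES_PER_DAY // minutes_per_video) * workers


laptop_minutes = SWAP_MINUTES + ENHANCE_MINUTES   # 19 minutes per video
print(laptop_minutes, videos_per_day(laptop_minutes))

gpu_minutes = 0.5  # ASSUMPTION: hypothetical 30 seconds per video on a faster GPU
print(videos_per_day(gpu_minutes))
```

Even the laptop alone, running around the clock, lands at about 75 videos a day; any per-video time measured in seconds pushes the figure into the thousands.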

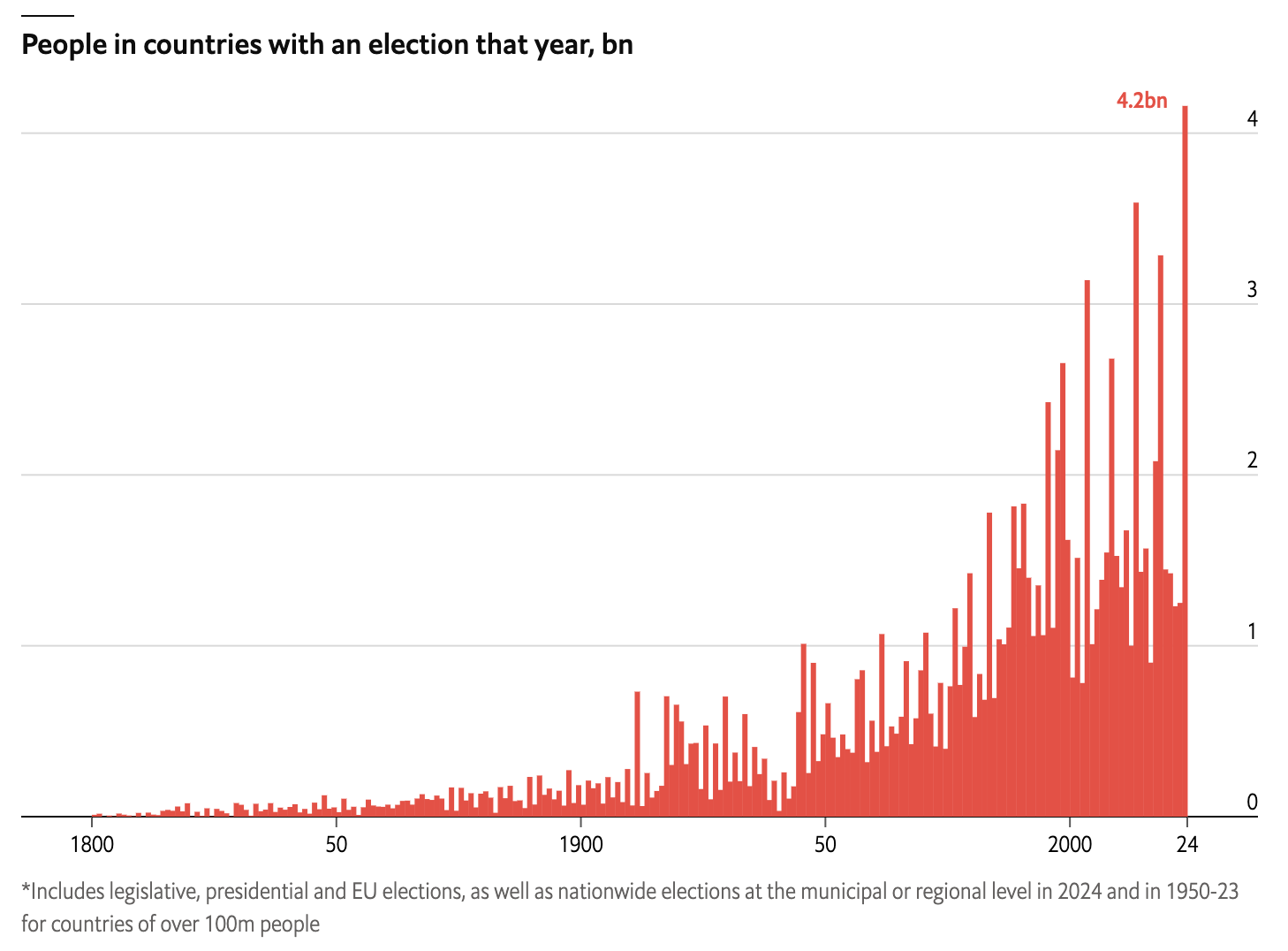

In 2024, 60 nations will hold elections, for a total of over 4 billion voters. In other words, half of the planet will be called upon to decide the future of the world. A record in the history of humanity.

Among these elections, there are:

- The presidential elections in the United States of America.

- The general elections in India, a nation that the most industrialized countries are focusing on to reduce their dependence on China.

- The presidential elections in Taiwan, on which much of the world’s production of advanced semiconductors depends.

- The presidential elections in Finland, which shares a border with Russia.

- The general elections in Mexico, which has become the most frequented entry point for illegal immigration into the United States, including for migrants from the European continent.

- The elections for the European Parliament, which is adopting an increasingly strict policy on technology regulation.

- The mayoral elections in London, which remains the most important economic hub of the European continent.

The economic and political interests at stake are unprecedented. Therefore, the incentives to use artificial intelligence to influence the elections are enormous.

But election manipulation is just the first scenario of a long list. Stock market manipulation comes immediately after.

Here on Synthetic Work, we have mentioned the warnings issued by the US Securities and Exchange Commission (SEC) about the risks of generative AI multiple times.

The Bank of England now echoes the SEC.

Kalyeena Makortoff, reporting for The Guardian:

recent increase in technological advances and available data, as well as falling costs of computing power, had fuelled “considerable interest” and more widespread use across the sector, the Bank’s financial policy committee (FPC) said.

While most companies suggested the way they were using the technology was “relatively low risk”, the FPC warned that wider adoption “could pose system-wide financial stability risks”. That could mean greater “herding behaviour”, where there is a concentration of firms making similar financial decisions that then skews markets, and an increase in cyber risks.

After stock market manipulation, we have audience manipulation for advertising purposes, the most likely and common form of mass-manipulation, and audience manipulation for macroeconomic and cultural purposes, a recurring topic in this newsletter.

The most recent example: China Using AI to Create Anti-American Memes Capitalizing on Israel-Palestine, Researchers Find

And then, of course, we have audience manipulation for all sorts of criminal purposes.

At the beginning of last year, I founded an R&D company focused on AI, and one of its research areas is disinformation and mass-manipulation. Even though this topic is out of scope for Synthetic Work, I work on these technologies every day.

So much so that I have decided to create a new page on my personal website called The State of DeepFake, where I’ll publish a growing number of deepfakes as the technology matures, in an attempt to raise awareness about how far a bad actor can go with it.

But then, if deepfakes and mass-manipulation are out of scope for Synthetic Work, why am I talking about them today?

To point out that generative AI is impacting jobs and careers even in indirect ways.

How important will the role of a cyberforensic analyst be in the future compared to the last 20 years?

How will the role of the investigator change, if at all?

What will it mean for a Chief Information Security Officer (CISO) to defend a company from cyberattacks going forward?

And, on the other side of the fence: the associate of a criminal organization today engages in activities that are very, very different from those of 30 years ago. They used to kidnap entrepreneurs and blow judges up with explosives. Now they create planetary-scale scams, steal cryptocurrency, and blackmail corporations with ransomware.

How will their jobs change now that they can easily use AI to alter reality and convince people of anything they want? Will all the criminals of the future turn into cybercriminals?

The potential impact of all of this is not just on this or that job, but on the entire economy and society.

Larry Elliott, reporting for The Guardian:

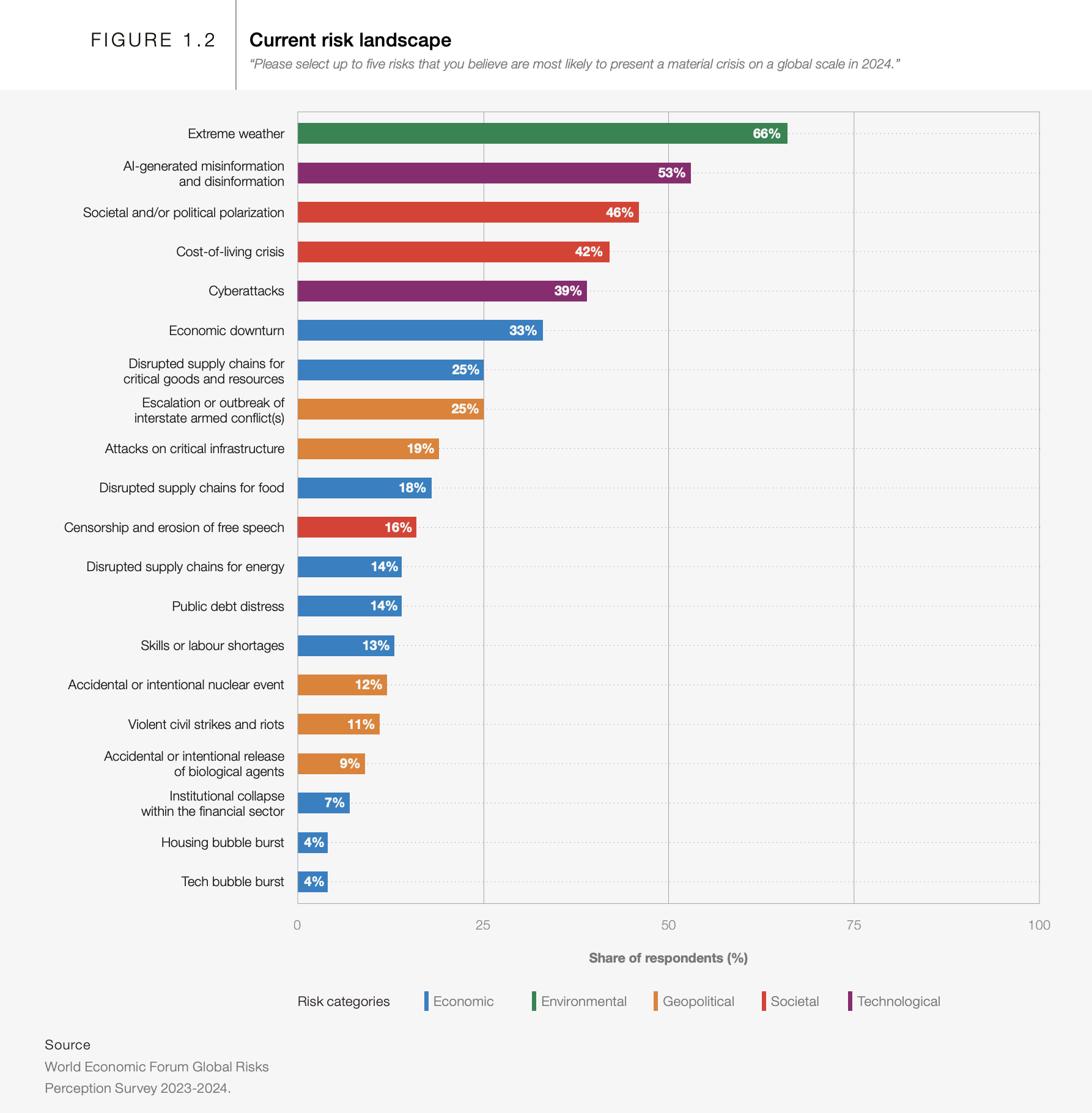

A wave of artificial intelligence-driven misinformation and disinformation that could influence key looming elections poses the biggest short-term threat to the global economy, the World Economic Forum (WEF) has said.

In a deeply gloomy assessment, the body that convenes its annual meeting in Davos next week expressed concern that politics could be disrupted by the spread of false information, potentially leading to riots, strikes and crackdowns on dissent from governments.

The WEF’s annual risks report – which canvasses the opinion of 1,400 experts – found 30% of respondents thought there was a high risk of a global catastrophe over the next two years, with two-thirds fearful of a disastrous event within the next decade.

The WEF said concerns over the persistent cost of living crisis and the intertwined risks of disinformation and polarised societies dominated the outlook for 2024.

Alessandro

Thousands of surveyed engineers expect less hiring in the future due to AI.

Maxwell Strachan, reporting for Vice:

9,388 engineers polled by Motherboard and Blind said AI will lead to less hiring. Only 6% were confident they’d get another job with the same pay.

…

“The amount of competition is insane,” said Joe Forzano, an unemployed software engineer who has worked at the mental health startup Alma and private equity giant Blackstone. Since he lost his job in March, Forzano has applied to over 250 jobs. In six cases, he went through the “full interview gauntlet,” which included between six and eight interviews each, before learning he had been passed over. “It has been very, very rough,” he told Motherboard.

Forzano is not alone in his pessimism, according to a December survey of 9,338 software engineers performed on behalf of Motherboard by Blind, an online anonymous platform for verified employees. In the poll, nearly nine in 10 surveyed software engineers said it is more difficult to get a job now than it was before the pandemic, with 66 percent saying it was “much harder.”

Nearly 80 percent of respondents said the job market has even become more competitive over the last year. Only 6 percent of the software engineers were “extremely confident” they could find another job with the same total compensation if they lost their job today while 32 percent said they were “not at all confident.”

…

As an undergraduate at the University of Pennsylvania in the early 2010s, Forzano had decided to major in computer science. The degree had put him in $180,000 of debt, but he saw it as a calculated bet on a sturdy field of work. “The whole concept was [that] it was a good investment to have that ‘Ivy League degree’ in an engineering field,” he said. He thought he’d be set for life.

…

As of December, software engineers were not expressing much concern about AI making their jobs redundant. Only 28 percent saying they were “very” or “slightly” concerned in the Blind poll, and 72 percent saying they were “not really” or “not at all” concerned. But when not considering their own situation, the software engineering world’s views on AI became markedly less optimistic. More than 60 percent of those surveyed said they believed their company would hire fewer people because of AI moving forward.

So much for the “10x/100x/1000x engineer” meme.

But be careful: this article implies a strong correlation between the difficulty of finding a job today and the expectation that AI will lead to fewer jobs tomorrow. That is not necessarily the case, and very little can be said on this topic without access to the data and the survey questions.

The Screen Actors Guild (SAG-AFTRA), which went on strike in July 2023, accepted the use of AI to clone actor voices.

Ash Parrish, reporting for The Verge:

During CES 2024, SAG-AFTRA announced an agreement with AI voice technology company Replica Studios. The agreement would permit SAG-AFTRA members, specifically voice performers, to work with Replica to create digital replications of their voices. Those voices can then be licensed out for use in video games and other interactive media projects with SAG-AFTRA authored protections.

In the announcement, SAG-AFTRA characterized the deal as a “way for professional voice over artists to safely explore new employment opportunities for their digital voice replicas with industry-leading protections tailored to AI technology.” However, as news of the deal reached the voice performer community at large, the reaction was less positive with performers either outright condemning the deal or voicing concerns about what this deal means for the future health and viability of their profession.

…

The SAG-AFTRA Interactive Media Agreement, a union contract that covers roughly 140,000 members and has several of the video game industry’s largest publishers as signatories including Activision Blizzard, Take-Two, and Electronic Arts, is currently under negotiation.

…

The Interactive Media Agreement, however, does not cover Replica Studios, and this new deal was made separately from that ongoing negotiation.

…

Basically, Replica will act as a SAG-AFTRA-approved third-party provider of AI voices to video game companies. If a SAG-AFTRA member chooses to license out their voice, Replica’s agreement ensures that performer will be fairly compensated, their voice data will be protected from unauthorized use, and that a replicated voice cannot be used in a project without the performer’s informed consent.

The voice actors pleased with this deal, and the ones who feel betrayed by it, are missing the point.

It’s completely irrelevant whether voice actors will be compensated or not. Very soon, we’ll reach a level of technological maturity allowing any company or individual to generate completely original synthetic voices, capable of replicating human emotions and nuances with a level of fidelity that will be indistinguishable from reality.

At that point, unless we are talking about a world-class actor like Morgan Freeman, nobody will have an incentive to use a real human voice.

Human voices are limited, expensive, and not available 24/7.

Synthetic voices are infinite and cheap, and their impact on the audience can be measured and acted on in a fully automated way.

If anything, companies will race to find the one synthetic voice that is most beloved by national and international audiences. At that point, human voice actors will not be fairly compensated. They will not be compensated at all.

Duolingo cut 10% of its contractor workforce because AI can “come up with content and translations, alternative translations, and pretty much anything else translators did.”

Brody Ford and Natalie Lung, reporting for Bloomberg:

About 10% of contractors were “offboarded,” a company spokesperson said Monday. “We just no longer need as many people to do the type of work some of these contractors were doing. Part of that could be attributed to AI,” the spokesperson said.

…

The spokesperson said the job reduction isn’t a “straight replacement” of workers with AI, as many of its full-time employees and contractors use the technology in their work. Duolingo had 600 full-time workers at the end of 2022, according to company filings. The spokesperson added that no full-time employees were affected by the cutback.

Lauren Forristal, reporting for TechCrunch, adds some color:

“The reason [Duolingo] gave is that AI can come up with content and translations, alternative translations, and pretty much anything else translators did. They kept a couple people on each team and call them content curators. They simply check the AI crap that gets produced and then push it through,” wrote No_Comb_4582.

A company spokesperson explained to us that GPT is used to translate sentences and then “human experts validate that the output quality is high enough for teaching and is in accordance with CEFR standards for what learners should be able to do at each CEFR level.”

…

the company disputed calling the departures “layoffs,” saying that only a “small minority” of Duolingo’s contractors were let go as their projects wrapped.

The next time you read somebody online sarcastically saying that AI is not replacing any job, maybe invite them to read this newsletter. They might wake up from the dream and start working on a plan B.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Given that we focused on disinformation and synthetic voices in this issue of Synthetic Work, I’d like to draw your attention to a new demo from the startup Bland AI.

Listen carefully:

The startup promises to automate more than 500,000 inbound or outbound phone calls simultaneously. And it promises to allow you to clone any voice you want.

In itself, the approach is not revolutionary. In 2023, we already saw various examples of startups that combined different AI models to support phone conversations with humans.

What happens is that one AI model converts what the person answering the phone says into text. This is called speech-to-text (STT). Then, another AI model generates a sensible written response to what the person said. This is our dear friend, the large language model (LLM).

Finally, a third AI model converts the response of the LLM into an audio file with a realistic synthetic voice. This is called text-to-speech (TTS).
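The three-stage loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Bland AI’s implementation: `CallAgent`, `fake_stt`, `fake_llm`, and `fake_tts` are hypothetical names, and the three stubs stand in for real speech-to-text, LLM, and text-to-speech models.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stage signatures: audio bytes in and out, text in between.
SpeechToText = Callable[[bytes], str]
LanguageModel = Callable[[str, list[str]], str]
TextToSpeech = Callable[[str], bytes]


@dataclass
class CallAgent:
    stt: SpeechToText
    llm: LanguageModel
    tts: TextToSpeech
    history: list[str] = field(default_factory=list)

    def handle_turn(self, caller_audio: bytes) -> bytes:
        """One conversational turn: caller audio in, synthetic reply audio out."""
        text = self.stt(caller_audio)            # 1. speech-to-text (STT)
        self.history.append(f"caller: {text}")
        reply = self.llm(text, self.history)     # 2. LLM drafts a written reply
        self.history.append(f"agent: {reply}")
        return self.tts(reply)                   # 3. text-to-speech (TTS)


# Stub models so the sketch runs without any real AI service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already a transcript


def fake_llm(text: str, history: list[str]) -> str:
    return f"You said '{text}'. How can I help?"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend these bytes are synthesized speech


agent = CallAgent(fake_stt, fake_llm, fake_tts)
print(agent.handle_turn(b"Hello?").decode("utf-8"))
```

Keeping each stage behind a plain function type means any one model can be swapped for a faster one without touching the others, which is exactly where latency optimizations would plug in.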

These three models usually take seconds to respond, which is too long for a credible phone conversation. But in the last 6 months, the international AI community has developed a series of optimizations for all three models, to the point that today it is possible to get a response from each one in milliseconds rather than seconds.
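Latency matters because the three stages run one after the other, so the caller waits for the sum of all three. The per-stage numbers and the one-second budget below are hypothetical assumptions chosen only to illustrate "seconds" versus "milliseconds"; they are not measurements of any real system.

```python
# Hypothetical per-stage latencies in milliseconds (illustrative assumptions, not measurements).
BEFORE_MS = {"stt": 1500, "llm": 3000, "tts": 2000}   # "seconds" per stage
AFTER_MS = {"stt": 150, "llm": 300, "tts": 200}       # "milliseconds" per stage
TURN_BUDGET_MS = 1000  # ASSUMPTION: longest pause that still feels conversational


def turn_latency_ms(stages: dict[str, int]) -> int:
    """STT, LLM, and TTS run in sequence, so the caller waits for the sum."""
    return sum(stages.values())


for label, stages in (("before", BEFORE_MS), ("after", AFTER_MS)):
    total = turn_latency_ms(stages)
    verdict = "fits the budget" if total <= TURN_BUDGET_MS else "too slow"
    print(f"{label}: {total} ms ({verdict})")
```

Because the delays add up, shrinking one stage is not enough: a fast LLM still leaves the caller waiting if speech recognition or voice synthesis takes seconds.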

Bland has put together the optimized version of these three models, and has created an automation engine capable of sustaining credible and fast phone conversations.

Part of the trick is that the voice quality generated by the TTS model is low.

In many other use cases, this would be a problem. But if the startup aims to simulate a fixed-line phone call, and targets the segment of the population less attentive to technological developments, or less detail-oriented, then the low quality of the synthetic voice becomes a strength rather than a weakness.

Think about how many voters can be reached in this way, especially in the days immediately before an election. Think about how quickly it’s possible to spread a message, whether legitimate or illegitimate, or a news story, true or false, among those who are more vulnerable to scams but who go to vote regularly.

But let’s not think about the worst-case scenario.

Instead, let’s think about all those people who are in outbound sales around the world. Those jobs are safe, as long as you can make 500,000 phone calls a day.

Pakistan’s former prime minister to campaign from behind bars on Monday, with his cloned voice.

The Guardian reports the story (without a byline):

Artificial intelligence allowed Pakistan’s former prime minister Imran Khan to campaign from behind bars on Monday, with a voice clone of the opposition leader giving an impassioned speech on his behalf.

Khan has been locked up since August and is being tried for leaking classified documents, allegations he says have been trumped up to stop him contesting general elections due in February.

His Pakistan Tehreek-e-Insaf (PTI) party used artificial intelligence to make a four-minute message from the 71-year-old, headlining a “virtual rally” hosted on social media overnight on Sunday into Monday despite internet disruptions that monitor NetBlocks said were consistent with previous attempts to censor Khan.

PTI said Khan sent a shorthand script through lawyers that was fleshed out into his rhetorical style. The text was then dubbed into audio using a tool from the AI firm ElevenLabs

…

The audio was broadcast at the end of a five-hour live-stream of speeches by PTI supporters on Facebook, X and YouTube, and was overlaid with historic footage of Khan and still images.

…

“It wasn’t very convincing,” said the 38-year-old business manager Syed Muhammad Ashar in the eastern city of Lahore. “The grammar was strange too. But I will give them marks for trying.”

…

Analysts have long warned that bad actors may use artificial intelligence to impersonate leaders and sow disinformation, but far less has been said on how the technology may be used to skirt state suppression.

Long-term readers of Synthetic Work know that the job of politicians is being impacted by generative AI, at least in terms of how they draft policies and how they collect feedback from the population.

Now even political campaigns could be transformed by this technology.

Normally, this section of the newsletter features only one story per week. Today we make an exception because I hope you can see the implications of combining these two stories.

What happens if I clone the voice of an influential political leader to spread a message to 500,000 voters the day before the elections?

What happens if I clone the voice of a controversial political opponent to spread a lie to 500,000 voters the day before the elections?

What happens if I clone the voice of a hateful political leader of the past to rally 500,000 fanatics the day before the elections?

This week’s Splendid Edition is titled Even a monkey could be an AI master with this.

In it:

- What’s AI Doing for Companies Like Mine?
  - Learn what the US State of Pennsylvania, the US National Security Agency (NSA), and Nasdaq (perhaps) are doing with AI.
- A Chart to Look Smart
  - High-quality prompting can save companies millions in inference costs.
- Prompting
  - A simple prompting technique to mitigate the bias in LLM answers before you deploy AI applications in production.
- The Tools of the Trade
  - A mighty plugin to turn WordPress into an AI powerhouse for you and your lines of business.