- Maersk and Wesco have started using generative AI to negotiate contracts with their suppliers.

- Unilever and Siemens are using generative AI to analyze their suppliers and find replacements.

- Travelers Cos. is using AI to assess the condition of insured sites and assist claim management.

- Ubisoft is testing the use of generative AI for a wide range of applications, from game design to scriptwriting.

- In the What Can AI Do for Me? section, we learn a technique to improve the quality of our corporate presentations with AI-generated images.

I’m very happy to report that the AI Adoption Tracker now counts 76 documented early adopters. At the current pace, which I deliberately keep in check to give you the chance to digest the information, we’ll be well over 100 by the end of the year. That’s enough to illustrate the opportunity across industries and give everyone plenty of business ideas.

So much so that I’m thinking about putting together a conference talk titled “How 50 Early Adopters Are Using AI to Transform Their Industries.”

That said, before you dive into today’s Splendid Edition, here’s a short quote from Ilya Sutskever, OpenAI co-founder and Chief Scientist, that I captured from his brief interview at the CEO Summit hosted by Khosla Ventures:

I think that two things are of value, two things are worth keeping in mind. One is obvious: some kind of special data that cannot be found anywhere else. That can be extremely helpful. And I think the second one is to always keep in mind not just about where things are right now but where things will be in two years, in four years, and try to plan for that.

I think those two things are very helpful. The data is helpful today, but even a little bit, kind of trying to get an intuitive sense for yourself of where do you imagine things being in, say, three years, and how will it affect some of the basic assumptions of what the product is trying to do. I think that can be a helpful thing.

…

Say you’re playing with a model and you can see that the model can do something really cool and really maybe amazing if it was reliable, but it’s so unreliable so you kind of like “Forget it, it’s not. There’s no point using it.”

So, that’s the kind of thing which can change… Something which is unreliable can become reliable enough. What people are sharing, and you say “Oh, look at this cool thing, which works once in a while, but if it worked, what would happen?”

These kinds of thought experiments, I would argue, can help prepare for the kind of near- to medium-term future.

As usual, if you find this newsletter valuable, please share it far and wide.

Alessandro

What we talk about here is not what could be, but what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

In the Logistics industry, Maersk and Wesco have started using generative AI to negotiate contracts with their suppliers.

Oliver Telling, reporting for the Financial Times:

Some of the world’s biggest companies are turning to artificial intelligence to navigate increasingly complex supply chains as they face the impact of geopolitical tensions and pressure to eliminate links to environmental and human rights abuses.

…

Although AI support in supply chain management has been used for years, the development of so-called generative AI technology has been offering more opportunities to further automate the process.

More multinationals have faced the need to keep abreast of their suppliers and customers amid disruptions during the Covid-19 pandemic as well as rising geopolitical tensions.

New supply chain laws in countries such as Germany, which require companies to monitor environmental and human rights issues in their supply chains, have driven interest and investment in the area.

…

In December, the world’s second-largest container shipping group helped provide $20mn in funding for Pactum, a San Francisco business that says its ChatGPT-like bot has been negotiating contracts with suppliers for Maersk, Walmart and distribution group Wesco.

…

“When there is war or Covid or supply chain disruption, you need to reach out to suppliers,” said Kaspar Korjus, Pactum’s co-founder, who said the start-up’s chatbot was negotiating deals worth up to $1mn on behalf of “tens” of Fortune 500 companies. “[With] one disruption after another these days, it takes humans too much time…Walmart don’t have time to reach out to tens of thousands of suppliers.”

Maersk is also using generative AI for automated customs classification, forced labor compliance, and shipment screening.

From the same article:

Evan Smith, chief executive of New York start-up Altana, said the company, whose customers include Danish shipping group Maersk as well as the US border authorities, has scoured customs declarations, shipping documents and other data to build a map connecting 500mn companies globally.

Customers can use its AI-enabled platform to trace products back to suppliers in Xinjiang, Smith added, or track if their own products are being used in Russian weapons systems.

“Just to build the map, you’re talking about billions of data points in different languages. The only way you can work through all that raw data is with AI.”

Up to 96 per cent of supply chain professionals are planning to use AI technology, according to a survey this month of 55 executives by logistics group Freightos, although only 14 per cent were already using it.

Almost a third believed that using AI would lead to significant job cuts in their business, however, underlining concerns over the technology’s impact on job security.

From the previous article, we learn that Unilever, in the Consumer Staples industry, and Siemens, in the Manufacturing industry, are using generative AI to analyze their suppliers and find replacements.

Oliver Telling, reporting for the Financial Times:

Since 2019, Siemens has employed the services of Scoutbee, a Berlin start-up that this year launched a chatbot that it says can respond to requests to locate alternative suppliers or vulnerabilities in a user’s supply chain. “The geopolitical aspect is a key topic for Siemens,” said Michael Klinger, a supply chain executive at the company.

Scoutbee chief executive Gregor Stühler said Unilever, another customer and the maker of Marmite and Magnums, was also able to identify new suppliers when China went into lockdown during the pandemic.

In the Insurance industry, Travelers Cos. is using AI to assess the condition of insured sites and query troves of documents.

Rick Wartzman and Kelly Tang mention it in the report for the Wall Street Journal we discussed in the Free Edition of this week:

Travelers Cos., which notched an overall effectiveness score of 62.1 last year to rank 107 and has led the insurance industry in AI postings since 2020, has also been leaning in. CEO Alan Schnitzer highlighted for analysts this month that among the ways Travelers has leveraged AI is to “assess roof and other site-related conditions,” in conjunction with aerial imagery, so as to “achieve pricing that is accurately calibrated to the risk” of insuring those parcels; “facilitate targeted cross-selling, supporting our effort to sell more products to more customers”; and garner “insights that improve the customer experience.”

Travelers has extended beyond traditional AI, which can analyze huge amounts of data and make predictions, and into the realm of generative AI, which can create new content. For instance, it is piloting a “Travelers claim knowledge assistant,” a generative AI tool trained on a mountain of proprietary, technical source material that was previously only accessible in thousands of different documents. Its aim, Schnitzer told analysts, is for the company’s claims professionals “to easily access…actionable information,” thereby “increasing speed, accuracy and consistency” with consumers and distribution partners.

In the Gaming industry, Ubisoft, via its R&D division called La Forge, is testing the use of generative AI for a wide range of applications, from game design to scriptwriting.

Fernanda Seavon, reporting for Wired:

“Incorporation of AI in our workflows relies on three axes: creating more believable worlds, reducing the number of low-value tasks for our creators, and improving the player experience,” says Yves Jacquier, executive director of Ubisoft La Forge.

Jacquier describes several ways that his company is already experimenting with AI, from smoother AI-driven motion transitions in Far Cry 6, which make the game look more natural, to the bots designed to improve the new player experience in Rainbow Six Siege. There’s also Ghostwriter, an AI-powered tool that allows scriptwriters to create a character and a type of interaction they would like to generate and offers them several variations to choose from and edit.

“Our guiding principle when it comes to using AI for game development is that it needs to assist the creator, not the creation,” Jacquier adds. With a “human-in-the-loop” approach, Jacquier says that AI will not replace or compete with developers, but will facilitate and optimize some aspects of their work, or open new possibilities of creation for them.

When I wrote Issue #14 – How to prepare what could be the best presentation of your life with GPT-4, one of the most popular Splendid Editions ever published, I omitted one part: how to generate the images that accompany the text in each slide.

Arguably, this is one of the most time-consuming and difficult parts of preparing a presentation. Most people, especially in the tech industry where I served most of my career, don’t think images are that important. Those people don’t realize that, according to some estimates, 30% to more than 50% of the human cortex is dedicated to visual processing.

We are visual creatures (which explains why most of us would prefer to watch a YouTube video than read an impossibly long newsletter like this one).

But even when we don’t ignore this fact, the effort required to find the right image for each slide is so enormous that most of us just give up and settle for a white slide with bullet points.

At which point, we need to be honest with ourselves.

If our goal is to check the box in our project management app and say that we have delivered the presentation on time, then we are good to go, and the aforementioned Splendid Edition will be more than enough to help.

If, instead, our goal is to be sure that our idea is understood and remembered by the audience, and it spreads far and wide, then we’ll need to make an effort to find the right images, too.

If you are a senior executive, preparing a big conference keynote, and you work for a public company, there’s a chance that your overreaching marketing department will insist on using poorly chosen stock images to illustrate your points.

I always pushed back against that practice and I don’t recommend it to anybody.

The designers in the marketing department can’t understand what was in your mind when you prepared the slides, and can’t possibly find the best images to convey the message you want to convey.

You might argue that, as a senior executive, you are always pressed for time and can’t possibly dedicate time to finding images in a stock catalog.

The counter-argument is that there’s nothing more important that you could do than spreading the ideas in your presentation to advance the cause of your company, and if you don’t have the time to do a really good job, then maybe you shouldn’t be the presenter in the first place.

There’s a reason why Steve Jobs dedicated three weeks to a month to the rehearsal of the WWDC conference keynote.

Something tells me that he was pressed for time, too.

So let’s assume that we are in charge of our images and we want to do a good job.

In reality, this task involves two challenges:

- You have to figure out what you want to say and translate that into an image that fits the narrative

- You have to find that image

The first challenge is the truly important one, and we’ll get to that in a moment.

The second challenge is just a search problem. Or it used to be.

Until six months ago, your only option would have been to spend the time you don’t have on stock image websites, trying to find the right picture or diagram.

This problem is now almost completely solved thanks to a particular type of generative AI model called the diffusion model, which has reached a surreal level of maturity.

This, for example, is a picture generated by an AI artist with Midjourney 5.2 just this week:

Diffusion models are the ones that power so-called text-to-image (txt2img or t2i) AI systems like Midjourney or DreamStudio by Stability AI.

Just like for large language models, your prompt is a text description of the image you want to generate and it requires some practice to generate the images you want at a quality that is acceptable for a conference presentation. But at least, you can have exactly the image you want, and not a poor substitute found after hours of searching on a stock image catalog.

The t2i systems that exist today have different strengths and weaknesses, so let’s review them briefly:

Midjourney is very opinionated in terms of look and feel. It doesn't offer ultimate flexibility in terms of poses and styles.

It also heavily censors some innocuous words and concepts in the prompt as it prepares to launch on the Chinese market.

Midjourney is the target of one or more lawsuits for alleged copyright infringement. That might have legal implications for companies using its service.

An open access version of DreamStudio, called StableStudio, is available for free and can be customized if you have the technical skills.

To achieve maximum flexibility in terms of poses, style, and automation (unmatched by other t2i systems), you have to download the new diffusion model underpinning DreamStudio, SDXL 1.0, run it on your own computer (or a cloud instance), and replace the DreamStudio interface with open source alternatives like WebUI, SD.Next, or ComfyUI. All of this requires technical skills and has a steep learning curve.

Because of the legal risks associated with the way Midjourney has trained its diffusion model, many companies prefer to play it safe and use Adobe Firefly instead, even if its quality is not as good yet.

Eventually, the supreme courts around the world will have to decide whether diffusion models trained on copyrighted material infringe copyright or not. But this is a long-term conversation that we are following through the Free Edition of Synthetic Work.

While we wait for courts all around the world to reach a consensus, we’ll use Adobe Firefly for this tutorial.

Beyond these four, there are hundreds of other t2i systems built on top of diffusion models. Some of them simply enforce an invisible system prompt that guarantees higher-quality images at the expense of flexibility. Others have fine-tuned the underlying diffusion models to exclusively generate a certain type of image, like home furniture for interior design, or supermodels for advertising.

For today’s task, the general-purpose t2i systems in the comparison table are the most relevant ones.

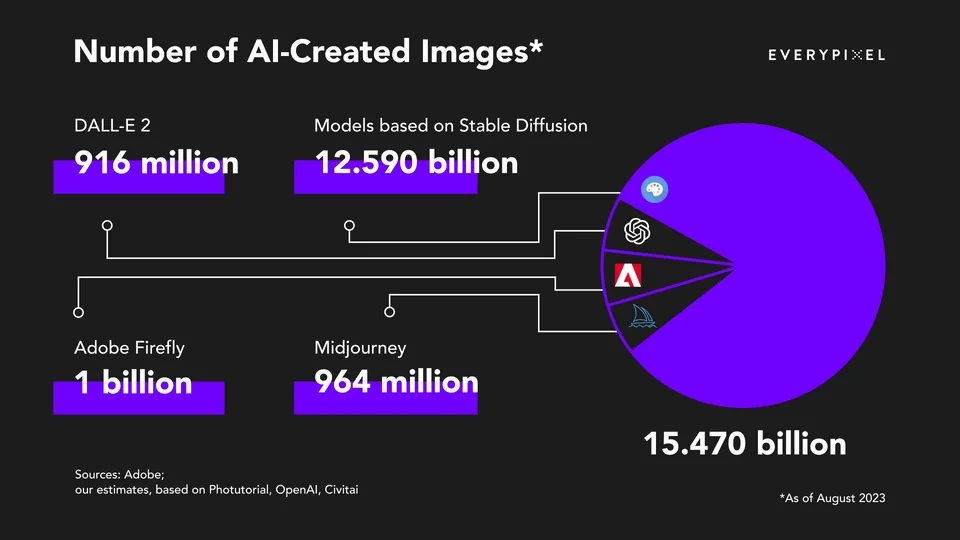

And boy, have people used these t2i systems!

The bottom line is that generative AI has drastically reduced the friction in finding the right images for your presentation. But it has not eliminated it, because you still have to write the prompt, and that’s not trivial. Yet.

Generating Images for Presentations

As I said before, writing prompts for a t2i system can be as nuanced as writing prompts for an AI chatbot powered by a large language model. It’s not something that can be exhausted in a single tutorial.

If there’s enough interest, once Midjourney finally enables a web interface, we will dedicate some How to Prompt sections of the Splendid Edition to image generation.

For now, we want to focus on a single trick: get an AI chatbot like OpenAI ChatGPT to write the prompt for a t2i system like Adobe Firefly.

Which brings us back to the first challenge of the two I previously listed: you have to figure out what you want to say for each slide and translate that into an image that fits the narrative.

The goal is to automate the process and generate the images for our presentation in bulk.

So, how can we do such an ambitious thing?

There are many ways to approach the problem. Today we’ll focus on my favorite one.

Explain by Analogy

I’ve been on conference stages worldwide for 23 years, delivering almost 500 presentations, and I’ve always used this approach, explaining complex ideas with analogies that could be represented by a single image.

The beauty of this approach is that people in your audience are already familiar with a lot of very complex systems that they encounter in their daily lives. They have already dedicated significant brain power to understanding those systems. So there’s a broad range of mental models that you could leverage to explain your thing without adding extra cognitive load to your audience.

Put another way: in most cases, there’s no need to articulate yet another very complex system for your audience. You just have to map, one to one, the complex idea you want to spread onto a complex idea already in their brains.

The less beautiful part of this approach is that, apparently, making analogies is extremely difficult for people. That’s why so few do it, and so many do it poorly.

But large language models can do it splendidly.

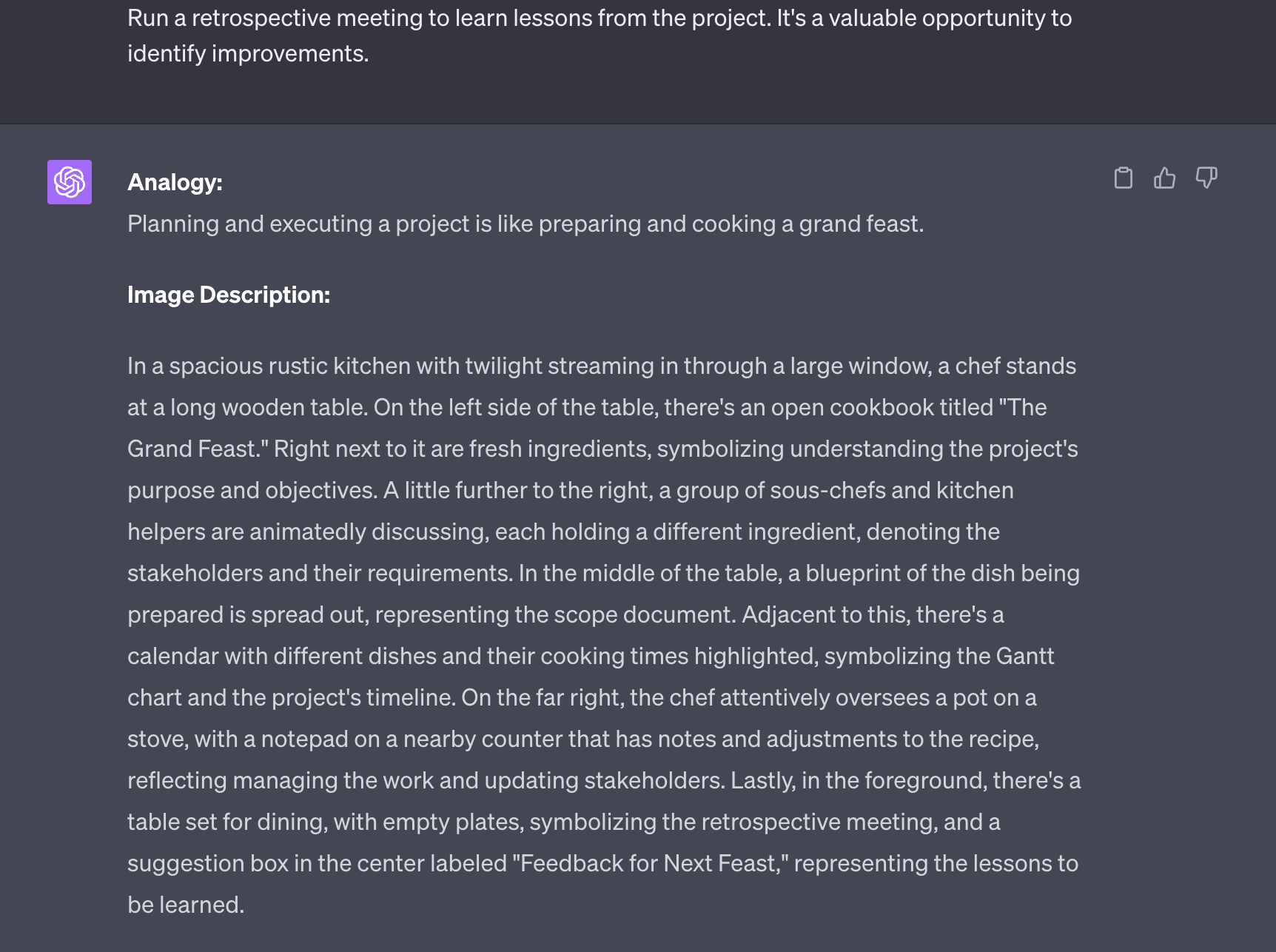

So, can we use GPT-4 to generate an analogy representing whatever is in our slide and, from there, generate a prompt suitable for Adobe Firefly?

Let’s find out.

To start, let’s pick a random presentation. I head to SlideShare, a graveyard of terrible bullet-point presentations, and I stumble upon this one from 2016:

Possibly one of the driest presentations ever created.

Here the author simply took the text that would normally go in a document and put it on slides. For this person, like for most of us, a slide is just a document page with a different aspect ratio.

So, how can we extract a memorable analogy from a slide like this?

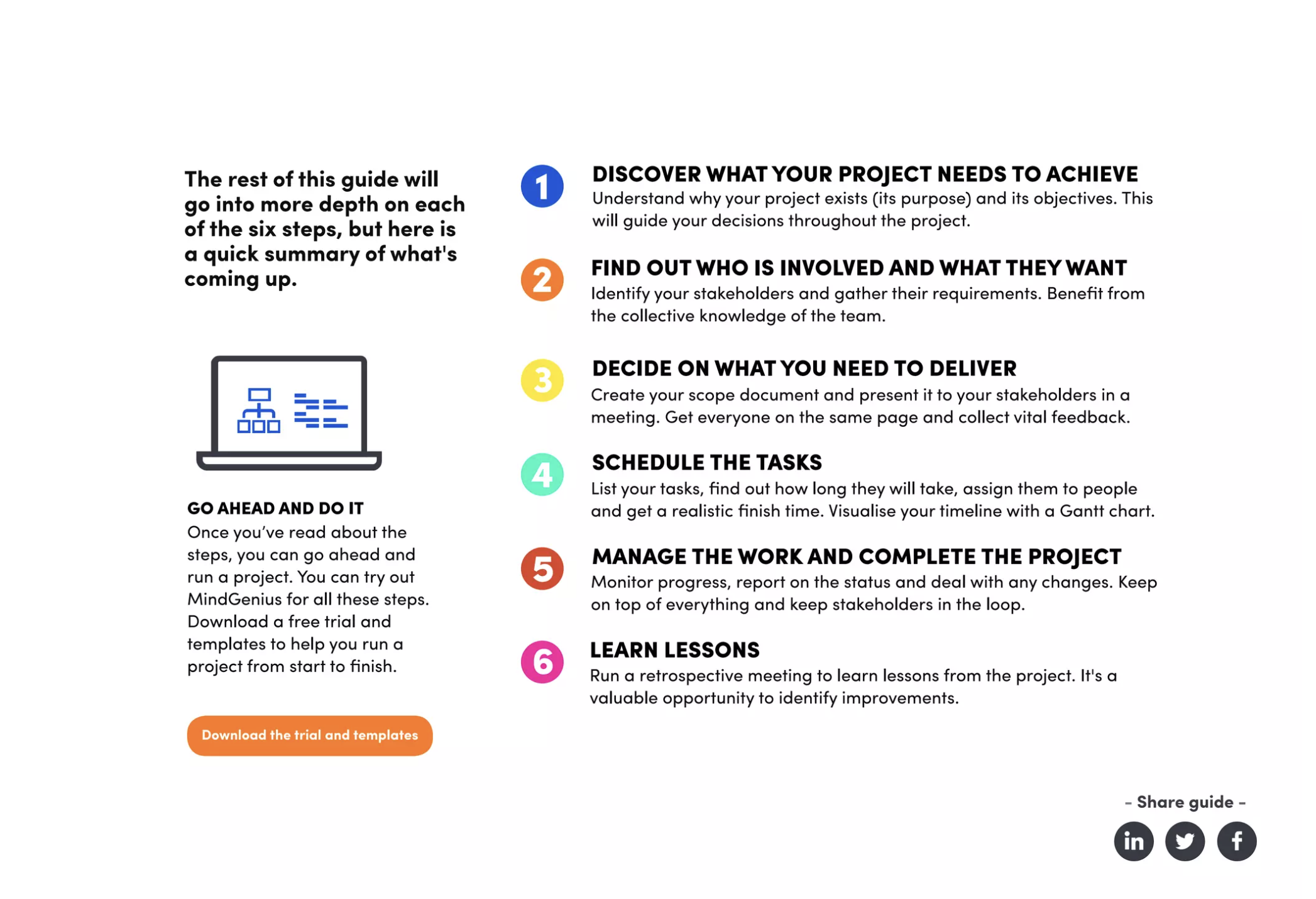

GPT-4, please do a miracle:

Notice that, as usual, we used the Assign a Role technique referenced in the How to Prompt section of Synthetic Work.

Also, notice that we broke the task into two parts to achieve higher accuracy: first, we asked GPT-4 to generate an analogy, and then to describe an image that represents the analogy.

Finally, notice that we asked the model to generate a single paragraph to describe the image representing the analogy. GPT-4 is far more capable than that and can easily generate a full page of instructions, but today’s diffusion models don’t accept long prompts and even the one generated below will have to be trimmed a bit.
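The two-step chain can be sketched in a few lines of code, which helps when you want to reuse it across many slides. The sketch makes several assumptions. `ask_llm` is a hypothetical function that sends a chat conversation to GPT-4 or a similar model and returns the reply text. The role and the wording of both requests are illustrative, not the exact prompt used in this tutorial. The key idea is that the second request continues the same conversation, so the model describes its own analogy.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical hook: any function that sends a chat conversation to an LLM
# (GPT-4 or similar) and returns the text of the reply.
AskLLM = Callable[[List[Message]], str]

# Illustrative wording only; this is NOT the exact prompt used in the tutorial.
ROLE = ("You are a world-class keynote speaker who explains complex ideas "
        "through vivid analogies drawn from everyday life.")


def slide_to_image_prompt(slide_text: str, ask_llm: AskLLM) -> Dict[str, str]:
    """Two-step chain: ask for an analogy first, then for a one-paragraph image description."""
    # Step 1: assign a role, then ask only for the analogy.
    messages: List[Message] = [
        {"role": "system", "content": ROLE},
        {"role": "user", "content": "Suggest one analogy that captures the content "
                                    "of this slide:\n\n" + slide_text},
    ]
    analogy = ask_llm(messages)

    # Step 2: continue the same conversation so the model describes its own analogy,
    # and ask for a single paragraph because diffusion models accept only short prompts.
    messages = messages + [
        {"role": "assistant", "content": analogy},
        {"role": "user", "content": "Describe, in a single paragraph, one image that "
                                    "represents this analogy."},
    ]
    image_prompt = ask_llm(messages)
    return {"analogy": analogy, "image_prompt": image_prompt}


# Usage with a stand-in model, so the chain can be exercised without any API key.
def fake_llm(messages: List[Message]) -> str:
    if len(messages) == 2:
        return "Running a project is like cooking a dinner party."
    return "A chef in a busy kitchen checks a recipe pinned to the wall."


result = slide_to_image_prompt("Project phases: initiation, planning, execution", fake_llm)
print(result["image_prompt"])
```

Splitting the work into two calls costs an extra round trip. In exchange, you can inspect or replace the analogy before any image description is written.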

Nonetheless, sometimes miracles happen:

I’m sure that the cooking analogy has been used before in the context of project management, so this was not an overly challenging task. Nonetheless, you can see GPT-4 being quite creative in details like the analogy for the Gantt chart or the lessons to be learned.

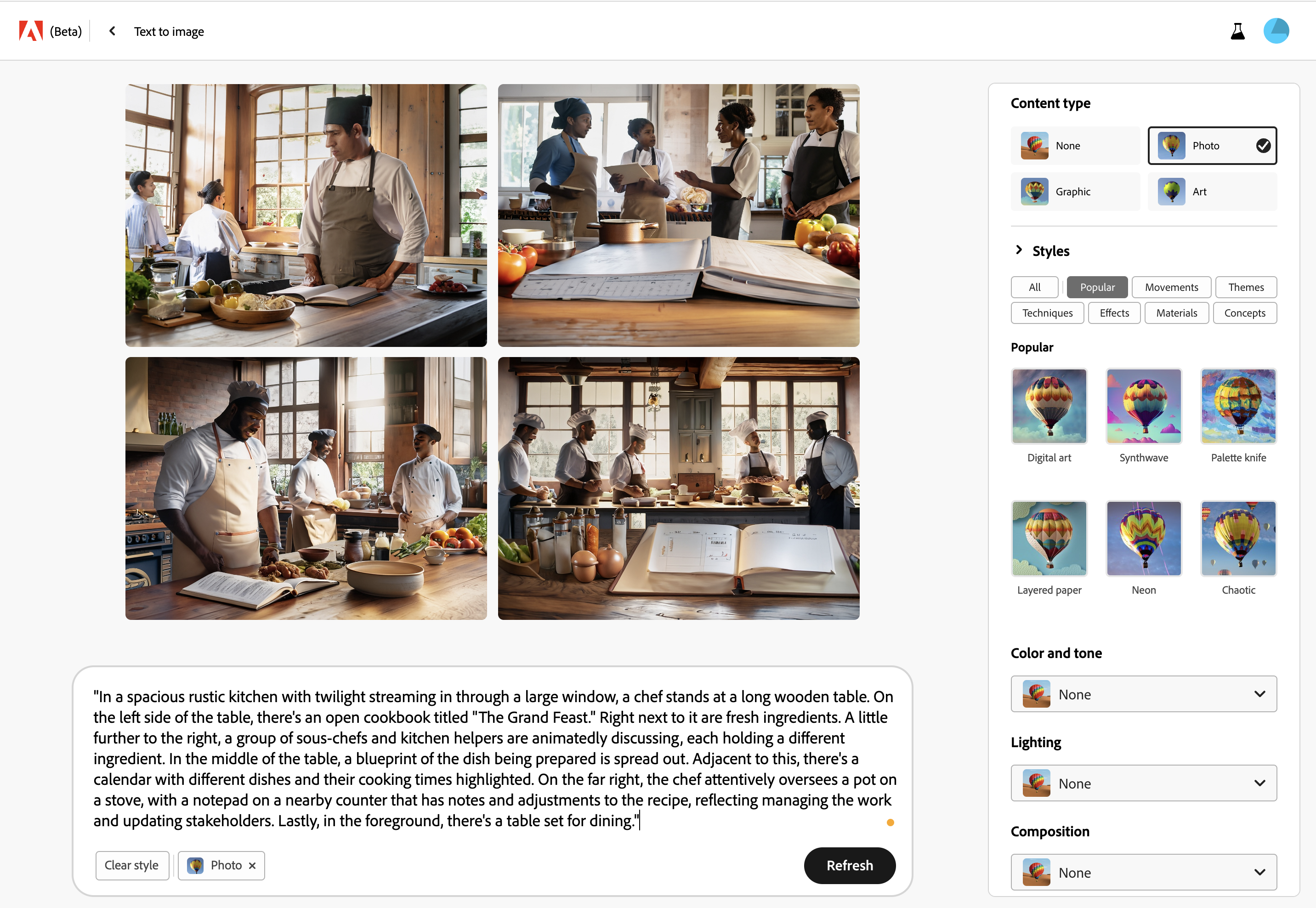

Now we simply take this and put it into Adobe Firefly to generate our image. Again: I had to trim the prompt a bit because it didn’t fit within the allowed length.
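If you generate many prompts, trimming them by hand gets tedious. Here is a minimal sketch of an automatic trimmer. It assumes you know the character limit of the t2i system you use: `max_chars` is a parameter here, not Firefly's documented limit. The function keeps whole sentences when possible. If not even the first sentence fits, it falls back to cutting at a word boundary.

```python
import re


def trim_prompt(prompt: str, max_chars: int) -> str:
    """Shorten a prompt to at most max_chars characters.

    Prefers to keep whole sentences; if not even the first sentence fits,
    falls back to cutting at the last word boundary. max_chars is an
    assumption: check the actual limit of the t2i system you use.
    """
    text = " ".join(prompt.split())  # collapse newlines and repeated spaces
    if len(text) <= max_chars:
        return text

    kept = ""
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        candidate = f"{kept} {sentence}".strip()
        if len(candidate) > max_chars:
            break
        kept = candidate
    if kept:
        return kept

    # No complete sentence fits: drop the partial last word instead.
    return text[: max_chars + 1].rsplit(" ", 1)[0][:max_chars]


print(trim_prompt("A chef cooks. A team eats dinner. Plates pile up.", 35))
# prints: A chef cooks. A team eats dinner.
```

Cutting whole sentences keeps the prompt grammatical, but it blindly drops the end of the description. A manual pass can still do a better job of choosing which details matter.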

A closer look at any of these images will make you understand why nobody uses Firefly:

The quality of this picture can be marginally improved by tweaking the prompt with a series of modifiers that will force the diffusion model to focus on quality and details. And Firefly is really good at taking into account all elements of the prompt. But Adobe’s model can only go so far in its current incarnation.
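Appending modifiers can be automated too, as long as the result still fits the prompt limit. This is a sketch under two assumptions. First, the modifier list is illustrative; which modifiers actually help varies from model to model. Second, `max_chars` again stands in for whatever limit your t2i system enforces. Modifiers are added in order, and any that would overflow the limit are skipped.

```python
from typing import Iterable

# Illustrative modifiers often used to nudge diffusion models toward detail.
# Their effect varies by model; treat this list as a starting assumption.
QUALITY_MODIFIERS = (
    "highly detailed",
    "sharp focus",
    "professional photography",
    "soft natural lighting",
)


def add_modifiers(prompt: str, modifiers: Iterable[str], max_chars: int) -> str:
    """Append comma-separated modifiers in order, skipping any that would exceed max_chars."""
    result = prompt.rstrip().rstrip(".")  # drop the final period before appending
    for modifier in modifiers:
        candidate = f"{result}, {modifier}"
        if len(candidate) <= max_chars:
            result = candidate
    return result


print(add_modifiers("A chef in a busy kitchen.", QUALITY_MODIFIERS, 80))
```

Putting the most important modifiers first means they survive when the budget runs out.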

Out of the box, DreamStudio running SDXL 1.0 doesn’t do much better. In fact, in terms of capturing the elements of the prompt, it does worse:

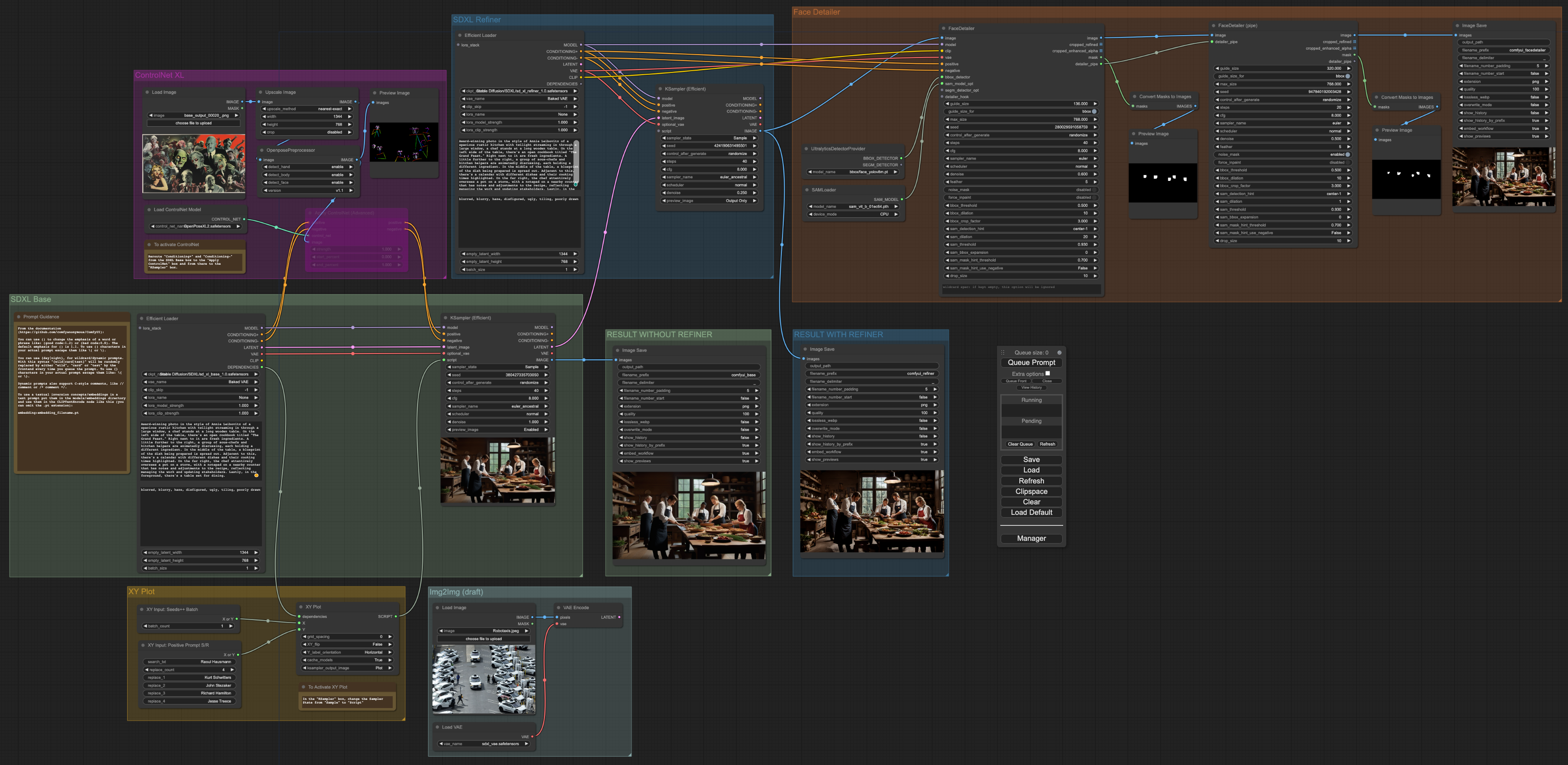

But if you know what you are doing, both in terms of prompting techniques and image processing pipeline, you can get to something that, at minimum, looks like this:

Much more can be done (for one, there are way too many men in this picture), but generating this image already required me to set up the following pipeline:

Yes, it was a non-trivial effort. But once you have a pipeline like this in place, the act of generating the image is a one-button push and a wait, in my case, of 6 minutes (which can be reduced to seconds with the right hardware).

Yes, this is what happens behind the scenes of t2i systems like Midjourney, just at a much bigger scale.

Of course, this approach is unsustainable. Nobody should spend 6 months studying an uber-complicated open source image generation system just to produce the images for a single presentation. At that point, it’s way cheaper and less stressful to hire a designer.

Unless, of course, you are setting up a system like this, inclusive of the LLM component that generates the analogy, to automatically produce the images for all the presentations of your entire company.
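At company scale, much of the orchestration around the models is bookkeeping: walk every slide of every deck, get an image prompt, render it, and file the result. Here is a minimal sketch of that loop, with both model calls left as hypothetical hooks. `write_image_prompt` could be an analogy-then-description LLM chain. `render_image` could be a local SDXL pipeline or a hosted t2i service. The file naming scheme is an illustrative assumption.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SlideImageJob:
    deck: str
    slide_number: int
    slide_text: str
    image_prompt: str


def build_jobs(decks: Dict[str, List[str]],
               write_image_prompt: Callable[[str], str]) -> List[SlideImageJob]:
    """Turn every non-empty slide of every deck into a job that carries its image prompt."""
    jobs: List[SlideImageJob] = []
    for deck, slides in decks.items():
        for number, text in enumerate(slides, start=1):
            if not text.strip():
                continue  # skip slides with no text to illustrate
            jobs.append(SlideImageJob(deck, number, text, write_image_prompt(text)))
    return jobs


def run_jobs(jobs: List[SlideImageJob],
             render_image: Callable[[str], bytes]) -> Dict[str, bytes]:
    """Send each prompt to a t2i backend and key the resulting image by deck and slide."""
    return {
        f"{job.deck}/slide-{job.slide_number:02d}.png": render_image(job.image_prompt)
        for job in jobs
    }


# Usage with stand-ins for the LLM chain and the image generator.
decks = {"q3-review": ["Budget overview", "", "Next steps"]}
jobs = build_jobs(decks, lambda text: f"An image about {text.lower()}")
images = run_jobs(jobs, lambda prompt: prompt.encode())
print(sorted(images))
```

Keeping prompt writing and rendering as separate stages lets a human review or edit the prompts before any GPU time is spent.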

That’s where the AI impact on jobs starts to become evident and, as the digital artist quoted in the Free Edition of this week put it: “It’s efficiency in its final form. The artist is left as a clean-up commando, picking up the trash after a vernissage they once designed the art for.”

But we are digressing.

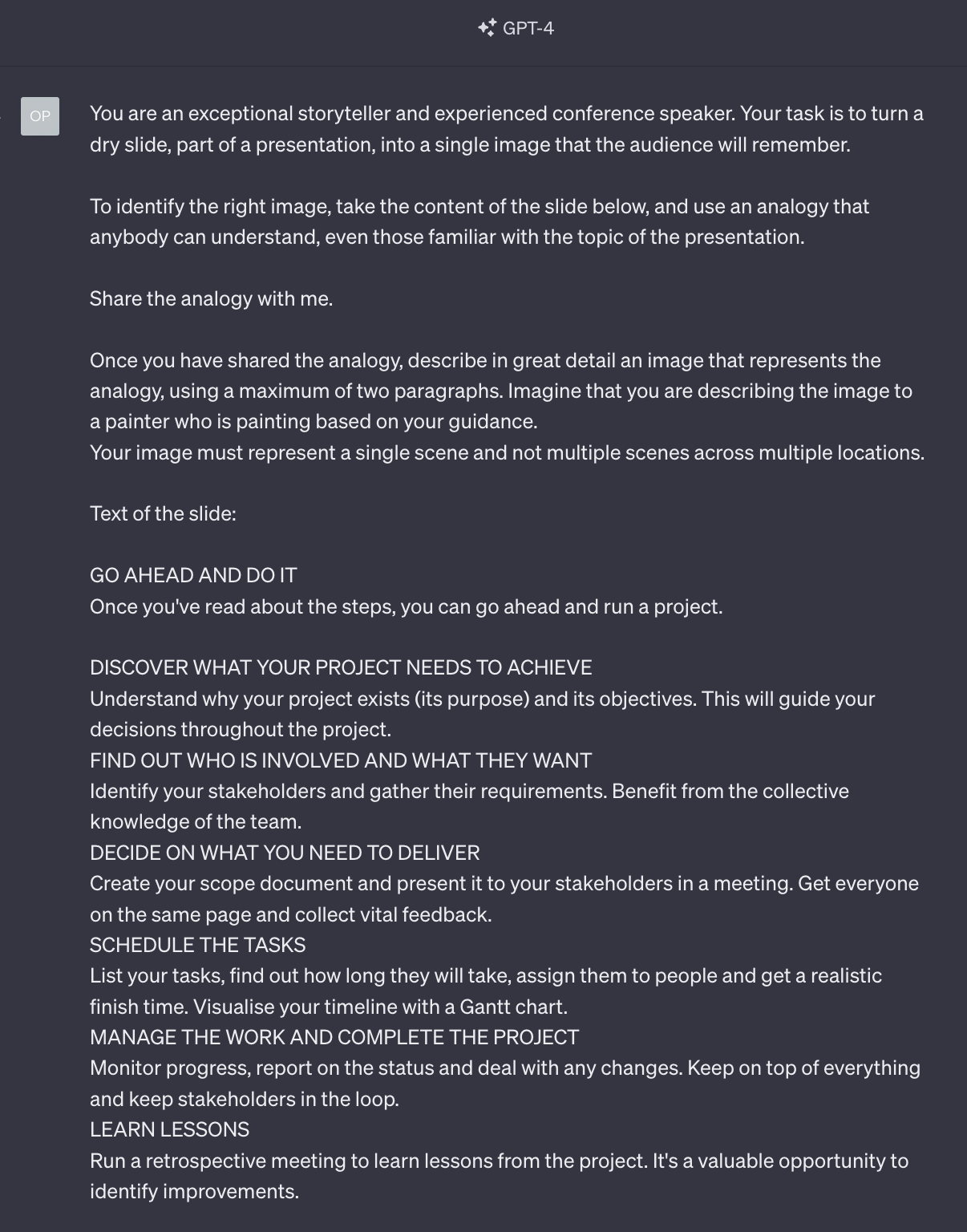

If you need to generate the images of a single presentation, the strongly opinionated approach of Midjourney is the most appealing solution for the large majority of people:

This was generated with Midjourney 5.2 with no modifications to the original prompt generated by GPT-4 and no additional modifiers. We’ll soon see what version 6 is capable of.

The point is not so much what quality we can achieve with a basic prompt and no further tweaking, but how easily we can enhance our presentations with appropriate images.

Happy analogizing.