- What’s AI Doing for Companies Like Mine?

- Learn what Deliveroo, Moody’s, and Amazon are doing with AI.

- A Chart to Look Smart

- A creative application of generative AI produces accurate simulations of disease progression with limited data.

- The Tools of the Trade

- A local ChatGPT that, finally, can be used to chat with multiple documents (yes, Excel spreadsheets, too).

What we talk about here is not what could be, but what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

In the Foodservice industry, Deliveroo founder & CEO, Will Shu, revealed that the company is testing generative AI for both food recommendations and customer service interaction.

From a recent interview with Bloomberg’s Caroline Hyde:

A: …we’ve been definitely utilizing gen AI…We have, in our employee version of the app, a recommendation engine. So you can type “I want a healthy Mexican sort of meal” or “within this caloric range.” It doesn’t always work, if I’m honest…and it comes back with what are increasingly better and better recommendations.

…

I think on the customer care side, we’ve done a lot of really cool things. Gen AI will summarize the last ten interactions with the consumer and then tell the agent “Hey, do we think this consumer’s happy?” and “What’s a summary of how the last few interactions went?” without having to look all the stuff up yourself. I think something like that’s been really, really powerful.

…

We’ve had over 10,000 applications a week for people to work, 10,000 a week to work on our platform. And I think the thing that’s interesting is these workers could get a job anywhere else, right? You could go work at a pub, you could go work at a warehouse, you could go work in hospitality, and they’re choosing to work with us. And to me, that is just quantitative proof that what we’re doing the workers want as opposed to an opinion. And I think that’s something we’re really proud of.

Q: Where I’m currently sat over in America, the big hand-wringing by AI has been jobs. And I’m actually interested that someone from the audience said, Will we see robots deliver our food in London? How much are you thinking about automation when it comes to workers to delivery?

A: I don’t think it’s going to happen for some time. I think, you know, maybe in more rural areas, maybe places with less population density, that could be a thing. But the UK at the end of the day, outside of villages, is a highly densely populated place, as are the major markets we’re in in France, Italy, Hong Kong, Singapore, Dubai, places like that.

So I don’t see that kind of happening anytime soon.

We definitely spent time talking to different robotic players, but it’s kind of been something we’ve been talking about for eight years at this point. So we’ll see what happens.

Regarding the second answer, you should know that, for two years, Deliveroo had a robotic division that was internally developing robotic arms to prepare food. When things started to be difficult for the company, they shut down the division to focus on the core business.

So, despite what the CEO says in the interview, the company has an enormous interest in this space. Just not necessarily focused on the delivery side of the Foodservice industry.

Regarding the transcription above, you should know that Bloomberg’s transcription tech is terrible and I had to correct a huge number of mistakes. I always do. It shouldn’t be this way. OpenAI has released a state-of-the-art, almost flawless, speech-to-text technology as open source, and its performance is exceptional after one year of optimizations by the AI community.

If you publish transcriptions of any sort as part of your company material, don’t be like Bloomberg.
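For reference, here is a minimal sketch of what a local transcription with OpenAI's open-source Whisper could look like in Python. It assumes the `openai-whisper` package and `ffmpeg` are installed. The helper names (`format_timestamp`, `format_segments`, `transcribe_file`) and the file name in the usage comment are hypothetical, chosen only for illustration.

```python
def format_timestamp(seconds: float) -> str:
    """Render a number of seconds as MM:SS for a readable transcript."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


def format_segments(segments) -> str:
    """Turn Whisper-style segments (dicts with 'start' and 'text') into lines."""
    return "\n".join(
        f"[{format_timestamp(seg['start'])}] {seg['text'].strip()}"
        for seg in segments
    )


def transcribe_file(path: str, model_name: str = "base") -> str:
    """Transcribe an audio file locally; needs `pip install openai-whisper`."""
    import whisper  # imported lazily so the helpers above work without it

    model = whisper.load_model(model_name)
    result = model.transcribe(path)  # dict containing "text" and "segments"
    return format_segments(result["segments"])


# Usage (hypothetical file name):
#   print(transcribe_file("interview.mp3"))
```

Larger models than `base` are slower but usually more accurate, so the model name is left as a parameter. A human pass over the output is still advisable before publishing.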

In the Financial Services industry, the credit ratings and research company Moody’s is partnering with Google to test the use of generative AI for financial report analysis and report writing, and to fine-tune its own LLMs for third-party use.

From the official press release:

Powered by Google Cloud’s gen AI platform, Vertex AI, and leveraging Moody’s unique analytical expertise, Moody’s and Google will explore co-creation of fine-tuned LLMs purpose-built for financial professionals, enabling customers to perform faster, deeper analyses of financial reports, disclosures and other materials. For example, customers will be able to interrogate, analyze, and draw decision-ready insight directly from financial disclosures.

…

Moody’s will enable access to its proprietary datasets through BigQuery, Google Cloud’s serverless data warehouse, which helps customers manage, query, and analyze data. This integration will allow customers to combine Moody’s vast databases with their native data assets, and use them in combination with LLMs in Vertex AI.

…

Moody’s will introduce Vertex AI Search to increase efficiencies by automating manual workflows and combining multiple data sets for easier summation, deeper insights, and overall improved productivity.

William Shaw, reporting for Bloomberg, adds more color:

For Moody’s, the software will leave an electronic trail showing where the information it’s citing came from, according to Philip Moyer, vice president of artificial intelligence and business solutions within the cloud business at Alphabet Inc.’s Google. He said that should also prevent the technology from hallucinating.

A bold statement, considering that Google’s PaLM 2 model, underpinning the consumer-grade AI service called Bard, hallucinates so much more than its competitors that it is almost unusable.

More from the article:

Moody’s will also offer the large language models it’s developing to other financial firms, which can also use it to analyze information for rote tasks.

For instance, Moyer said, the software could help a bank employee quickly assess whether the bank should onboard a small business client. That’s because the staffer could instruct the large-language model to find the top three risks the potential client identified in its financial disclosures or write three bullet points summarizing its most recent earnings call or identify five peers with similar carbon footprints.

Within minutes, the bank employee could make a judgment call based on research that would have previously taken hours.

No company in the world is willing to share direct access to its proprietary data. But using a fine-tuned large language model as proxy for that data guarantees a reasonable amount of protection against theft and a source of revenue.

Expect many companies, across multiple industries, to do the same.

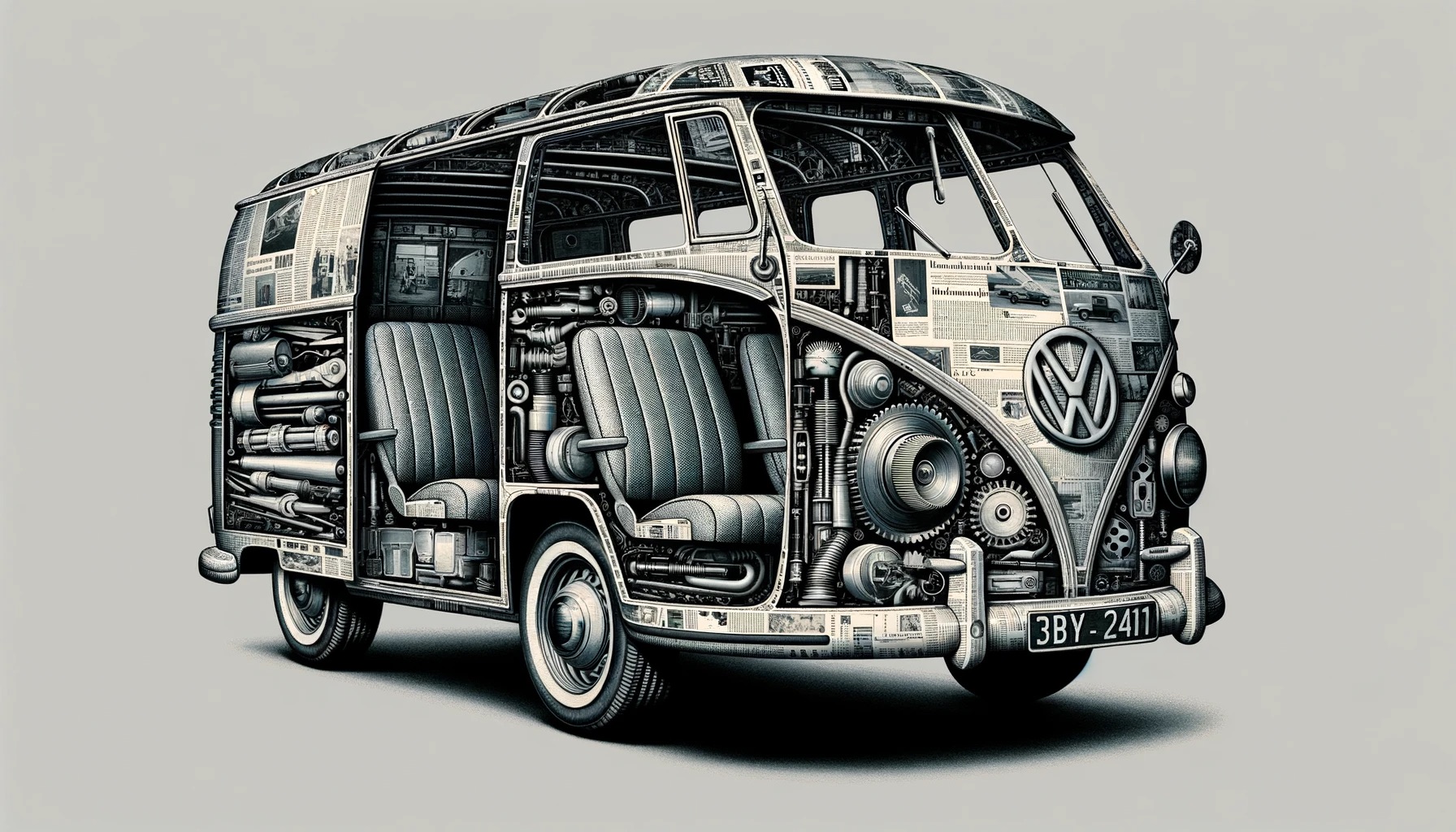

In the E-commerce industry, Amazon has revealed multiple new applications for various types of AI, from powering a new class of robots inside its warehouses to automatically inspecting damage to delivery vans.

Let’s start with the warehouses.

Sebastian Herrera, reporting for The Wall Street Journal:

The revamp will change the way Amazon moves products through its fulfillment centers with new AI-equipped sortation machines and robotic arms. It is also set to alter how many of the company’s vast army of workers do their jobs.

Amazon says its new robotics system, named Sequoia after the giant trees native to California’s Sierra Nevada region, is designed for both speed and safety. Humans are meant to work alongside new machines in a way that should reduce injuries, the company says.

It is unclear how the new system will affect Amazon’s head count, and the company declined to provide details about its expectations except to note that it doesn’t see automation and robotics as vehicles for eliminating jobs.

Sequoia enables the company to put up items for sale on its website faster and be able to more easily predict delivery estimates, said David Guerin, the company’s director of robotic storage technology. The new program reduces the time it takes to fulfill an order by up to 25%, the company said, and it can identify and store inventory up to 75% faster. Amazon launched the system this week at one of its warehouses in Houston.

“The faster we can process inventory, the greater the probability that we’re going to be able to deliver when we said we could,” Guerin said. He said Amazon expects the new system to make up a significant portion of the company’s operations in the next three to five years.

…

In the new structure, vehicles transport products inside tote containers to a new sortation machine equipped with small robotic arms and computer vision. From the sortation machine, the bins are routed to an employee who picks items for delivery. Remaining inventory is consolidated by Sparrow, a robotic arm Amazon unveiled last year.

In the previous system, vehicles moved around Amazon products, but the new sortation machine, tote containers and Sparrow weren’t involved. Previously, employees might have to reach high up on a shelf to pick a heavy item, but now the new system delivers containers around the waist level, with a goal to reduce injuries.

…

Guerin, the robotics storage director, said Amazon’s plans for Sequoia include the same-day sites.

…

Amazon said it would also start to test a bipedal robot named Digit in its operations. Digit, which is designed by Agility Robotics, can move, grasp and handle items, and will initially be used by the company to pick up and move empty tote containers.

We talked about Digit in this week’s Free Edition of Synthetic Work. Go check the videos of those robots there.

Let’s talk about the delivery vans, now.

Jay Moye, writing on the company’s official blog:

Amazon has unveiled a new artificial intelligence (AI)–based technology that can spot even the smallest anomalies in Amazon delivery vans—from tire deformities and undercarriage wear to bent or warped body pieces—before they become on-road problems. The new Automated Vehicle Inspection (AVI) technology offers reassurance to fleet managers who previously had to rely solely on the human eye and manual inspections for daily safety rounds. Amazon is launching the AVI technology in partnership with tech startup UVeye in the U.S., Canada, Germany, and the UK.

…

“The last thing I want is for something preventable to happen—like a tire blowing out because we missed an imperceptible defect during our morning inspection,” said Bennett Hart, an Amazon Delivery Service Partner (DSP) who owns the logistics company Hart Road. “This technology improves the safety of our fleet.”

…

“When you go to the doctor, you expect to see a scan; we kind of do the same thing but for vehicles,” said Amir Hever, UVeye’s CEO.

With the vehicle rolling at 5 mph, the AI system performs a full-vehicle scan in a few seconds, identifies problems, classifies them based on severity, and immediately sends the results to a computer. From there, a DSP can determine the fixes and services they need to perform to have well-maintained vehicles on the road the next day.

…

While the technology was originally invented to scan the underside of vehicles at borders and security checkpoints, it’s now using AI to seek more specific and minute details, such as vehicle damage. AVI relies on machine “stereovision,” meaning that it uses two vantage points to construct a full 3D image, and deep learning, a subset of machine learning in which a layered neural network mimics the learning processes of the human brain.

…

“We couldn’t simply pull this UVeye solution off the shelf and start using it,” Chempananical said. “Recognizing the unique demands of Amazon’s vast fleet of more than 100,000 delivery vans, ranging from custom delivery vans to electric Rivian vans, we worked directly with UVeye to train the AI models and algorithms in accordance with the Roadworthy Guidelines, Amazon’s rigorous standards for keeping the wheels turning safely.”

…

By making inspections faster, more accurate, systematic, and objective, AVI has already found hidden damage patterns. For example, 35% of all issues stem from tires. These issues include sidewall tears and debris and nails lodged in treads, issues not easily picked up by manual inspections previously. With AVI, DSPs will receive notifications to replace tires before they turn into a bigger problem.

In Issue #33 – You’re not blind anymore, we explored how the new vision of ChatGPT, thanks to the new GPT-4V model, could significantly impact the insurance industry, by automating the classification of pictures coming from damaged vehicles.

Amazon’s approach is different but it might have a big impact on the insurance industry, as well.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

So far, I’ve seen three very impressive examples of out-of-the-box thinking applied to generative AI for extraordinary applications.

One is the training of AI models with spectrograms of music to generate new music. That is the famous Riffusion project.

The second is the training of AI models with fMRI scans, to convert thoughts into images. That is the MindEye research of the prodigy CEO of MedARC, Tanishq Mathew Abraham.

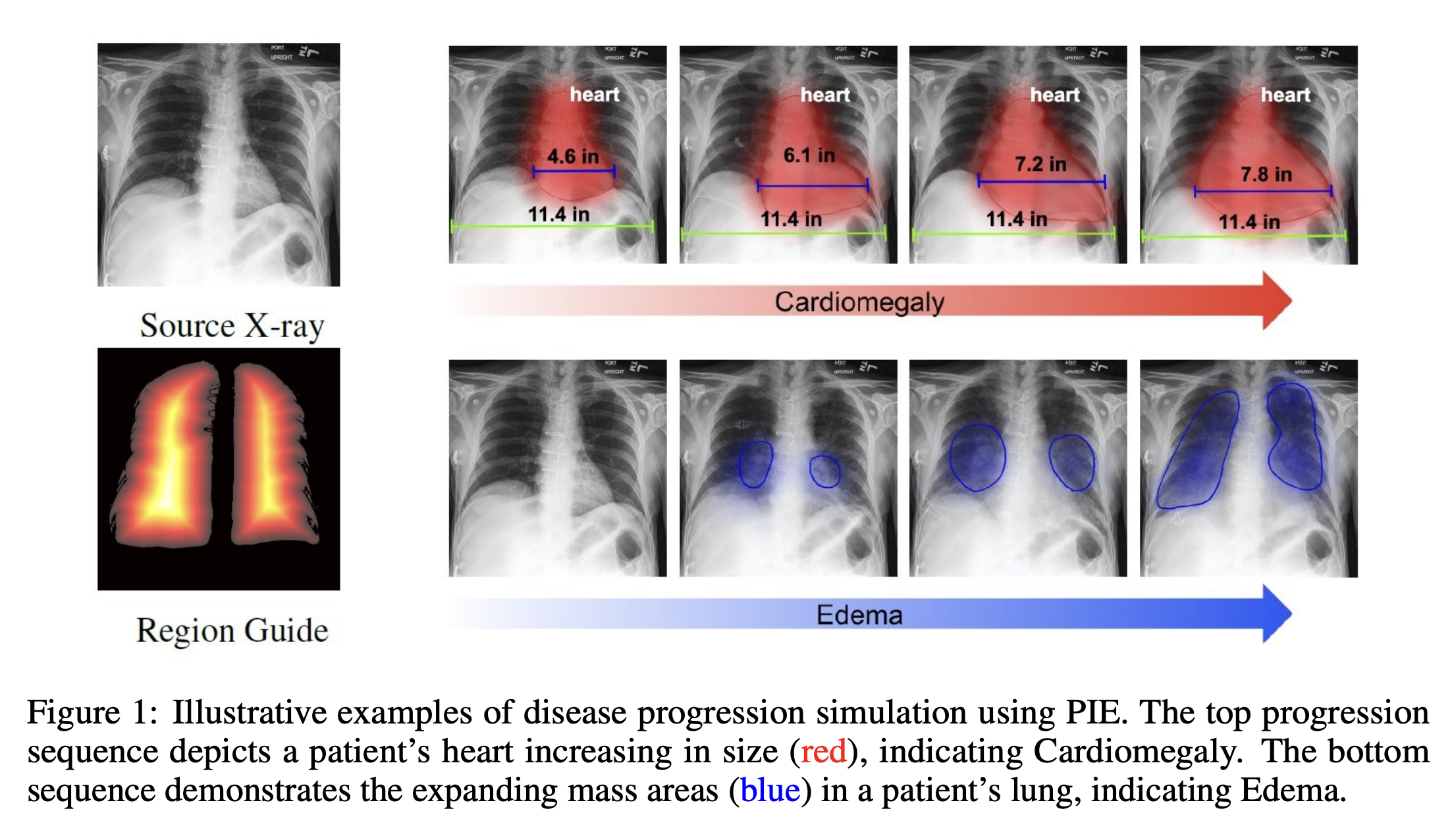

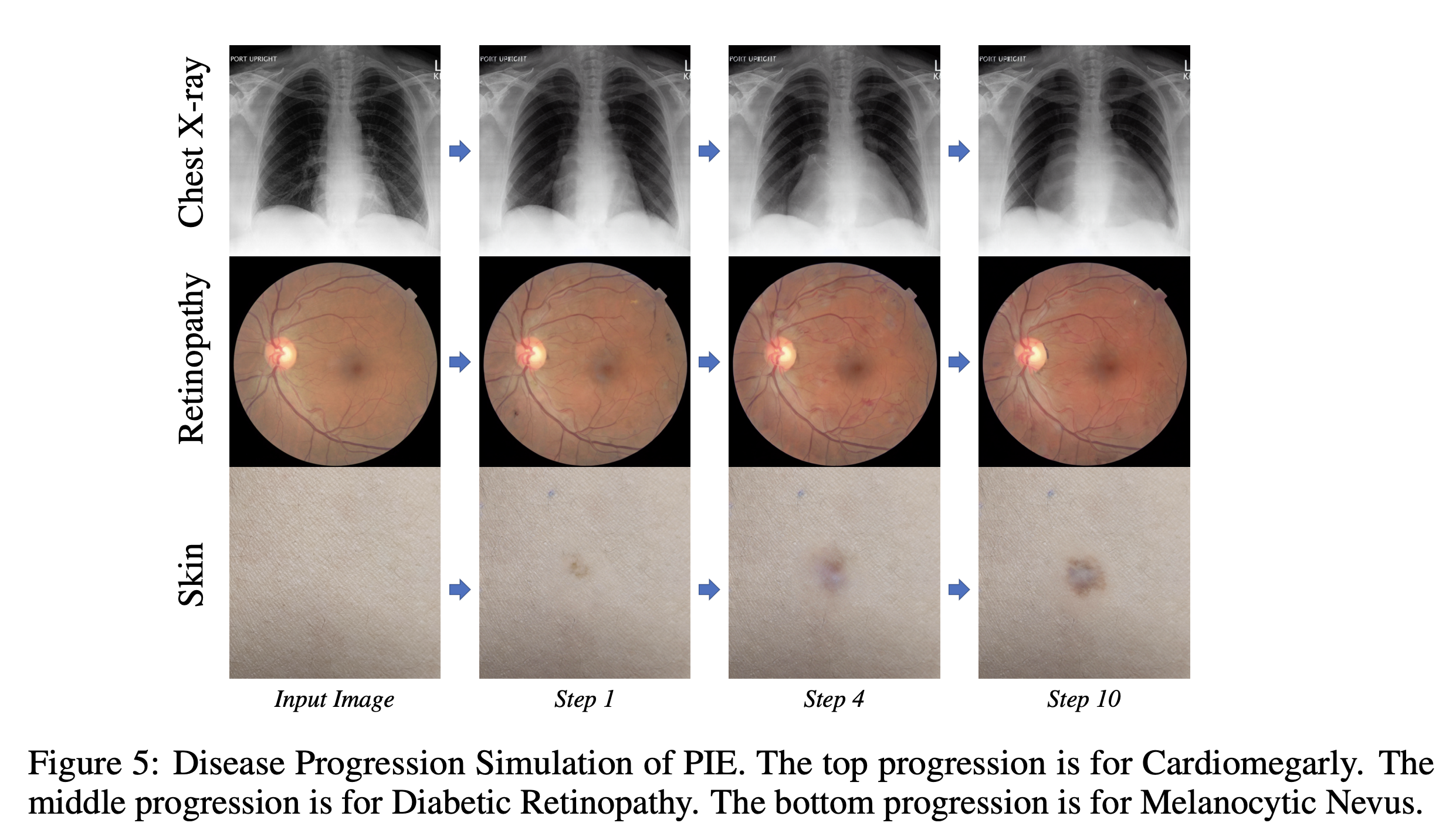

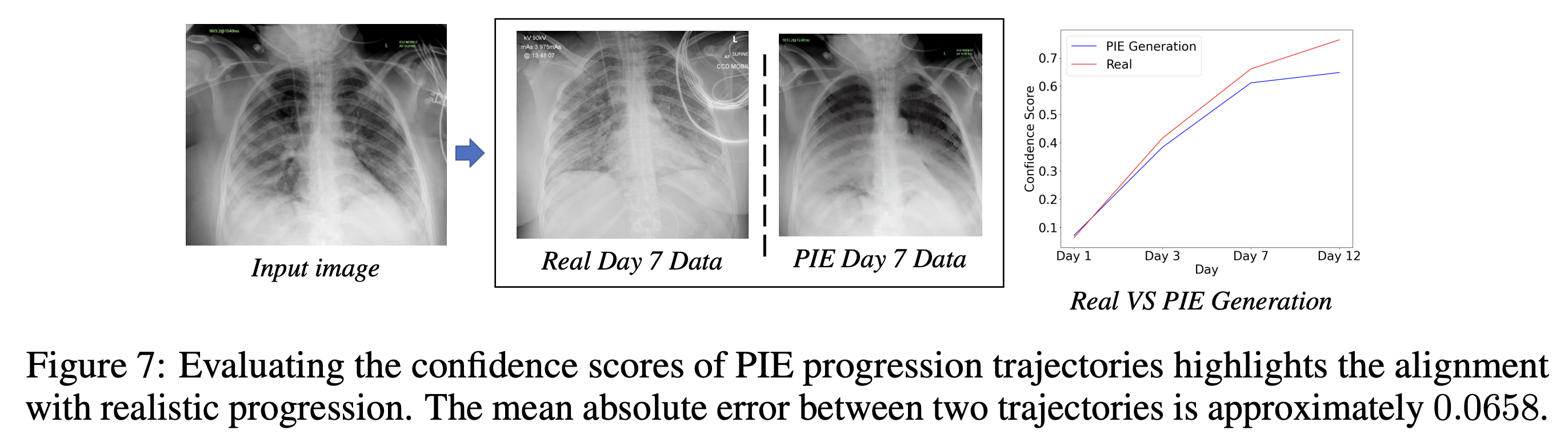

The third one is called PIE, a technique to simulate disease progression that could transform diagnosis and treatment in the Health Care industry.

Coincidentally (?), the people behind all three projects are employed by or gravitating around Stability AI.

First, let’s use the paper to understand the problem:

Disease progression refers to how an illness develops over time in an individual. By studying the progression of diseases, healthcare professionals can create effective treatment strategies and interventions. It allows them to predict the disease’s course, identify possible complications, and adjust treatment plans accordingly. Furthermore, monitoring disease progression allows healthcare providers to assess the efficacy of treatments, measure the impact of interventions, and make informed decisions about patient care. A comprehensive understanding of disease progression is essential for improving patient outcomes, advancing medical knowledge, and finding innovative approaches to prevent and treat diseases.

However, disease progression modeling in the imaging space poses a formidable challenge primarily due to the lack of continuous monitoring of individual patients over time and the high cost to collect such longitudinal data. The intricate and multifaceted dynamics of disease progression, combined with the lack of comprehensive and continuous image data of individual patients, result in the absence of established methodologies.

Moreover, disease progression exhibits significant variability and heterogeneity across patients and disease sub-types, rendering a uniform approach impracticable.

Past disease progression simulation research has limitations in terms of its ability to incorporate clinical textual information, generate individualized predictions based on individualized conditions, and utilize non-longitudinal data. This highlights the need for more advanced and flexible simulation frameworks to accurately capture the complex and dynamic nature of disease progression in imaging data.

With this in mind, the researchers have demonstrated that we don’t need to continuously monitor the progression of a disease to accurately simulate its progression.

All it takes is a single image, taken at the beginning or in the middle stage of the disease, accompanied by a short clinical report of the patient’s condition.

These two artifacts are then processed via a generative AI model, thanks to a special pipeline, which spits out a series of images that simulate the progression of the disease.

Hard to believe, but various tests, against real data about the progression of a disease and against other predictive methods, have shown that the technique is significantly more accurate.

The researchers also asked the opinion of veteran practitioners:

To further assess the quality of our generated images, we surveyed 35 physicians and radiologists with 14.4 years of experience on average to answer a questionnaire on chest X-rays. The questionnaire includes disease classifications on the generated and real X-ray images and evaluations of the realism of generated disease progression sequences of Cardiomegaly, Edema, and Pleural Effusion.

…

The participating physicians have agreed with a probability of 76.2% that the simulated progressions on the targeted diseases fit their expectations.

I’ll let you read the more technical details of their technique in the paper.

You might think that these futuristic applications are still far away from being used in the real world, but Riffusion, which we mentioned at the beginning of this section, just secured a $17 million seed round of funding to bring its technology to market.

And that’s in a market that is actively hostile to AI-generated music.

The Health Care industry, instead, is embracing AI-generated solutions with open arms. The world is eager to transform the way we do medicine.

Since OpenAI released its model Code Interpreter, now renamed Advanced Data Analysis (ADA?), we have had the possibility to upload and analyze multiple files at once.

That’s wonderful, except that OpenAI is still not clear enough about its privacy policy and its security architecture for us to trust it 100% with sensitive data.

As we wrote in past issues of Synthetic Work, some organizations have inked a deal with OpenAI to have an on-premises deployment of ChatGPT for a minimum guaranteed spending of $100,000 per year. If you are not one of those organizations, uploading sensitive information inside ChatGPT is not recommended.

On top of that, I highly discourage you from uploading any sensitive document on any third-party service that acts as a wrapper for OpenAI’s API, no matter how exceptional they promise to be.

To address this issue, the AI community has worked incessantly to create a host of open alternatives that you could use to analyze multiple sensitive documents.

In Issue #30 – AI is the Operating System of the Future, we saw one of these solutions, called Open Interpreter.

The problem with Open Interpreter is that, besides a very rudimentary interface, it makes a lot of calls to OpenAI’s API, turning your document analysis into a very expensive exercise.

Since we tested it in September, the project extended its support to local large language models like LLaMA 2 and Falcon 180B, but this scenario is still poorly supported on platforms other than Windows and Nvidia.

Today we look at a much better alternative that works on any OS and has a much better user interface.

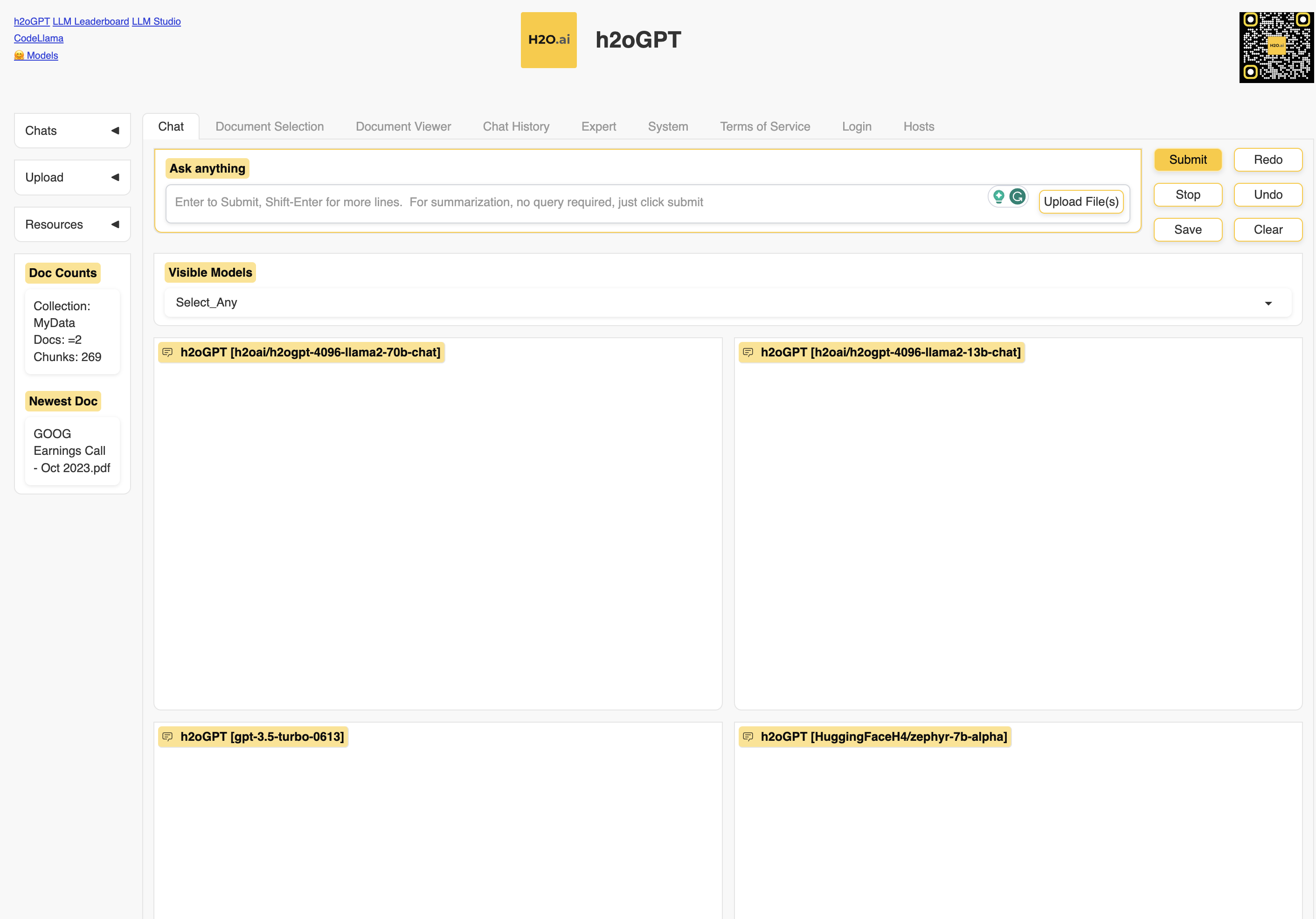

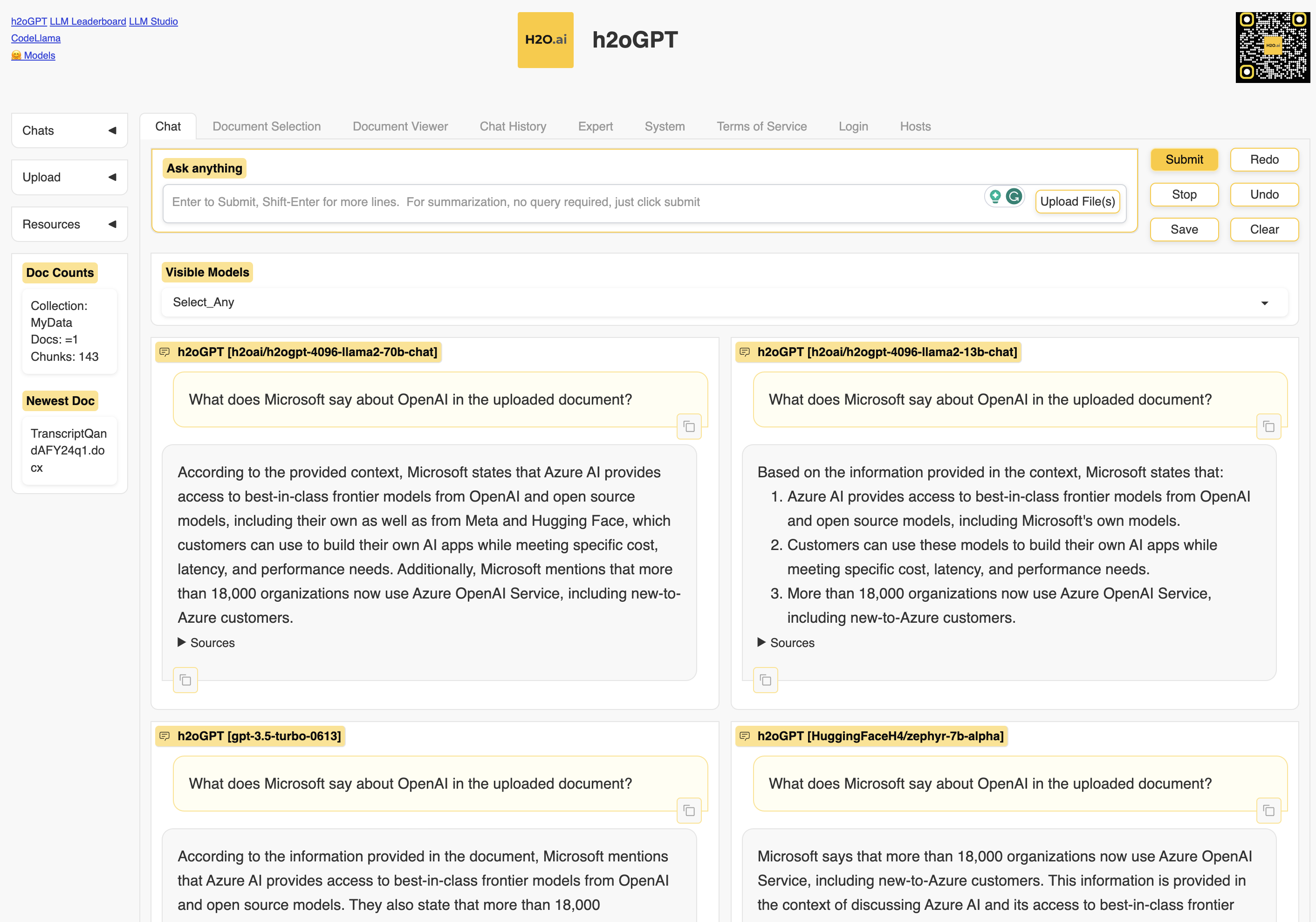

The project, called h2oGPT, is developed by the AI startup h2o.ai. It’s truly open source, released under an Apache 2.0 license, which means that any company can use it internally or for commercial applications without restrictions.

We don’t have to go through the technicalities of the installation, as h2o.ai gracefully set up an online demo:

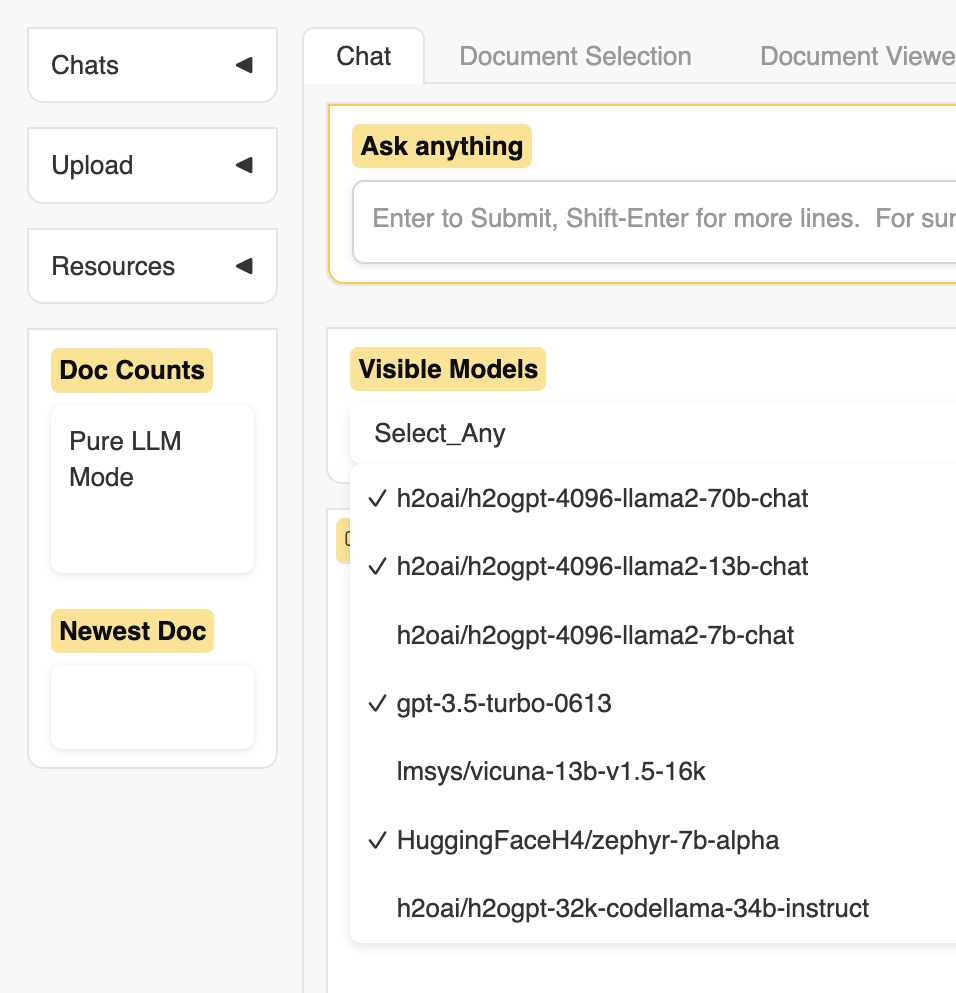

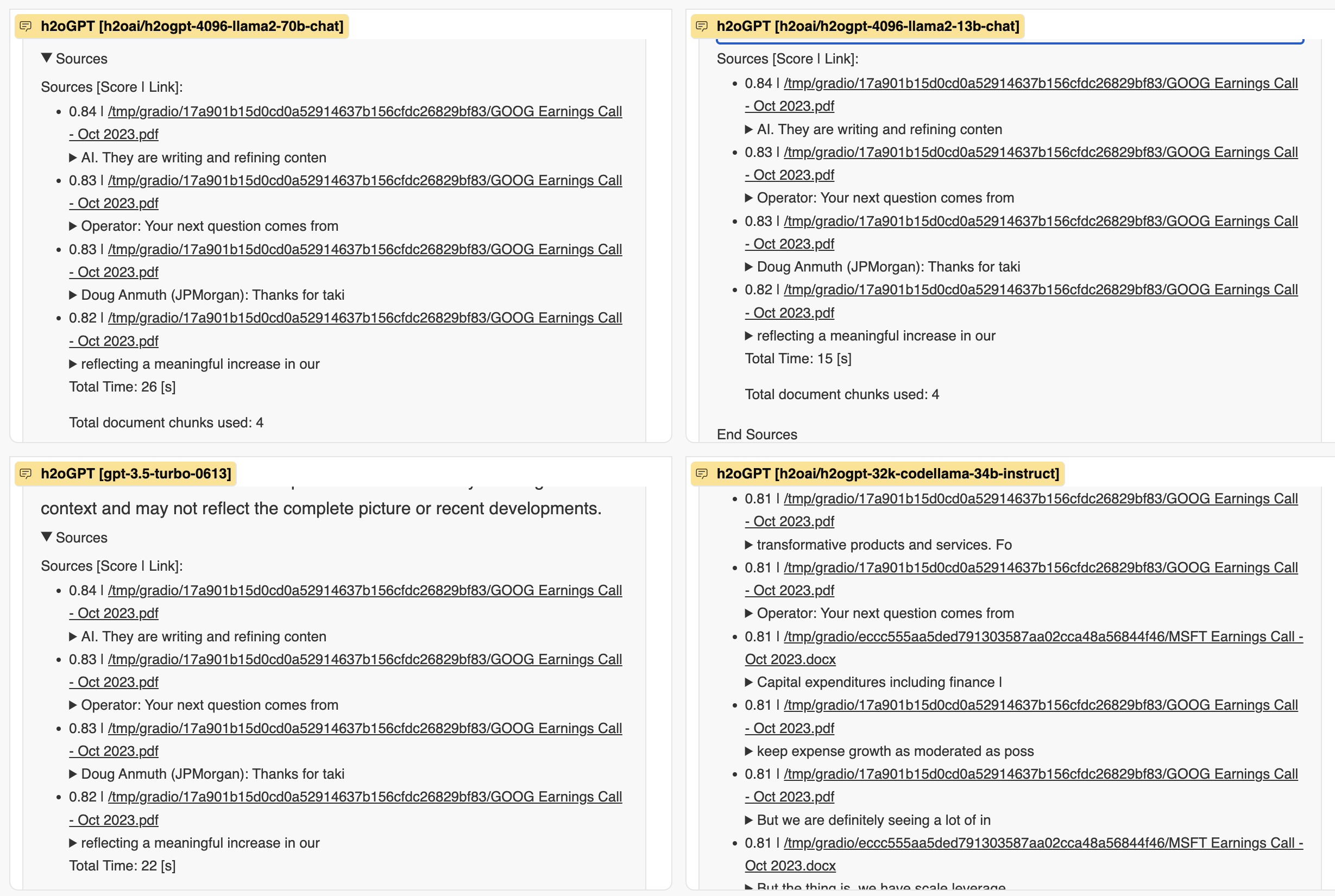

As you can see, in a very clever way, the tool can be configured to compare the answers of multiple LLMs at the same time. In this particular demo, h2o.ai selected the four following models:

- A version of LLaMA 70B fine-tuned by the company

- A version of LLaMA 13B fine-tuned by the company

- OpenAI GPT-3.5-Turbo

- A small(ish) multi-language model called Zephyr 7B

In the demo, you can swap any of these four models with others that are preconfigured for the demo, but in your local installation, you can install any model you want and connect to centralized models like GPT-4 served either by OpenAI or by Microsoft Azure.

For example, I’m much more interested in testing the answers of the open model CodeLLaMA with a context window of 32K tokens.

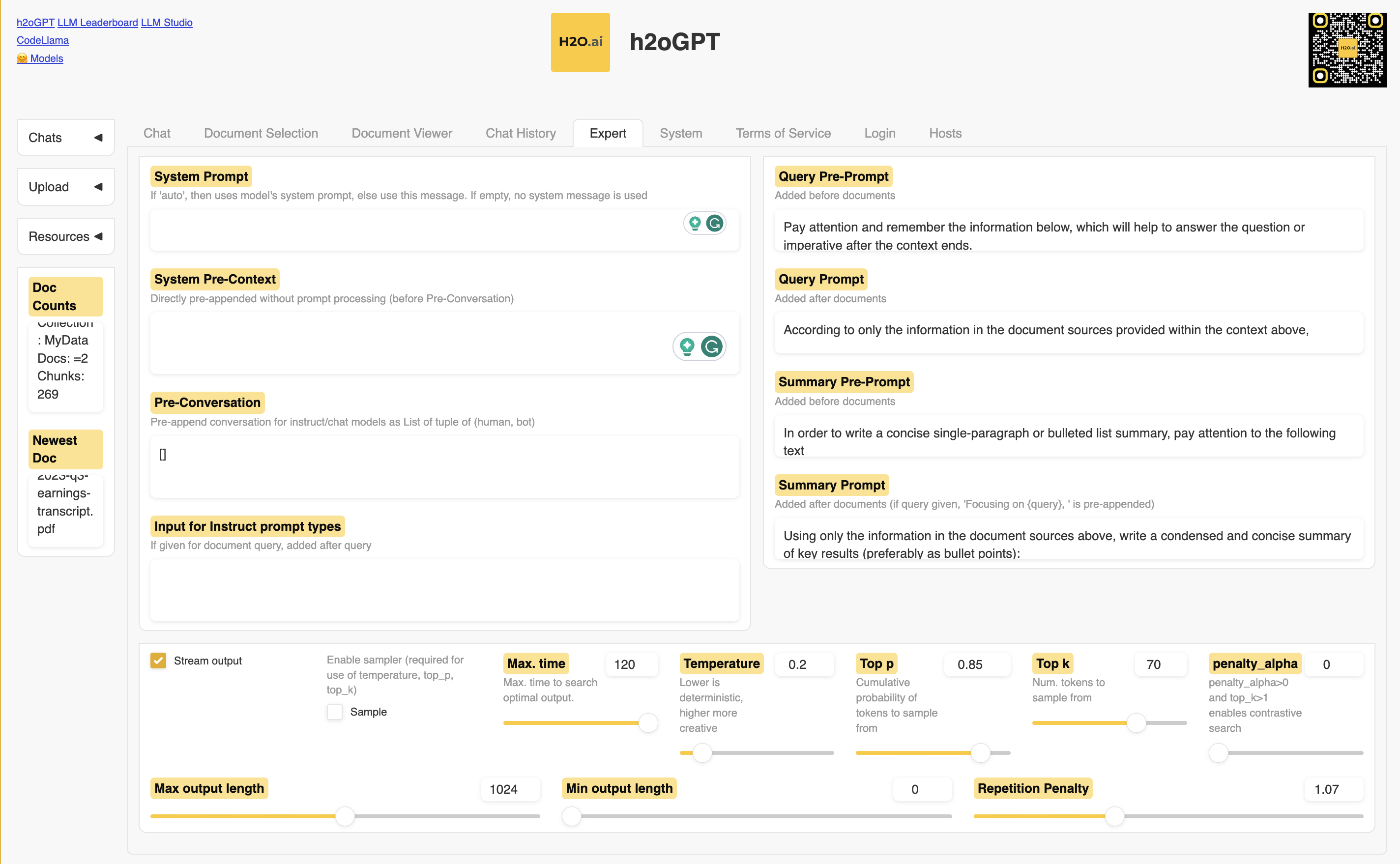

The tool is very powerful, allowing you to define multiple types of system prompts (what OpenAI calls Custom Instructions), to condition the model in different scenarios.

You can even define the maximum length of the answers, and the so-called temperature of the model, which is a way to control how creative the answers are.
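To make the temperature setting less abstract, here is a minimal sketch of the standard idea behind it: the model's raw scores for candidate next words are divided by the temperature before being turned into probabilities. The scores below are made up for illustration, and this is not h2oGPT's own code.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores a model might assign to three candidate next words.
logits = [2.0, 1.0, 0.5]

cold = softmax_with_temperature(logits, temperature=0.2)
hot = softmax_with_temperature(logits, temperature=2.0)
```

With a low temperature (`cold`), almost all the probability lands on the top-scoring word, so answers become more predictable. With a high temperature (`hot`), the probabilities flatten and less likely words get picked more often, which is what makes answers feel more creative.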

However, the most powerful capability is its support for a wide range of documents, including PDFs, Excel and Word files, HTML, and even images.

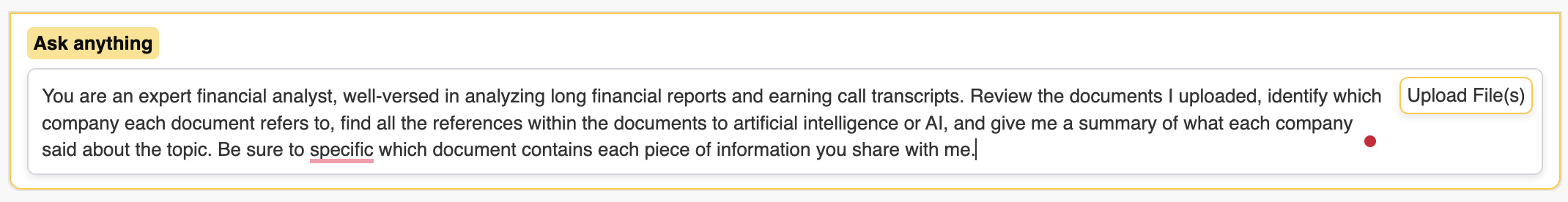

All we have to do is write our prompt as we would in any other LLM and upload one or more files that will be used as context.

Good, but what matters is whether the tool is truly capable of performing a full analysis across multiple documents in different formats.

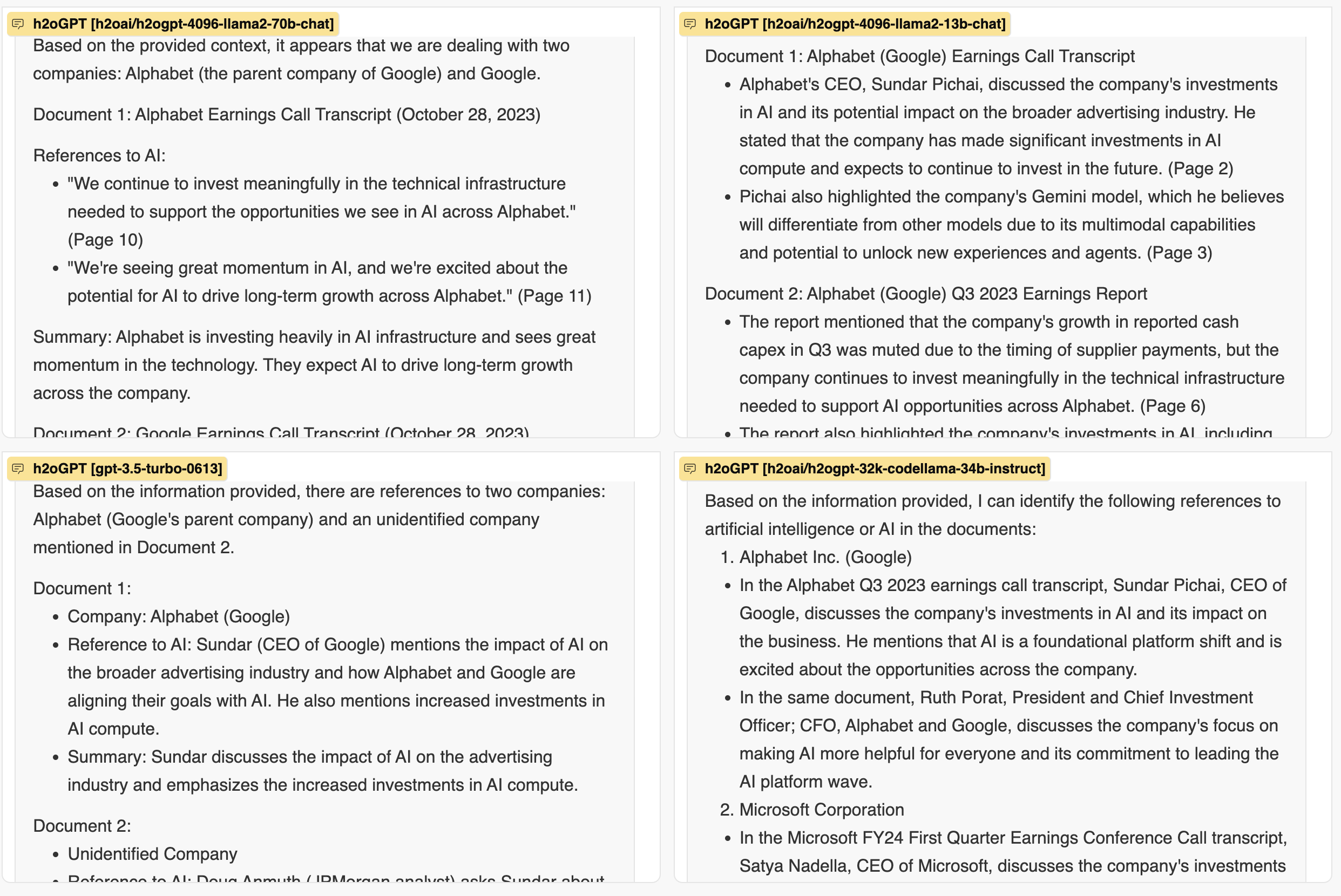

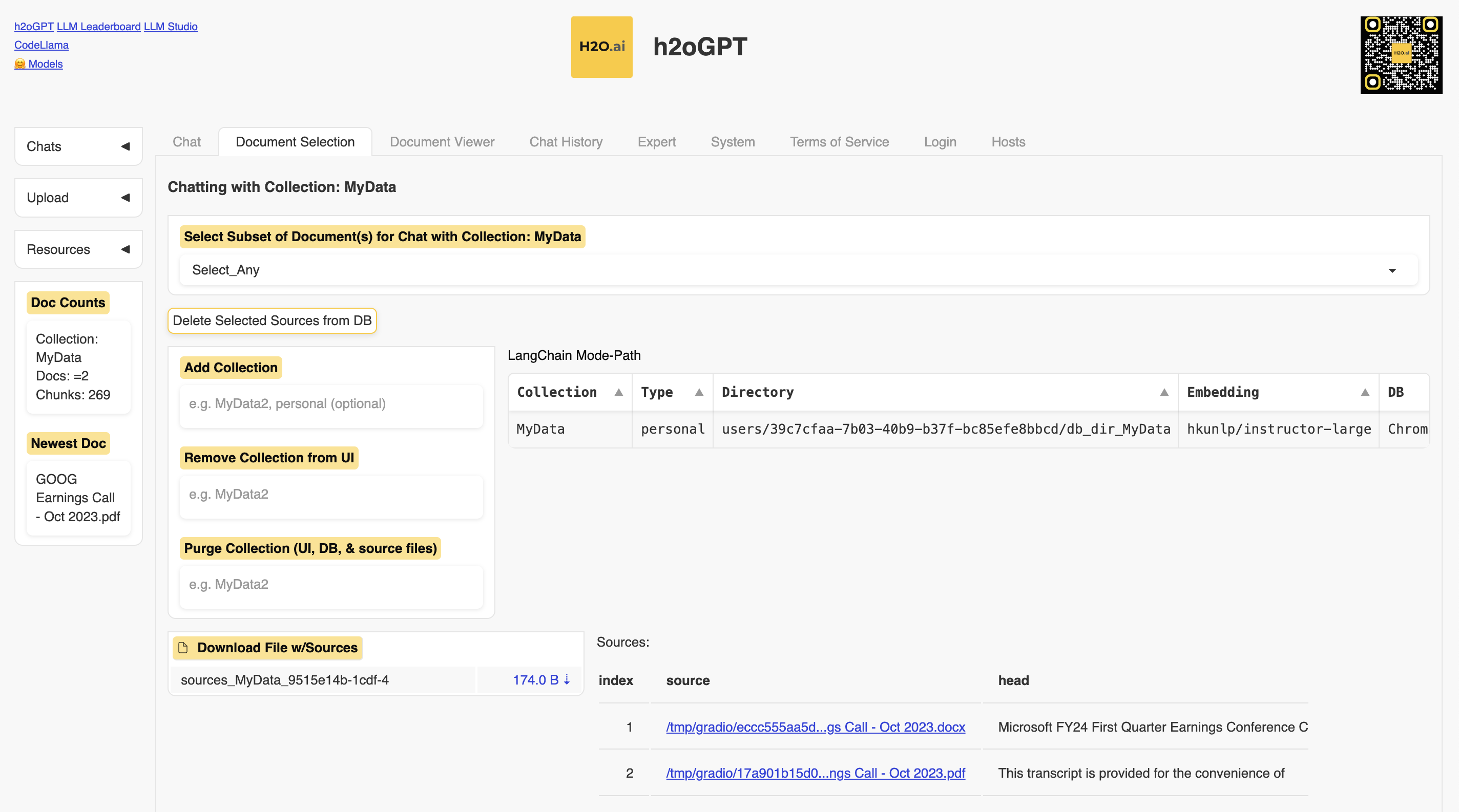

To test that, let’s upload the earnings call transcripts of both Google and Microsoft, which took place earlier this week. The former is in PDF format, while the latter is in DOCX format:

As you can see, only the CodeLLaMA model with the extended context window was capable of differentiating the two documents.

A remarkable capability of h2oGPT is that it allows you to see all the sources of the answer the LLM provided, even without an explicit prompt request, also showing you the degree of confidence of the model in the answer.

As you can see from the source list, the first three models didn’t even look into the second document. Perhaps, their very limited 4K token context window didn’t allow them to do so. I’ll have to experiment more with the tool to understand better what’s happening in this scenario.
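The hypothesis above can be illustrated with a toy sketch. It is not how h2oGPT actually selects context, which I haven't verified: it simply assumes that document chunks are added in order until a token budget is exhausted, and it counts tokens crudely as whitespace-separated words. All names, file names, and chunk sizes are hypothetical.

```python
def count_tokens(text: str) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return len(text.split())


def pack_context(chunks, budget: int):
    """Greedily add (source, text) chunks in order until the budget is spent."""
    selected, used = [], 0
    for source, text in chunks:
        cost = count_tokens(text)
        if used + cost > budget:
            break  # nothing after this point reaches the model
        selected.append((source, text))
        used += cost
    return selected


# Hypothetical chunks: three from the first document, then two from the second.
chunks = [
    ("google.pdf", "word " * 1500),
    ("google.pdf", "word " * 1500),
    ("google.pdf", "word " * 1500),
    ("microsoft.docx", "word " * 1500),
    ("microsoft.docx", "word " * 1500),
]

small = {source for source, _ in pack_context(chunks, budget=4_000)}
large = {source for source, _ in pack_context(chunks, budget=32_000)}
```

Under these assumptions, the 4K budget is used up by the first document alone, so `small` contains only one source, while the 32K budget leaves room for both.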

Another remarkable capability of h2oGPT is the organization of the documents you have uploaded.

First, you can organize the documents in collections, which define the context given to the LLM to find its answers. You can keep all documents in a single collection or segment the documents into multiple collections to perform a more granular analysis.

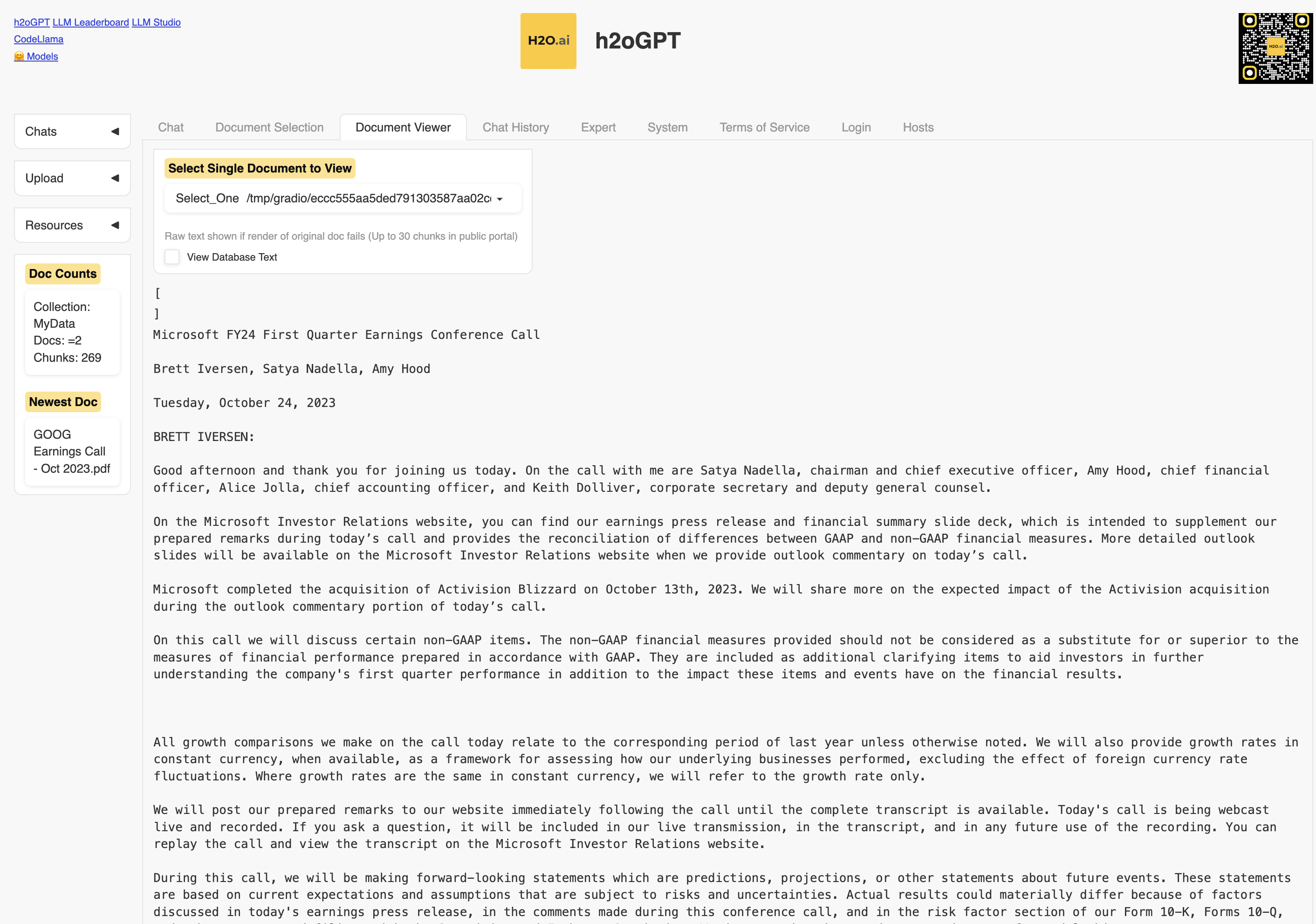

Second, you can see the content of each document irrespective of its original format:

Lastly, you can export and re-import your chats with h2oGPT, which is a very useful feature to further analyze the interactions with the LLMs or to publish them in a content management system or website.

h2o.ai enhanced the handling of the documents with a few optimization techniques to improve the quality of the answers, and the tool can do a lot more than we have the time to see here.

Yet, what we have seen so far is enough to understand its potential. Truly remarkable and highly recommended if you are looking for a quick way to build an internal generative AI solution without depending on OpenAI models.