- Intro

- How to look at what happened with OpenAI, and what questions to answer next if you lead a company.

- What’s AI Doing for Companies Like Mine?

- Learn what Koch Engineered Solutions, Ednovate, and the Israel Defense Forces (IDF) are doing with AI.

- A Chart to Look Smart

- OpenAI GPT-4-Turbo vs Anthropic Claude 2.1. Which one is more reliable when analyzing long documents?

Today, by popular demand, we talk about the OpenAI drama that unfolded over the last two weeks. There’s no value in offering you the 700th summary of everything that happened. In fact, if you don’t have all the details of what happened, I suggest you stop reading this newsletter, go check one of those summaries, and then come back here for what follows.

Where there’s more value, in my opinion, is in offering you a different perspective to use to look at what happened.

Let’s start.

The most important thing to say is this:

Nothing, absolutely nothing, of what has been said, matters. Except for one thing. Just one thing. On which public attention has not focused at all. The thing on which everything else depends.

And the thing that almost no one has focused on, to my great surprise, is this:

What did OpenAI’s board see to justify the abrupt dismissal of its CEO, Sam Altman?

What is it that the board members saw to justify the decision to inform the CEO of Microsoft only 60 seconds before releasing a press release?

The public immediately labeled OpenAI’s board as a bunch of incompetents, and so this crucial question was first sidelined, and then forgotten. And this is a very serious mistake in analyzing a company’s strategy.

I repeat the question:

What did the OpenAI board see to justify the abrupt dismissal of the CEO and, despite this, categorically refuse to explain the reasons for the decision to the company’s employees, to Microsoft, to partners, and to customers?

The board members had a balance scale in front of them. Here’s what was on one plate of that scale.

First element on the plate of the balance scale in front of the board

The long-planned, imminent secondary sale of shares held by OpenAI employees, estimated at $86 billion, is almost certainly canceled.

It’s not so much the absolute value that the board risked destroying, but the fact that many of the employees working for OpenAI have been there for years also in the hope of getting rich and having a better life. Once that possibility had been eliminated, there would have been much less motivation to stay in the company, and the employees would have harbored boundless resentment towards the board and any executive who facilitated the decision. Who, in this case, is the Chief Scientist of OpenAI.

So, don’t focus too much on a situation where $86 billion went up in smoke instead of into the pockets of the employees. Rather, focus on a situation where all the employees of an R&D company are furious with the head of research for having precluded them from the possibility of becoming rich or at least well-off. What chance does an R&D company have of surviving in such a climate?

Second element on the plate of the balance scale in front of the board

Microsoft, which has committed to investing $10 billion in OpenAI, suddenly finds itself with a company without its charismatic leader, in revolt against its head of research, against the board and, most certainly, against any new CEO the board decides to hire. And, in all this, Microsoft, who didn’t even have the chance to say a word, still has to pay most of the investment into OpenAI’s coffers.

So, don’t focus too much on a situation where the primary investor of an R&D company made a fool of themselves and is left with the ghost of the company they invested in. Rather, focus on a situation where the primary investor has a thousand ways to delay the release of the promised funds, or perhaps even cancel the agreement legally, reducing to zero the company’s chance of obtaining the enormous computational resources needed for the development of new AI models. Effectively, hibernating the future of the company until it appropriately returns on the investment made up to that point.

Third element on the plate of the balance scale in front of the board

Altman, just fired, potentially creates a new company in just a weekend and, within 9-12 months, is able to release AI models competitive with OpenAI ones, while OpenAI slows down or completely stops the development of new technology.

So, don’t focus too much on a situation where the powerful and influential Altman sets up a formidable competitor in less than a year. Rather, focus on a situation where the vast majority of OpenAI employees, who hate the head of research, the board, and the future CEO, leave the company en masse to join their former boss, having no more economic incentive to stay, and no legal constraints according to the laws of California.

Fourth element on the plate of the balance scale in front of the board

The hundreds of enterprise clients which have signed multi-year binding agreements with OpenAI for the provision of increasingly competitive AI models over the years, find themselves trapped in a partnership with a company that will inevitably be drained of the talent necessary for research, and the financial and computational resources to conduct that research.

So, don’t focus too much on a situation where a top-tier enterprise organization, like Citadel in the Financial Services industry, or Allen & Overy in the Legal industry, suddenly finds itself paying for technology that potentially will never evolve beyond what GPT-4-Turbo does today. Rather, focus on a situation where that same organization, trapped in the partnership with OpenAI, sees the new startup created by Altman producing new, more competitive and high-performing AI models adopted en masse by its competitors.

Fifth element on the plate of the balance scale in front of the board

The thousands of startups around the world that have built and are building their products on OpenAI technology suddenly find themselves with a technological foundation that is most likely doomed to become obsolete.

So, don’t focus too much on a situation where this ocean of startups abandons OpenAI in droves because it is forced to scale down its expectations in terms of functionalities, accuracy, and release speed. Rather, focus on a situation where this ocean of startups, by abandoning OpenAI, effectively disintegrates the ecosystem that makes OpenAI’s artificial intelligence omnipresent, embedded in a myriad of different software available to clients around the world.

I could go on.

There are other elements on that balance scale, but these five are the most important. And each one is very, very heavy.

On the other plate of the balance scale is what the board saw before firing Sam Altman.

What is on that plate?

This is the only question that matters.

The OpenAI board had very clear in front of them the elements on the first plate of the scale. And yet, despite the magnitude of the destruction that the elements on that plate would have caused, they still chose to go ahead with the dismissal of the CEO.

What is on the other plate that is riskier than the five elements (and many others) we have talked about so far?

To this question, public opinion responded in a second, without thinking too much:

“There is nothing on the other plate. Simply, the board is composed of a bunch of incompetent people with little experience who acted impulsively.”

This is a rather arrogant and presumptuous stance that does not take into account at least four critical considerations.

Consideration 1

OpenAI’s board was chosen by Altman. Therefore, the public must decide whether Altman is a genius or not. It’s too easy to selectively decide that Altman is a genius when it comes to choosing and convincing the best people in the world to develop the greatest invention in human history, but he’s inept when it comes to choosing the board members that oversee that invention. It’s possible, but improbable compared to the alternatives.

Consideration 2

The public has treated the board’s actions as impulsive, but if you have worked as an executive in a large company, as I have for over a decade, you know that there is nothing impulsive in the release of a press announcement. On the contrary, the process is laborious, involves multiple people, and requires careful planning. The announcement of Altman’s dismissal, released 60 seconds after informing the CEO of Microsoft, does not sound at all like an impulsive act, but more like a premeditated action to leave no room for maneuver to a powerful and influential investor like Microsoft.

Consideration 3

If the board had simply been incompetent, it would not have categorically refused to disclose the reasons for its decision even after all the employees asked for explanations in the all-hands meeting that followed Altman’s departure. The board members would not have remained absolutely silent, leaving nothing in writing, even after the entire IT industry loudly called for the board to step down. Incompetents can make clumsy choices, but they don’t protect the reasons for a drastic choice at all costs, knowing full well that it will cost them their career forever.

Consideration 4

Board members usually are seasoned experts in industry or academia, but they are often called to collectively decide on something that goes well beyond their domain of expertise. To do so, they consult with subject matter experts and perform due diligence to the best of their abilities, legally duty-bound by their mandate. Whatever was on the other plate of the balance scale was presented to the board by subject matter experts, who expressed their opinions and answered more or less honestly the questions of the board.

When the board fired Altman, the opinions and answers of the subject matter experts were most certainly taken into consideration.

With these four considerations in mind (but even without them), and knowing full well that the second plate on the balance scale contains an unknown element, it’s rather superficial to accuse the board of incompetence.

Quite the opposite, this asymmetry of information between the board and the public is the clue that should have prompted a more cautious and in-depth scrutiny of the events. And yet it was not so.

This asymmetry of information should have allowed the public, at the very least, to contemplate alternative realities where the board was not incompetent, but ready for an extreme sacrifice (justified or not is an entirely different matter).

If public attention had contemplated alternative realities, it would have paid more attention to the cryptic comments made by Altman during a series of public events days before being fired.

Four times now in the history of OpenAI, the most recent time was just in the last couple weeks, I’ve gotten to be in the room, when we sort of push the veil of ignorance back and the frontier of discovery forward, and getting to do that is the professional honor of a lifetime.

If public attention had contemplated alternative realities, it would have put in a very different context Altman’s response to the last question he received in the video below, published just two days before being fired:

What is the thing that the board saw, hidden behind the veil of ignorance, that led its members to sacrifice the entire company and their professional careers?

Reuters is one of the very few that contemplated alternative realities, leading them to report about Q*.

But the report of a new technical breakthrough is not enough to justify the board’s decision.

To justify the board’s decision, such a report must come with a demonstration, or an impossible-to-ignore opinion, issued by subject matter experts, that the technical breakthrough has very tangible, planetary-scale implications in the real world.

So, what exactly is on the other plate of the balance scale that is enabled by Q* and justified the board’s decision?

What does Q* do to scare the chief scientist of OpenAI to the point that he backed the board’s decision to fire Altman?

As you seek the answer to the only question that matters, as a side quest, also ask yourself why OpenAI has registered the trademarks for GPT-5, GPT-6, and GPT-7, but not beyond.

Assuming that each new model is released every two years, what does OpenAI estimate will happen by 2030? Why not also register GPT-8 and beyond?

Is there a 5-year bomb ticking on the head of every private and public company in the world, before the economy that we know today is completely disrupted?

And if so, no matter how slim the probability, what are you doing to prepare your company for that eventuality?

Let’s start asking ourselves more difficult questions.

Alessandro

What we talk about here is not what could be, but what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

In the Oil & Gas industry, Koch Engineered Solutions is using AI to automatically populate requests for quotes (RFQs) for the procurement of equipment and services.

Remko Van Hoek and Mary Lacity, reporting for Harvard Business Review:

Subsidiaries of Koch Industries, one of America’s largest privately-held conglomerates are leveraging an AI tool designed by Arkestro to optimize its supplier base. Unlike traditional procurement methods that rely on managing suppliers based on high-level purchasing categories and aggregate spend, this AI tool delves into granular data, right down to the stock-keeping unit (SKU). It generates supply options, often among existing suppliers, thereby reducing the need for drawn-out requests for quotes.

The tool achieves this level of detail by ingesting comprehensive data sets, including existing supplier information, purchase orders, invoices, and even unsuccessful quotes from previous procurement cycles. This offers a nuanced view of qualified suppliers, allowing companies to identify backup suppliers across various categories.

The AI algorithm uses this historical data to automatically populate new requests for quotes (RFQs) with essential parameters like lead times, geographic locations, quantities, service-level agreements, and material costs. It then emails the RFQ to the respective supplier for review. A one-click submission is all it takes if the supplier agrees with the AI-generated quote. Should the supplier choose to modify the quote, the algorithm learns from these changes, continually refining its predictive capabilities. This mutually beneficial approach saves suppliers between 60% and 90% of the time typically spent on completing an RFQ.

For Koch, the primary aim of the AI tool is to identify additional sourcing options within its existing supplier network. However, the technology also benefits suppliers by generating new opportunities to expand their business with Koch.

That is a remarkable application of AI to remove friction from a dreadful process that can rarely be automated.
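To make the idea of pre-filling an RFQ from historical data more concrete, here is a minimal sketch in Python. It is not Arkestro's method, which the article does not describe in detail: the `PastOrder` fields, the `draft_rfq` function, and the choice of the median as the predictor are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PastOrder:
    """One historical purchase record (hypothetical fields)."""
    sku: str
    supplier: str
    lead_time_days: int
    quantity: int
    unit_cost: float


def draft_rfq(sku, history):
    """Pre-fill an RFQ for one SKU from past orders for that SKU."""
    rows = [o for o in history if o.sku == sku]
    if not rows:
        raise ValueError(f"no history for SKU {sku}")
    # Pick the supplier seen most often for this SKU; ties go to the first seen.
    suppliers = [o.supplier for o in rows]
    supplier = max(dict.fromkeys(suppliers), key=suppliers.count)
    mine = [o for o in rows if o.supplier == supplier]
    # Assumption: the median of past values is a reasonable first guess.
    return {
        "sku": sku,
        "supplier": supplier,
        "lead_time_days": median(o.lead_time_days for o in mine),
        "quantity": median(o.quantity for o in mine),
        "unit_cost": round(median(o.unit_cost for o in mine), 2),
    }


history = [
    PastOrder("VALVE-2IN", "Acme", 14, 100, 12.50),
    PastOrder("VALVE-2IN", "Acme", 10, 120, 12.00),
    PastOrder("VALVE-2IN", "Borealis", 21, 100, 11.75),
    PastOrder("PUMP-X", "Borealis", 30, 5, 900.0),
]
rfq = draft_rfq("VALVE-2IN", history)
print(rfq)
```

In a sketch like this, a supplier's correction to the draft would simply be appended to `history` as a new `PastOrder`, so that the next draft for the same SKU shifts toward the corrected values.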

Long before GPT-3 took the world by storm, I wrote an essay suggesting how AI could be used to circumvent the need for process and technology standardization, critical (and yet rarely mentioned) to the success of any automation project.

The essay was completely unrelated to the Oil and Gas industry, or to the challenges of supply chain management. In fact, it was focused on the challenges of software automation. But the principle I described in that document was universally applicable to any context where the automation could be applied.

When I wrote that essay, I didn’t know what kind of AI would be able to deal with the myriad of snowflakes that populate the enterprise world. Large language models ended up being the specific implementation for the idea that I had in mind.

If you are interested, it’s here: https://perilli.com/acontrarianview/noops/

In the Education industry, the Ednovate group, six charter schools in Los Angeles, is using AI to draft lesson plans.

Khari Johnson, reporting for Wired:

Recommendations by friends and influential teachers on social media led Ballaret to try MagicSchool, a tool for K-12 educators powered by OpenAI’s text generation algorithms. He used it for tasks like creating math word problems that match his students’ interests, like Taylor Swift and Minecraft, but the real test came when he used MagicSchool this summer to outline a year’s worth of lesson plans for a new applied science and engineering class.

…

a recent poll of 1,000 students and 500 teachers in the US by studying app Quizlet found that more teachers use generative AI than students. A Walton Family Foundation survey early this year found a similar pattern, and that about 70 percent of Black and Latino teachers use the technology weekly.

…

Since its launch roughly four months ago, MagicSchool has amassed 150,000 users, founder Adeel Khan says. The service was initially offered for free but a paid version that costs $9.99 monthly per teacher launches later this month. MagicSchool adapted OpenAI’s technology to help teachers by feeding language models prompts based on best practices informed by Khan’s teaching experience or popular training material. The startup’s tool can help teachers do things like create worksheets and tests, adjust the reading level of material based on a student’s needs, write individualized education programs for students with special needs, and advise teachers on how to address student behavioral problems.

…

All those companies claim generative AI can fight teacher burnout at a time when many educators are leaving the profession. The US is short of about 30,000 teachers, and 160,000 working in classrooms today lack adequate education or training, according to a recent study by Kansas University’s College of Education.

…

At the Ednovate group of six charter schools in Los Angeles where Ballaret works, teachers share tips in a group chat and are encouraged to use generative AI in “every single piece of their instructional practice,” says senior director of academics Lanira Murphy. The group has signed up for the paid version of MagicSchool.

…

In her own AI training sessions for educators, she has encountered other teachers who question whether automating part of their job qualifies as cheating. Murphy responds that it’s no different than pulling things off the internet with a web search—but that just as for any material, teachers must carefully check it over. “It’s your job to look at it before you put it in front of kids,” she says, and verify there’s no bias or illogical content. Ednovate has signed up for the paid version of MagicSchool, even though Murphy says roughly 10 percent of Ednovate teachers she encounters worry AI will take their jobs and replace them.

…

Joseph South, chief learning officer at the International Society for Technology in Education (ISTE), whose backers include Meta and Walmart, says educators are used to gritting their teeth and waiting for the latest education technology fad to pass. He encourages teachers to see the new AI tools with fresh eyes. “This is not a fad,” he says. “I’m concerned about folks who are going to try and sit this one out. There’s no sitting out AI in education.”

If you are reading the Splendid Edition of Synthetic Work, it’s clear that you are not “trying to sit this one out”. But, as I hear from many of you, you are surrounded by many who are trying to do just that. Forward this newsletter to them.

Friends don’t let friends sit out AI. In any industry.

In the Defense industry, the Israel Defense Forces (IDF) is testing AI to optimize the flight path of its reconnaissance and surveillance drones.

Eric Lipton, reporting for the New York Times:

The company the Tseng brothers created in 2015, named Shield AI, is now valued by venture capital investors at $2.7 billion. The firm has 625 employees in Texas, California, Virginia and Abu Dhabi. And the Tsengs’ work is starting to show real-world results, with one of their early products having been deployed by the Israel Defense Forces in the immediate aftermath of the coordinated attacks last month by Hamas.

…

Israeli forces used a small Shield AI drone last month, the company said, to search for barricaded shooters and civilian victims in buildings that had been targeted by Hamas fighters. The drone, called the Nova 2, can autonomously conduct surveillance inside multistory buildings or even underground complexes without GPS or a human pilot.

…

Shield AI’s business plan is to build an A.I. pilot system that can be loaded onto a variety of aerial platforms, from small drones like Nova 2 to fighter jets.

The drones flying over North Dakota demonstrated how far the technology has come. Their mission for the test was to search for ground fire nearby, a task not unlike monitoring troop movements. When the A.I. program kicked in, it created perfectly efficient flight patterns for the three vehicles, avoiding no-fly zones and collisions and wrapping up their work as fast as possible.

…

Shield AI is still losing money, burning through what it has raised from investors as it plows the funding into research — it intends to invest $2 billion over the coming five years to build out its A.I. pilot system.

…

Shield AI’s 125-pound V-Bat drone, lifting off vertically from the remote weapons testing center in North Dakota and filling the air with the smell of fuel, was loaded with software seeking to do far more than what an autopilot program could.

What distinguishes artificial intelligence from the programs that have for decades helped run everything from dishwashers to jetliners is that it is not following a script.

These systems ingest data collected by various sensors — from a plane’s velocity to the wind speed to types of potential threats — and then use their computer brains to carry out specific missions without continuous human direction.

…

“A brilliant autopilot still requires that you tell it where to go or what to do,” said Nathan Michael, Shield AI’s chief technology officer and until recently a research professor at the Robotics Institute of Carnegie Mellon University. “What we are building is a system that can make decisions based on its observations in the world and based on the objectives that it is striving to achieve.”

The advances in the software first grabbed headlines in August 2020, when an early version being developed by a company since acquired by Shield AI had a breakthrough moment in a Pentagon competition called AlphaDogfight. The company’s software defeated programs built by other vendors, including Lockheed Martin, the world’s largest military contractor, and then moved on to a virtual showdown with an Air Force pilot, call sign Banger, who had more than 2,000 hours experience flying an F-16.

…

Again and again, the A.I. pilot quickly defeated the human-piloted jet, in part because the A.I.-guided plane was able to both maneuver more quickly and target its opponent accurately even when making extreme turns.

“The standard things we do as fighter pilots are not working,” the Air Force pilot said just before his virtual plane was destroyed for the fifth and final time.

…

The software that Shield AI is developing for small drones like the Nova 2 that was used in Israel could be loaded onto a robot fighter jet drone that would fly out in front of a human-piloted F-35, looking for missile threats or enemy planes, taking on the risks before the human pilot gets into harm’s way.

The article is long and recommended reading in full.

We saw, in Issue #13 – Hey, great news, you potentially are a problem gambler, that the US Air Force is already testing Shield AI’s technology to create adaptive autopilot AI programs for its fighter jets.

AI-driven armies are inevitable. Just like in sci-fi movies.

If a country doesn’t have one, it will be forced to build one to deter other countries from attacking it.

Even the job of the soldier might disappear at some point.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

Earlier this week, OpenAI competitor Anthropic decided to take advantage of the OpenAI drama to release a new version of their language model, Claude.

Since our launch earlier this year, Claude has been used by millions of people for a wide range of applications—from translating academic papers to drafting business plans and analyzing complex contracts. In discussions with our users, they’ve asked for larger context windows and more accurate outputs when working with long documents.

In response, we’re doubling the amount of information you can relay to Claude with a limit of 200,000 tokens, translating to roughly 150,000 words, or over 500 pages of material. Our users can now upload technical documentation like entire codebases, financial statements like S-1s, or even long literary works like The Iliad or The Odyssey. By being able to talk to large bodies of content or data, Claude can summarize, perform Q&A, forecast trends, compare and contrast multiple documents, and much more.
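The arithmetic behind those figures is easy to reproduce. The sketch below only restates the ratios implied by the announcement (200,000 tokens to roughly 150,000 words to roughly 500 pages); the constants and function names are assumptions for illustration, and real ratios vary with the tokenizer, the language, and the page layout.

```python
# Rules of thumb implied by the announcement, not exact figures.
WORDS_PER_TOKEN = 0.75  # 150,000 words / 200,000 tokens
WORDS_PER_PAGE = 300    # assumption: 150,000 words / 500 pages


def tokens_to_words(tokens):
    """Approximate number of English words that fit in `tokens` tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def tokens_to_pages(tokens):
    """Approximate number of pages that fit in `tokens` tokens."""
    return round(tokens_to_words(tokens) / WORDS_PER_PAGE)


window = 200_000  # Claude 2.1 context window, in tokens
print(f"{window:,} tokens ~ {tokens_to_words(window):,} words "
      f"~ {tokens_to_pages(window)} pages")
```

The same two functions can be used to get a rough feel for any other context window size.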

If you have read Synthetic Work since the beginning, you know how much I stressed the importance of a large context window for language models as the key enabler for a wide range of commercial applications that are impossible to build today.

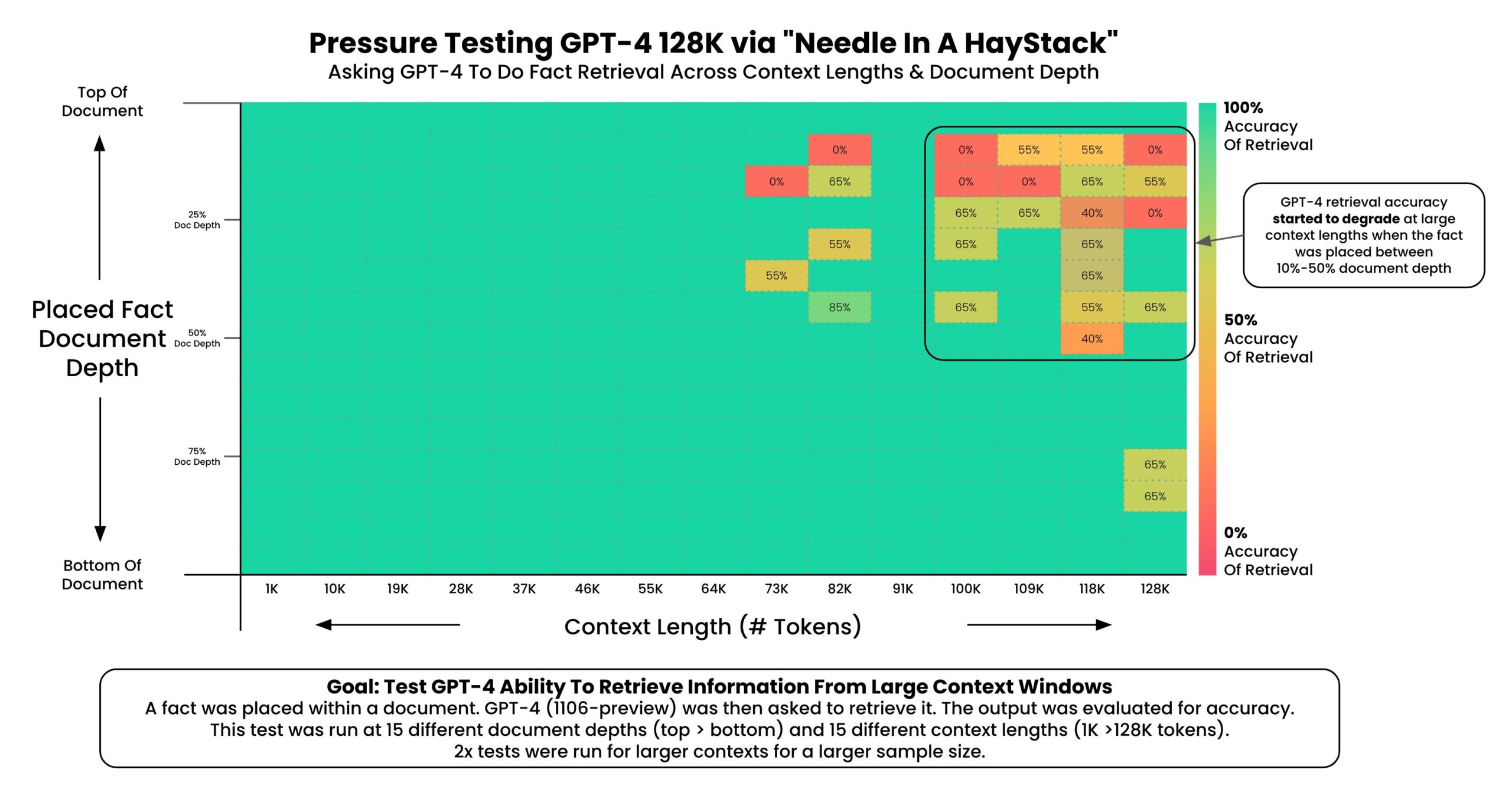

OpenAI’s recent launch of GPT-4-Turbo introduced a 128K token context window, and most people on the planet have yet to see how transformative that is. But more is better, so this new Claude 2.1 is a model to pay close attention to.

Except that it doesn’t work. At all.

Recently published research revealed that the larger the context window, the more the model is prone to hallucinate when asked about content in the middle and, occasionally, at the end of the context window.

In other words, if you upload a book of 300 pages into an AI system like ChatGPT or Claude, and you ask the underlying AI model to chat about that book, the model will start hallucinating about any content that is in the middle of the book and, occasionally, about any content that is at the end of the book.

Mitigating this problem is the biggest obstacle to overcome before we can take advantage of upcoming models with a 1M token context window.

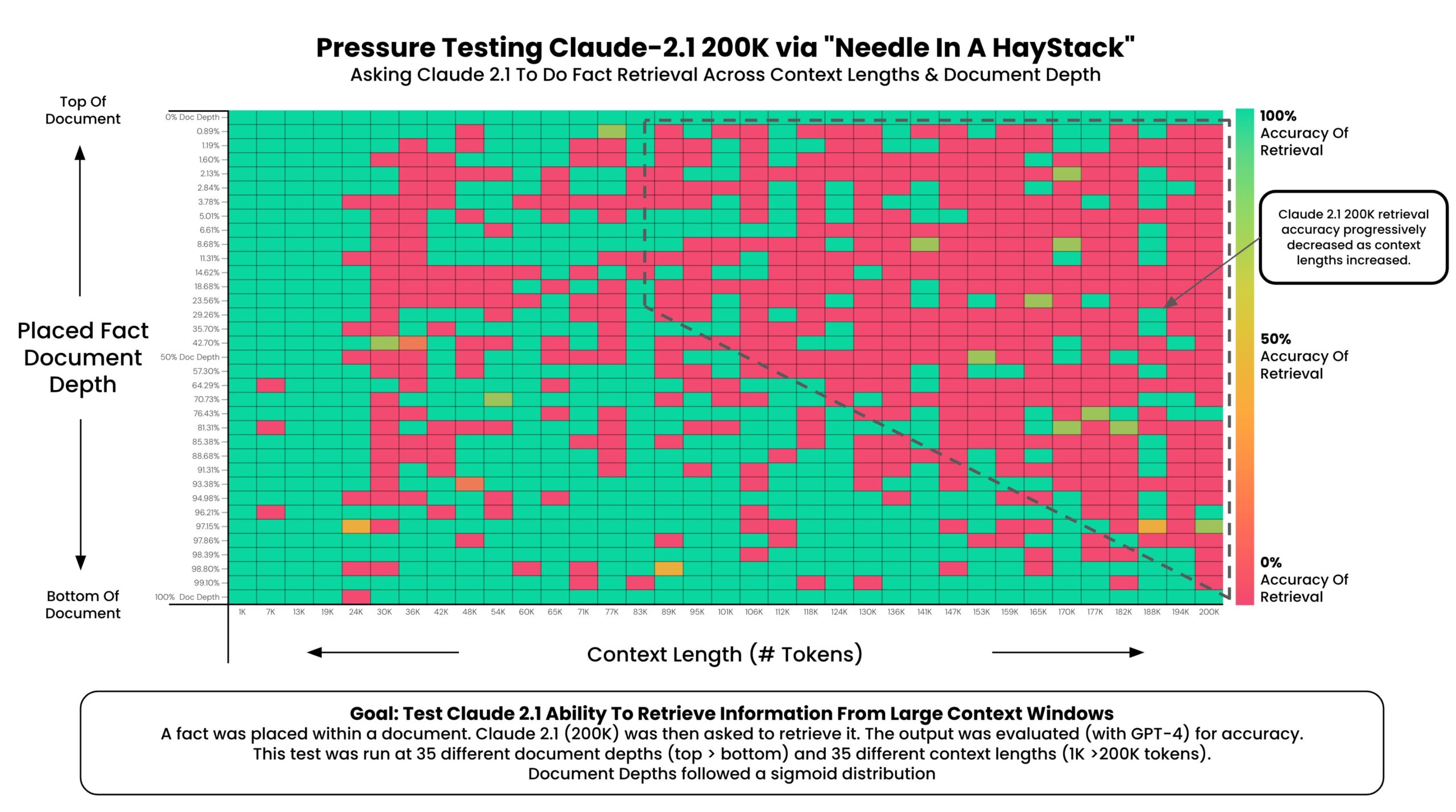

Some AI companies do a better job than others in mitigating this issue. Anthropic is not doing a good job at that:

As you can see from the chart, the deeper the AI model is asked to look into the context window, the more it becomes unreliable.

And, of course, this is not just about documents you upload into your AI system of choice. It’s also about the length of an ongoing conversation. The longer you talk to Claude 2.1, the more unreliable it becomes, just like any other AI model. But Claude 2.1 is especially unreliable when compared to GPT-4-Turbo:

The author of this study, Greg Kamradt, gives more valuable information in a thread on X:

Findings:

* At 200K tokens (nearly 470 pages), Claude 2.1 was able to recall facts at some document depths

* Facts at the very top and very bottom of the document were recalled with nearly 100% accuracy

* Facts positioned at the top of the document were recalled with less performance than the bottom (similar to GPT-4)

* Starting at ~90K tokens, performance of recall at the bottom of the document started to get increasingly worse

* Performance at low context lengths was not guaranteed

So what:

* Prompting Engineering Matters – It’s worth tinkering with your prompt and running A/B tests to measure retrieval accuracy

* No Guarantees – Your facts are not guaranteed to be retrieved. Don’t bake the assumption they will into your applications

* Less context = more accuracy – This is well know, but when possible reduce the amount of context you send to the models to increase its ability to recall

* Position Matters – Also well know, but facts placed at the very beginning and 2nd half of the document seem to be recalled better
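For readers who want to run this kind of check on their own documents, the general shape of such a recall test can be sketched in a few lines of Python. This is not the code used in the study: `build_context`, `recall_grid`, and the `forgetful_model` stand-in are hypothetical names, lengths are counted in words rather than tokens, and in practice `ask` would wrap a call to a real model.

```python
from typing import Callable


def build_context(filler: str, needle: str, n_words: int, depth: float) -> str:
    """Return n_words of filler with the needle inserted at `depth`
    (0.0 = top of the document, 1.0 = bottom)."""
    base = filler.split()
    words = (base * (n_words // len(base) + 1))[:n_words]
    cut = int(len(words) * depth)
    return " ".join(words[:cut] + [needle] + words[cut:])


def recall_grid(ask: Callable[[str, str], str], filler, needle, question,
                expected, lengths, depths):
    """Map (length, depth) to True if the answer contains the expected fact."""
    results = {}
    for n in lengths:
        for d in depths:
            answer = ask(build_context(filler, needle, n, d), question)
            results[(n, d)] = expected.lower() in answer.lower()
    return results


def forgetful_model(context: str, question: str) -> str:
    """Stand-in for a real model: it only 'reads' the first and last 100 words."""
    words = context.split()
    visible = " ".join(words[:100] + words[-100:])
    return "4217" if "4217" in visible else "I don't know"


grid = recall_grid(
    forgetful_model,
    filler="lorem ipsum dolor sit amet",
    needle="The secret code is 4217.",
    question="What is the secret code?",
    expected="4217",
    lengths=[150, 1000],
    depths=[0.0, 0.5, 1.0],
)
for (n, d), ok in sorted(grid.items()):
    print(f"{n:>5} words, depth {d:.1f}: {'recalled' if ok else 'missed'}")
```

With the stand-in model, the short context is recalled at every depth, while the long one misses the fact placed in the middle. Swapping `forgetful_model` for a function that calls an actual model turns the sketch into a small A/B harness for measuring retrieval accuracy across prompts.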

Once again, here is proof that prompt engineering still matters, despite what many AI experts out there insist on saying.

On top of these tests, my own tests with Claude 2.1, on much shorter content, revealed very disappointing results in terms of quality of the answers and capability to manipulate the provided content.

The bottom line is that Anthropic’s AI models are not ready for prime time.